Understanding Neural Networks and Their Components

Apr 08, 2026 (Last Updated)

How do machines recognize patterns in images or speech without explicit rules? Neural networks make this possible by learning directly from data through layered computations. Their main impact lies in handling complex, non-linear relationships that traditional methods struggle to capture.

Read this blog to understand neural networks and their components and why each element matters in real-world applications.

Quick Answer:

Neural networks learn from data through layers using neurons, weights, bias, and activation functions to model non-linear relationships. Components include input, hidden, output layers, loss functions, optimizers, and backpropagation. Regularization and learning rate control training. Types such as CNNs, RNNs, LSTMs, and transformers support scalable, adaptable performance across real-world tasks.

- Some industry forecasts project that the global AI market, driven in large part by neural networks, will exceed $1 trillion by 2030.

- Deep learning has reached roughly human-level results on standard benchmarks, with ~3.5% top-5 image classification error on ImageNet and ~5.9% word error rate on conversational speech recognition.

- GPT-3 has 175 billion parameters, highlighting the scale of modern neural networks.

Table of contents

- What are Neural Networks?

- Key Components of a Neural Network

- Neurons (Nodes)

- Layers

- Weights

- Bias

- Activation Functions

- Loss Function

- Optimizer

- Backpropagation

- Regularization Techniques

- Learning Rate and Training Dynamics

- How Neural Network Layers Work (Step-by-Step Mechanism)

- Input Layer → Receiving Raw Data

- Hidden Layers → Feature Transformation

- Output Layer → Final Prediction

- Types of Neural Networks

- Conclusion

- FAQs

- What is the main purpose of a neural network?

- What are the key components of a neural network?

- Which type of neural network is most commonly used today?

What are Neural Networks?

Neural networks are computational models that learn from data through layered transformations using weights, biases, and activation functions. By training with gradient-based optimization, they capture complex non-linear relationships that traditional methods struggle to model. Their key strength lies in learning hierarchical representations directly from raw data, which supports strong performance across domains such as natural language processing and speech recognition.

Key Components of a Neural Network

A neural network is a parameterized function that maps inputs to outputs through a sequence of linear transformations and non-linear operations. Its effectiveness depends on how each component contributes to representation learning, optimization, and generalization.

1. Neurons (Nodes)

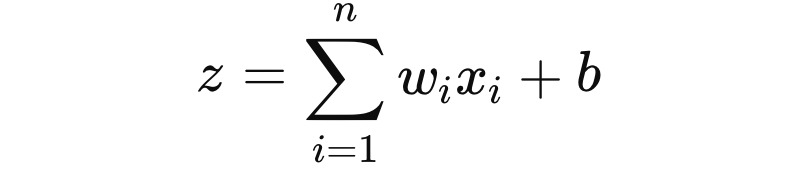

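A neuron performs a parametric transformation of its inputs. Mathematically, it computes a weighted sum of its inputs plus a bias:

$$z = \sum_{i=1}^{n} w_i x_i + b$$

followed by an activation function a=ϕ(z). Here $x_i$ are the inputs, $w_i$ the weights, and $b$ the bias.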
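As a minimal sketch of the computation a single neuron performs (a weighted sum plus a bias, passed through an activation), the function below uses plain Python. The names `neuron` and `relu` and the numeric values are illustrative assumptions, not part of any library.

```python
import math


def relu(z):
    """Rectified linear unit: max(0, z)."""
    return max(0.0, z)


def neuron(inputs, weights, bias, activation=math.tanh):
    """Return a = phi(z), where z = sum_i w_i * x_i + b."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Illustrative values: z = 0.5*1.0 + (-0.25)*2.0 + 0.1 = 0.1
output = neuron([1.0, 2.0], [0.5, -0.25], bias=0.1, activation=relu)
```

Swapping the `activation` argument changes the neuron's non-linearity without touching the weighted-sum step.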

Each neuron acts as a feature detector. In early layers, neurons respond to simple patterns such as edges in images or token-level signals in text. In deeper layers, neurons encode higher-level abstractions such as object parts or semantic relationships.

Modern architectures often extend this concept. Convolutional neurons operate over local receptive fields. Attention-based neurons compute weighted relationships across all inputs, as seen in transformer models.

2. Layers

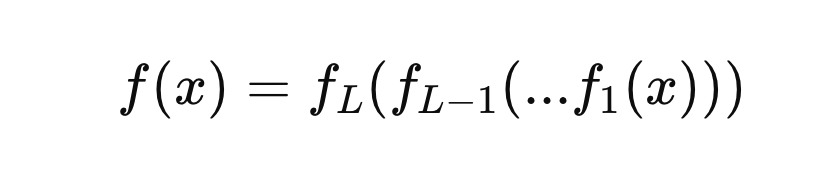

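Layers organize neurons into structured transformations. A neural network with $L$ layers can be interpreted as a composition of functions:

$$f(x) = f_L(f_{L-1}(\cdots f_1(x)))$$

where each $f_l$ is the transformation applied by one layer. The layers fall into three groups: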

- Input Layer: Represents raw features such as pixel intensities, token embeddings, or numerical variables. It does not perform computation but defines the input dimensionality.

- Hidden Layers: Perform hierarchical feature extraction. Each layer transforms the representation space. The universal approximation theorem shows that even one sufficiently wide hidden layer can approximate any continuous function on a compact domain; in practice, deeper networks often represent complex functions more efficiently, as empirical results in computer vision and NLP show.

- Output Layer: Produces task-specific outputs. For classification, it often uses softmax to generate probability distributions. For regression, it may use linear activation.

Depth influences expressivity, while width influences capacity. However, deeper models require careful regularization and optimization to avoid vanishing gradients and overfitting.
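To make the depth and width trade-off concrete, the sketch below counts the learnable parameters of a fully connected network from its layer sizes. The helper name `count_parameters` and the example layer sizes are assumptions chosen for illustration.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of units per layer, input layer first.
    """
    total = 0
    for fan_in, fan_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        total += fan_in * fan_out  # entries of the weight matrix
        total += fan_out           # one bias per unit
    return total


# Two hypothetical networks for a 784-feature input and 10 classes
deep_narrow = count_parameters([784, 128, 64, 10])
shallow_wide = count_parameters([784, 256, 10])
```

In this example the deeper, narrower network has 109,386 parameters and the shallower, wider one has 203,530, so adding depth does not necessarily mean adding parameters.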

3. Weights

Weights are the primary learnable parameters. They define the strength and direction of connections between neurons.

During training, weights are updated to minimize the loss function. In matrix form, a layer computes:

$$z = Wx + b$$

where $W$ is the weight matrix, $x$ is the input vector, and $b$ is the bias vector.

Weight initialization is critical. Poor initialization can lead to unstable gradients. Techniques such as Xavier and He initialization maintain variance across layers, supporting stable training.
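The two initialization schemes mentioned above can be sketched with the standard library alone. This is an illustrative version under stated assumptions: the function names, the list-of-lists matrix layout (one row per output unit), and the layer sizes are hypothetical. The formulas are the commonly cited ones: a uniform limit of sqrt(6 / (fan_in + fan_out)) for Xavier and a standard deviation of sqrt(2 / fan_in) for He.

```python
import math
import random


def xavier_uniform(fan_in, fan_out, rng):
    """Xavier/Glorot uniform: U(-limit, limit), limit = sqrt(6 / (fan_in + fan_out))."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_in)]
            for _ in range(fan_out)]


def he_normal(fan_in, fan_out, rng):
    """He normal: N(0, std^2), std = sqrt(2 / fan_in); often paired with ReLU."""
    std = math.sqrt(2.0 / fan_in)
    return [[rng.gauss(0.0, std) for _ in range(fan_in)]
            for _ in range(fan_out)]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
W_xavier = xavier_uniform(fan_in=256, fan_out=128, rng=rng)
W_he = he_normal(fan_in=256, fan_out=128, rng=rng)
```

Both schemes scale the spread of the initial weights by the layer's size, which is what keeps activation and gradient variance roughly constant from layer to layer.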

From a statistical perspective, weights encode learned correlations between features and targets. In overparameterized models, many different weight settings fit the training data equally well, and gradient-based optimization tends to converge to ones that generalize, an effect known as implicit regularization.

4. Bias

Bias terms shift a neuron's pre-activation value independently of the inputs. Without bias, each neuron's weighted sum is zero whenever all of its inputs are zero, so its decision boundary is forced through the origin, limiting its ability to fit real-world data.

Bias improves model flexibility, particularly when input distributions are not centered. In practice, bias parameters are learned alongside weights and play a critical role in fine-grained adjustments of neuron outputs.
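A small sketch of why the bias matters, using a hypothetical one-input linear unit `linear_unit` (the name and values are illustrative assumptions): without a bias the output at x = 0 is pinned to zero regardless of the weight, while a bias lets the same unit represent a line with a non-zero intercept.

```python
def linear_unit(x, w, b=0.0):
    """A single linear unit: w * x + b."""
    return w * x + b


# Without a bias, the output at x = 0 is 0 for every choice of weight.
outputs_without_bias = [linear_unit(0.0, w) for w in (-2.0, 0.5, 3.0)]

# With a bias, the same unit can represent y = 2x + 3 exactly.
outputs_with_bias = [linear_unit(x, w=2.0, b=3.0) for x in (0.0, 1.0, 2.0)]
```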

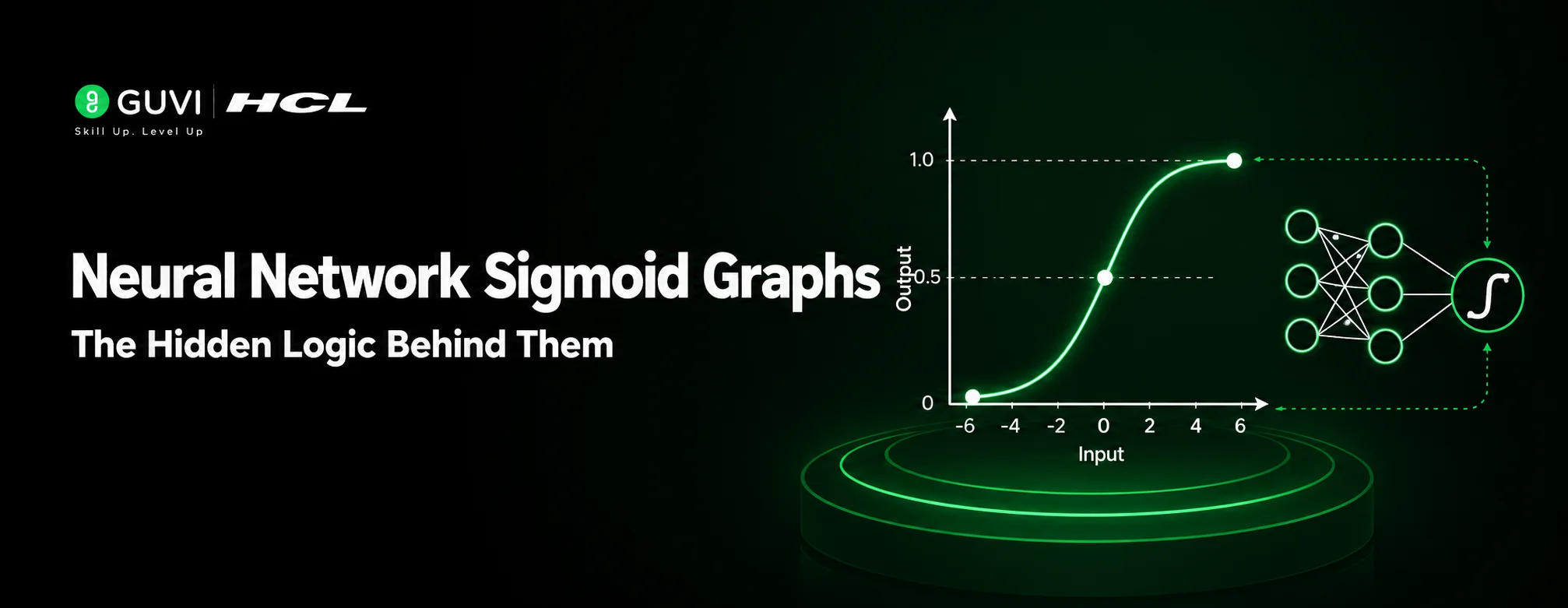

5. Activation Functions

Activation functions introduce non-linearity, which is essential for modeling complex relationships.

Common activation functions:

- ReLU (Rectified Linear Unit): max(0,x). Efficient and widely used in deep networks due to sparse activation and reduced vanishing gradient issues.

- Sigmoid: Maps inputs to (0,1). Used in binary classification but prone to gradient saturation.

- Tanh: Zero-centered output in (-1,1), often preferred over sigmoid in hidden layers.

Advanced variants such as Leaky ReLU, GELU, and Swish improve gradient flow and performance in deeper architectures.

Activation choice directly impacts convergence speed and representational capacity. For example, GELU is commonly used in transformer-based models: it is smooth and weights each input by the standard normal CDF, which gives it a probabilistic interpretation.
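The activation functions named in this section can be written in a few lines each. The sketch below uses only the standard library. The default `negative_slope` of 0.01 for Leaky ReLU is a common choice but an assumption here, and `gelu` uses the exact erf-based form rather than the tanh approximation.

```python
import math


def relu(x):
    return max(0.0, x)


def leaky_relu(x, negative_slope=0.01):
    return x if x > 0 else negative_slope * x


def sigmoid(x):
    # Branch on the sign so math.exp never overflows for large |x|
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def tanh(x):
    return math.tanh(x)


def gelu(x):
    # Exact GELU: x * Phi(x), where Phi is the standard normal CDF
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def swish(x):
    return x * sigmoid(x)
```

Note that `sigmoid` saturates toward 0 or 1 for large inputs, which is the source of the gradient saturation mentioned above, while `relu` passes positive inputs through unchanged.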

6. Loss Function

The loss function quantifies prediction error and defines the optimization objective.

Common loss functions:

- Mean Squared Error (MSE) for regression

- Cross-Entropy Loss for classification

- Binary Cross-Entropy for binary classification

The loss surface determines how gradients behave during optimization. A well-chosen loss function aligns closely with the evaluation metric, improving model reliability in real-world deployment.
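The three losses listed above can be sketched as follows. The function names and the clipping constant `eps` (used to avoid taking the log of zero) are illustrative assumptions; inputs are plain Python lists.

```python
import math


def mean_squared_error(y_true, y_pred):
    """Average squared difference, for regression targets."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def cross_entropy(true_class, probs, eps=1e-12):
    """Negative log-probability assigned to the correct class."""
    return -math.log(max(probs[true_class], eps))


def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Average BCE over examples; y_true holds 0/1 labels."""
    total = 0.0
    for t, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep log() finite
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)
```

For instance, a classifier that assigns probability 0.92 to the correct class incurs a cross-entropy of about 0.083, while one that assigns 0.08 incurs about 2.53, so confident mistakes are penalized far more heavily.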

7. Optimizer

Optimizers update weights and biases using gradients computed via backpropagation.

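- Gradient Descent: Updates parameters in the direction of the negative gradient, computed over the full training set.

- Stochastic Gradient Descent (SGD): Estimates the gradient from a single example or, more commonly, a mini-batch, which lowers the cost of each update and often helps generalization.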

- Adam (Adaptive Moment Estimation): Combines momentum and adaptive learning rates, widely used in practice.

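The update rule for a parameter θ is:

$$\theta \leftarrow \theta - \eta \, \nabla_\theta \mathcal{L}(\theta)$$

where η is the learning rate and $\nabla_\theta \mathcal{L}(\theta)$ is the gradient of the loss with respect to θ.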

Optimization stability depends on learning rate scheduling, batch size, and gradient clipping. Poor configuration can lead to divergence or slow convergence.
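As a rough illustration of how these optimizers apply their updates, the sketch below minimizes a toy one-parameter loss, (θ − 3)², with plain gradient descent and with Adam. The toy loss, the function names, and the hyperparameter values are assumptions for illustration; the Adam defaults shown (0.9, 0.999, 1e-8) are the commonly used ones.

```python
import math


def grad(theta):
    """Gradient of the toy loss L(theta) = (theta - 3)^2."""
    return 2.0 * (theta - 3.0)


def gradient_descent(theta, lr=0.1, steps=100):
    for _ in range(steps):
        theta = theta - lr * grad(theta)  # theta <- theta - eta * gradient
    return theta


def adam(theta, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    m, v = 0.0, 0.0  # running averages of the gradient and squared gradient
    for t in range(1, steps + 1):
        g = grad(theta)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        m_hat = m / (1 - beta1 ** t)  # bias correction for the zero start
        v_hat = v / (1 - beta2 ** t)
        theta = theta - lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta


theta_gd = gradient_descent(0.0)
theta_adam = adam(0.0)
```

Both runs start at θ = 0 and approach the minimum at θ = 3. With real networks the gradient would come from backpropagation over a mini-batch rather than from a closed-form expression.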

8. Backpropagation

Backpropagation is the algorithm that computes gradients of the loss function with respect to each parameter using the chain rule of calculus.

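It propagates error signals from the output layer back to earlier layers. For the weights $W^{(l)}$ of layer $l$, with pre-activation $z^{(l)}$ and activation $a^{(l)}$, the chain rule gives:

$$\frac{\partial \mathcal{L}}{\partial W^{(l)}} = \frac{\partial \mathcal{L}}{\partial a^{(l)}} \cdot \frac{\partial a^{(l)}}{\partial z^{(l)}} \cdot \frac{\partial z^{(l)}}{\partial W^{(l)}}$$

where $\partial \mathcal{L} / \partial a^{(l)}$ is itself computed from the gradients of layer $l+1$.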

This process enables efficient training of deep networks with millions or billions of parameters.
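A minimal sketch of the chain rule at work, assuming a hypothetical two-weight network (one tanh hidden unit feeding one linear output) and a squared-error loss. The analytic gradients from `backward` are compared against finite-difference estimates, a common way to check a backpropagation implementation. All names and values are illustrative.

```python
import math


def forward(w1, w2, x):
    h = math.tanh(w1 * x)  # hidden activation
    y_hat = w2 * h         # linear output
    return h, y_hat


def loss(w1, w2, x, y):
    _, y_hat = forward(w1, w2, x)
    return 0.5 * (y_hat - y) ** 2


def backward(w1, w2, x, y):
    """Apply the chain rule from the output back to the first weight."""
    h, y_hat = forward(w1, w2, x)
    dL_dyhat = y_hat - y            # error at the output
    dL_dw2 = dL_dyhat * h           # gradient for the output weight
    dL_dh = dL_dyhat * w2           # error passed back to the hidden unit
    dL_dz1 = dL_dh * (1.0 - h * h)  # through tanh: d tanh(z)/dz = 1 - tanh(z)^2
    dL_dw1 = dL_dz1 * x             # gradient for the hidden weight
    return dL_dw1, dL_dw2


def numerical_gradients(w1, w2, x, y, step=1e-6):
    """Central finite differences, used only to check backward()."""
    g1 = (loss(w1 + step, w2, x, y) - loss(w1 - step, w2, x, y)) / (2 * step)
    g2 = (loss(w1, w2 + step, x, y) - loss(w1, w2 - step, x, y)) / (2 * step)
    return g1, g2


analytic = backward(0.4, -0.7, 1.5, 1.0)
numeric = numerical_gradients(0.4, -0.7, 1.5, 1.0)
```

The backward pass reuses the values computed in the forward pass, which is why one forward and one backward sweep suffice to obtain every gradient.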

9. Regularization Techniques

To improve generalization and reduce overfitting:

- L1 and L2 Regularization: Add penalty terms to the loss function

- Dropout: Randomly deactivates neurons during training

- Batch Normalization: Stabilizes training by normalizing layer inputs

These methods control model complexity and improve robustness across unseen data.
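The techniques above can be sketched in simplified form. The helpers below are illustrative assumptions rather than any library's API: `dropout` uses the common "inverted" variant, which rescales surviving activations during training, and `batch_norm` omits the learnable scale and shift parameters that full batch normalization includes.

```python
import math
import random


def l1_penalty(weights, lam):
    """L1 term added to the loss: lam * sum |w|."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """L2 term added to the loss: lam * sum w^2."""
    return lam * sum(w * w for w in weights)


def dropout(activations, drop_prob, rng, training=True):
    """Inverted dropout: zero units with probability drop_prob, rescale the rest."""
    if not training or drop_prob == 0.0:
        return list(activations)
    keep_prob = 1.0 - drop_prob
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


def batch_norm(values, eps=1e-5):
    """Normalize a batch of scalar activations to zero mean and unit variance."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
dropped = dropout([1.0] * 10000, drop_prob=0.5, rng=rng)
normalized = batch_norm([2.0, 4.0, 6.0, 8.0])
```

Because of the rescaling, the expected activation is the same with and without dropout, so nothing needs to change at inference time beyond passing `training=False`.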

10. Learning Rate and Training Dynamics

The learning rate controls the step size during optimization. A high learning rate can cause instability, while a low rate slows convergence.

Schedulers such as step decay and cosine annealing adjust the learning rate during training. Warm-up strategies are commonly used in large-scale models to stabilize early training phases.
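The schedules mentioned above are simple functions of the training step. The sketch below is one possible formulation: the function names, the linear warm-up shape, and the default decay factor are assumptions chosen for illustration.

```python
import math


def step_decay(base_lr, step, drop_every, factor=0.1):
    """Multiply the learning rate by `factor` every `drop_every` steps."""
    return base_lr * factor ** (step // drop_every)


def cosine_annealing(base_lr, step, total_steps, min_lr=0.0):
    """Decay from base_lr to min_lr along half a cosine wave."""
    progress = min(step, total_steps) / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


def warmup_then_cosine(base_lr, step, warmup_steps, total_steps):
    """Linear warm-up to base_lr, then cosine annealing for the remaining steps."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    return cosine_annealing(base_lr, step - warmup_steps,
                            total_steps - warmup_steps)


schedule = [warmup_then_cosine(0.1, s, warmup_steps=10, total_steps=100)
            for s in range(100)]
```

In `schedule`, the learning rate rises over the first 10 steps, peaks at 0.1, and then decays smoothly toward zero.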

How Neural Network Layers Work (Step-by-Step Mechanism)

A neural network learns by passing data through multiple layers, transforming it step by step. This process involves two key phases: forward propagation and backpropagation.

1. Input Layer → Receiving Raw Data

- The input layer accepts raw features such as:

  - Pixel values (images)

  - Word embeddings (text)

  - Numerical variables (tabular data)

- No computation happens here; it simply passes data forward.

2. Hidden Layers → Feature Transformation

- Hidden layers perform the core computation.

- Each neuron:

  - Computes a weighted sum of its inputs

  - Adds a bias

  - Applies an activation function

- As data flows deeper:

  - Early layers → learn simple patterns (edges, tokens)

  - Middle layers → learn combinations (shapes, phrases)

  - Deep layers → learn abstractions (objects, semantics)

This is called hierarchical representation learning.

3. Output Layer → Final Prediction

- Produces the final result based on the task:

  - Classification → probabilities (Softmax/Sigmoid)

  - Regression → continuous values

- Example:

  - Image → “Cat (0.92), Dog (0.08)”
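The three stages above can be put together in a short forward-pass sketch. Everything here is an illustrative assumption: the layer sizes (3 inputs, 4 hidden units, 2 classes), the input values, and the random, untrained weights, so the resulting probabilities are arbitrary rather than meaningful predictions.

```python
import math
import random


def dense(inputs, weights, biases):
    """One fully connected layer: z_j = sum_i W[j][i] * x_i + b_j."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    return [max(0.0, v) for v in values]


def softmax(logits):
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - shift) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def forward(x, params):
    (W1, b1), (W2, b2) = params
    hidden = relu(dense(x, W1, b1))        # hidden layer: feature transformation
    return softmax(dense(hidden, W2, b2))  # output layer: class probabilities


rng = random.Random(42)  # fixed seed so the sketch is reproducible


def random_matrix(rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


params = [(random_matrix(4, 3), [0.0] * 4), (random_matrix(2, 4), [0.0] * 2)]
probs = forward([0.2, -0.5, 1.0], params)  # input layer: raw feature values
labels = ["Cat", "Dog"]
prediction = labels[probs.index(max(probs))]
```

The softmax output always sums to 1, which is what lets it be read as a probability distribution over the classes.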


Types of Neural Networks

- Feedforward Neural Networks (FNN): The simplest architecture where data moves in one direction from input to output. Commonly used for structured data tasks such as basic classification and regression.

- Convolutional Neural Networks (CNN): Designed for spatial data such as images. They use convolutional layers to capture local patterns like edges and textures, making them effective in computer vision tasks.

- Recurrent Neural Networks (RNN): Built for sequential data where order matters. They retain information from previous steps, which supports tasks such as language modeling and time series forecasting.

- Long Short-Term Memory Networks (LSTM): A specialized form of RNN that uses gated memory cells to overcome the short-term memory limitations of standard RNNs, which stem from vanishing gradients. It can capture long-range dependencies, which is useful in text generation and speech recognition.

- Transformer Networks: Based on attention mechanisms rather than sequence-based recurrence. They process data in parallel and are widely used in modern natural language processing tasks due to their efficiency and accuracy.
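Two of the operations that distinguish these architectures can be sketched briefly: the sliding-window operation behind convolutional layers and the scaled dot-product attention behind transformers. This is a simplified illustration with assumed names and toy inputs. It handles a single 1D signal and a single query vector, and omits the learned projections, multiple heads, and batching that real models use.

```python
import math


def conv1d(signal, kernel):
    """Valid 1D cross-correlation: slide the kernel over local windows."""
    k = len(kernel)
    return [sum(kernel[j] * signal[i + j] for j in range(k))
            for i in range(len(signal) - k + 1)]


def softmax(scores):
    shift = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)  # how strongly the query attends to each position
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return output, weights


# A difference kernel responds where the signal changes: a simple edge detector
edges = conv1d([0, 0, 1, 1, 1, 0], kernel=[-1, 1])

output, weights = attention([1.0, 0.0],
                            keys=[[1.0, 0.0], [0.0, 1.0]],
                            values=[[1.0, 0.0], [0.0, 1.0]])
```

Here `edges` is `[0, 1, 0, 0, -1]`, marking the rising and falling edges of the signal. The convolution only ever sees two neighboring values at a time, whereas attention weighs every position against the query in a single step.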

Conclusion

Neural networks in AI and ML are practical systems that learn patterns directly from data and improve with experience. When you break them down into components such as neurons, layers, weights, and optimization methods, their behavior becomes more interpretable and easier to work with. This clarity is important when building reliable models, selecting the right architecture, or diagnosing performance issues.

FAQs

1. What is the main purpose of a neural network?

The main purpose of a neural network is to learn patterns from data and make predictions or decisions, especially in cases where relationships are complex and non-linear.

2. What are the key components of a neural network?

The key components include neurons, layers, weights, bias, activation functions, loss functions, and optimizers, all of which work together during training to learn from data.

3. Which type of neural network is most commonly used today?

Transformer networks are widely used today, particularly in natural language processing tasks, due to their ability to process large amounts of data efficiently and capture long-range dependencies.
