Escaping the Trap: A Beginner’s Guide to Local Search Algorithms in AI

May 04, 2026 6 Min Read 25 Views

(Last Updated)

Here is something nobody tells you when you start learning AI. Most of the hard problems are not prediction problems. They are decision problems. And the two are completely different. When I first understood this distinction, it changed how I thought about the field. ChatGPT predicts the next token. Your Netflix recommendations predict what you will click. But a hospital scheduling system or a shipping route optimizer is not predicting anything. It is choosing. Specifically, it is trying to choose the best possible option from a search space so large that even listing all the candidates would take longer than the universe has existed.

This is what Local Search Algorithms were built for. They will not generate a painting or write a cover letter. But if you care about AI problem solving, these algorithms deserve your attention.

Quick Answer:

Local Search Algorithms in AI are optimization techniques used to solve complex decision problems where exploring every possible solution is impractical. Instead of exhaustive search, they start with an initial solution and improve it step-by-step using heuristics. Algorithms like Hill Climbing quickly find good solutions but can get stuck in local optima, while Simulated Annealing introduces controlled randomness to escape these traps. These methods are widely used in real-world applications such as route optimization, scheduling, and resource allocation, where near-optimal solutions delivered quickly are more valuable than perfect solutions that take too long to compute.

Table of contents

- What Are Local Search Algorithms in AI?

- Benefits of Local Search Algorithms

- Types of Local Search Algorithms

- Hill Climbing

- Simulated Annealing

- Beam Search

- Genetic Algorithms

- Tabu Search

- Random Restart Hill Climbing

- Stochastic Hill Climbing

- A Problem That Should Be Simple (But Isn’t)

- The Mental Model: Landscape and Hikers

- Hill Climbing: The Algorithm That Always Moves Downhill

- The Local Optima Trap

- Simulated Annealing: The Controlled Risk-Taker

- Why This Works?

- Hill Climbing vs. Simulated Annealing: What Actually Matters

- Real-World Application: Route Optimization in Logistics

- The Code: Seeing Both Algorithms Side by Side

- Conclusion

- FAQs

- Why are local search algorithms preferred over exhaustive search in AI?

- What is the role of a cost function in local search algorithms?

- What does “neighboring solution” mean in local search?

- Can local search algorithms guarantee the best solution?

- What factors affect the performance of Simulated Annealing?

What Are Local Search Algorithms in AI?

Local Search Algorithms are heuristic optimization techniques used in AI to solve problems with large or continuous search spaces where exhaustive search is impractical. They start with a single solution and iteratively improve it through small local changes guided by a cost or objective function. By evaluating neighboring states, they move toward better solutions either deterministically, as in Hill Climbing, or probabilistically, as in Simulated Annealing. Unlike tree-based search methods, they do not store paths, making them memory-efficient and scalable. They are widely used for NP-hard problems like routing, scheduling, and resource allocation, where near-optimal solutions are sufficient.

Benefits of Local Search Algorithms

- Efficient Route Optimization: Quickly finds near-optimal paths in problems like delivery routing and navigation without evaluating all possible combinations.

- Real-Time Scheduling Capability: Uses Artificial Intelligence to dynamically adapt to changing constraints in workforce scheduling and timetabling, ensuring efficient and responsive decision-making.

- Handles NP-Hard Problems Practically: Provides usable solutions for complex problems like the Travelling Salesman Problem where exact methods are infeasible.

- Minimal Computational Overhead: Requires low memory and processing power, making it suitable for large-scale and embedded systems.

Types of Local Search Algorithms

Local search algorithms can be broadly categorized based on how they explore the search space and handle local optima. Here are the most important types:

1. Hill Climbing

A greedy algorithm that always moves to a better neighboring solution. It is fast and memory-efficient but often gets stuck in local optima.

2. Simulated Annealing

A probabilistic algorithm that occasionally accepts worse solutions to escape local minima. It balances exploration and exploitation using a temperature parameter.

3. Beam Search

Maintains multiple candidate solutions at each step instead of just one. It explores several paths simultaneously, improving the chances of finding better solutions.

4. Genetic Algorithms

Inspired by natural evolution, this method works with a population of solutions. It uses operations like selection, crossover, and mutation to evolve better solutions over time.

5. Tabu Search

Enhances local search by maintaining a memory of recently visited solutions (tabu list) to avoid cycling back and getting stuck in local optima.

6. Random Restart Hill Climbing

Runs Hill Climbing multiple times from different random starting points. This increases the probability of finding a better or near-global optimum solution.

7. Stochastic Hill Climbing

Instead of always picking the best neighbor, it randomly selects among better neighbors. This adds slight randomness and helps avoid early convergence.

In this blog, we will discuss local search algorithms in AI and how techniques like Hill Climbing and Simulated Annealing solve complex optimization problems.

A Problem That Should Be Simple (But Isn’t)

Consider the classic Travelling Salesman Problem (TSP), one of the most studied problems in AI optimization and computer science. The setup looks simple: one truck, 50 cities, visit each city exactly once, and return to the starting point while minimizing total distance. At first glance, the solution seems obvious, just evaluate every possible route and pick the shortest one.

But this is where complexity explodes. The number of possible routes is 50! (50 factorial), an astronomically large number with 64 digits. Even if a supercomputer evaluated one billion routes per second continuously, it would not come close to solving the problem within a realistic timeframe. This is a classic example of combinatorial explosion, where the search space grows faster than computational power can handle.

This is why brute-force methods fail in real-world AI systems. Instead, practitioners rely on heuristic search algorithms, which sacrifice guaranteed optimality in exchange for speed and scalability. These methods focus on finding near-optimal solutions efficiently, making them practical for applications like logistics, scheduling, and route optimization.

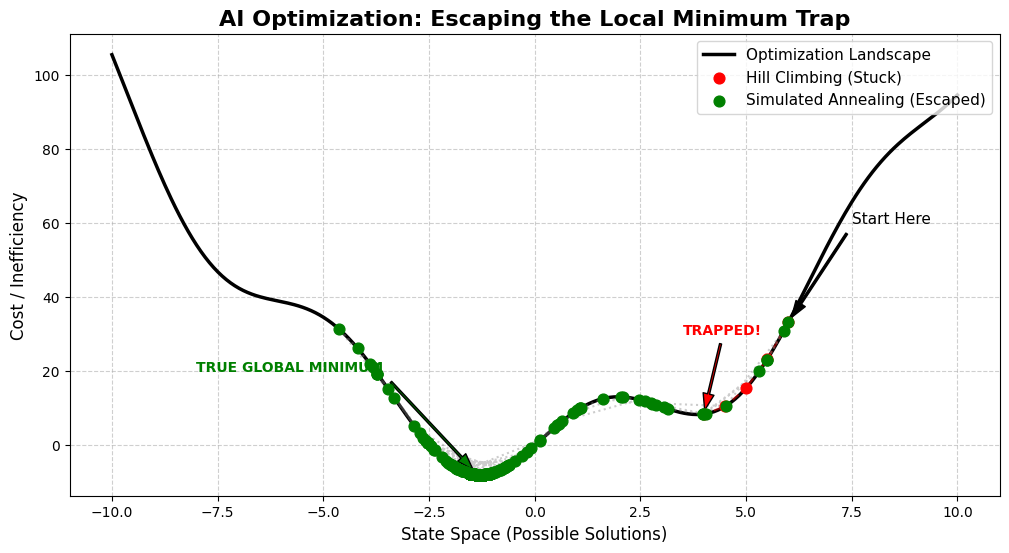

The Mental Model: Landscape and Hikers

To understand how local search algorithms work, it helps to think in terms of a search landscape. Imagine a vast mountain range where every point represents a possible solution, and the height represents its cost, such as distance, time, or expense. Your goal is to reach the lowest valley, the solution with the minimum cost.

In AI, this landscape is called the state space, the complete set of all possible configurations of a problem. Within this space, two key concepts define optimization:

- Global Minimum: The absolute best solution across the entire landscape.

- Local Minimum: A solution that appears optimal within its immediate neighborhood but is not the best overall.

This distinction is critical because most real-world optimization problems contain multiple local minima, making it difficult to identify the true global optimum without intelligent search strategies.

Build strong AI fundamentals beyond theory and apply algorithms to real-world problems. Join HCL GUVI’s Artificial Intelligence and Machine Learning Course to learn from industry experts and Intel engineers through live online classes, master in-demand skills like Python, ML, MLOps, Generative AI, and Agentic AI, and gain hands-on experience with 20+ industry-grade projects, 1:1 doubt sessions, and placement support with 1000+ hiring partners.

Hill Climbing: The Algorithm That Always Moves Downhill

Hill Climbing is one of the simplest and most widely used local search algorithms. It follows a greedy strategy:

- Start with an initial solution

- Evaluate neighboring solutions created by small changes

- Move to the neighbor with the lowest cost

- Repeat until no better neighbor exists

This approach is computationally efficient, requires minimal memory, and performs well on smooth, well-structured problems. However, its core strength, greediness, is also its biggest limitation.

The Local Optima Trap

The main challenge with Hill Climbing is the local optima problem. The algorithm stops as soon as it reaches a point where all neighboring solutions are worse, even if a much better solution exists elsewhere in the search space.

In practical terms, this means an algorithm optimizing delivery routes might settle for a route costing ₹50, even when a ₹22 route exists nearby, simply because reaching it requires temporarily accepting worse intermediate steps. The algorithm is not incorrect; it is constrained by its inability to explore beyond immediate improvements.

Simulated Annealing: The Controlled Risk-Taker

To overcome the limitations of greedy search, more advanced methods like Simulated Annealing introduce controlled randomness into the optimization process. Inspired by the metallurgical process of heating and slowly cooling materials, this algorithm allows temporary acceptance of worse solutions to escape local minima.

It introduces a parameter called temperature (T), which governs exploration:

- High temperature: Encourages exploration by allowing worse moves

- Low temperature: Focuses on exploitation, favoring better solutions

As the algorithm progresses, the temperature decreases according to a cooling schedule, gradually shifting behavior from exploration to refinement. By the end, it behaves similarly to Hill Climbing but with a much higher chance of reaching a near-global optimum.

Why This Works?

The probability of accepting a worse solution is defined as:

P(accept) = e^(−ΔCost / T)

At high temperatures, even significantly worse solutions may be accepted, enabling the algorithm to explore the search space more freely. As the temperature approaches zero, only better solutions are accepted, ensuring convergence.

This balance between exploration and exploitation is what makes Simulated Annealing effective for solving complex optimization problems. It mirrors real-world decision-making, where early experimentation is valuable, but later stages demand precision and stability.

Hill Climbing vs. Simulated Annealing: What Actually Matters

| Feature | Hill Climbing | Simulated Annealing |

| Core Strategy | Greedy | Probabilistic |

| Escapes Local Optima | No | Yes |

| Speed | Very Fast | Moderate |

| Memory Use | Minimal | Minimal |

| Guaranteed Optimum | No | No (but comes much closer) |

| Best For | Smooth landscapes, unimodal problems | Complex, multi-modal real-world problems |

Real-World Application: Route Optimization in Logistics

A logistics company managing around 40 delivery stops per driver can use Simulated Annealing to optimize routes at the start of each day. The process begins with a random route. The algorithm then makes small, iterative changes such as swapping two stops, reversing a segment, or shifting a delivery earlier in the sequence. If the total distance decreases, the new route is accepted. If not, it may still be accepted in the early stages, allowing the system to explore better possibilities.

After thousands of iterations, the temperature drops close to zero, and the algorithm stabilizes. In practice, this approach typically produces routes within 2-5% of the theoretical optimum in just seconds.

In contrast, a purely greedy method like Hill Climbing would likely plateau much earlier, getting stuck in a local optimum. In real-world logistics, this difference can translate into saving dozens of kilometers per driver each day, directly impacting cost, fuel consumption, and delivery efficiency.

The Code: Seeing Both Algorithms Side by Side

Here’s a working Python implementation. The cost function is deliberately tricky: it has multiple false valleys (local minima) to expose the weakness of Hill Climbing. Simulated Annealing, given its exploration phase, handles it much better.

import numpy as np

# Cost landscape: multiple fake valleys, one true minimum

def cost_function(x):

return x**2 + 10 * np.sin(x)

# Hill Climbing: pure greedy, iterative improvement

def hill_climbing(start_position, step_size=0.5, max_steps=100):

current_pos = start_position

for _ in range(max_steps):

# Look one step to the left and right

neighbors = [current_pos - step_size, current_pos + step_size]

best_neighbor = min(neighbors, key=cost_function)

# Every neighbor is worse? Stop immediately.

if cost_function(best_neighbor) >= cost_function(current_pos):

break

current_pos = best_neighbor

return current_pos

# Simulated Annealing: probabilistic escape from local traps

def simulated_annealing(start_position, step_size=2.0, max_steps=400, temp=100.0, cooling_rate=0.97):

current_pos = start_position

for _ in range(max_steps):

# Cool down gradually

temp = temp * cooling_rate

# Try a random neighbor

neighbor = current_pos + np.random.uniform(-step_size, step_size)

cost_difference = cost_function(neighbor) - cost_function(current_pos)

# Improvement: always accept

if cost_difference < 0:

current_pos = neighbor

# Worse move: accept with temperature-dependent probability

# High temp = more likely to accept; low temp = almost never

elif np.random.rand() < np.exp(-cost_difference / temp):

current_pos = neighbor

return current_posRun this a few times from different starting positions. Hill Climbing will consistently get stuck at whichever local minimum it stumbles into first. Simulated Annealing will almost always find a path to something much better, though not always the same solution, because of the randomness involved.

Figure 1: Visualizes both algorithms on the same landscape. Hill Climbing halts at a local minimum. Simulated Annealing makes it to the true global minimum on the left.

Conclusion

AI optimization is a field that rewards patience with abstraction. These aren’t algorithms that produce images or answer questions. They’re algorithms that decide: under uncertainty, under constraint, at scale.

Hill Climbing is a useful tool and worth understanding on its own terms. It’s fast, predictable, and perfectly adequate on well-behaved problems. But the world is not full of well-behaved problems.

Simulated Annealing carries a more interesting lesson: sometimes the only path to the best solution is a willingness to temporarily occupy a worse one. The algorithm doesn’t know exactly where it’s going. It just knows that refusing to ever move backward is guaranteed to leave it stuck.

FAQs

Why are local search algorithms preferred over exhaustive search in AI?

Local search algorithms are preferred because they handle massive search spaces efficiently. Instead of evaluating every possible solution, they focus on iterative improvement, making them practical for real-world optimization problems where time and computational resources are limited.

What is the role of a cost function in local search algorithms?

A cost function evaluates how good or bad a solution is. It guides the algorithm by assigning a numerical value to each state, helping it move toward solutions with lower cost or higher efficiency.

What does “neighboring solution” mean in local search?

A neighboring solution is a slightly modified version of the current solution. For example, in route optimization, swapping two stops or changing their order creates a new neighboring state.

Can local search algorithms guarantee the best solution?

No, most local search algorithms do not guarantee the global optimum. They aim to find a near-optimal solution quickly, which is often sufficient for real-world applications.

What factors affect the performance of Simulated Annealing?

Key factors include initial temperature, cooling rate, step size, and number of iterations. Proper tuning of these parameters significantly impacts the quality of the final solution.

Did you enjoy this article?