What Is Hugging Face? A Complete Guide for Beginners

Last updated: Mar 31, 2026

What if building an AI model no longer required months of research, massive datasets, or a full team of experts?

Hugging Face has made that possible. By offering ready-to-use models, powerful tools, and a collaborative ecosystem, it has turned AI from a resource-heavy discipline into something developers, researchers, and even beginners can experiment with quickly. From enabling chatbots and translation systems to accelerating enterprise AI adoption, Hugging Face has become a core platform in modern AI development, making it essential to understand for anyone looking to build or deploy intelligent applications today.

Curious to see how it all works in practice?

Read the full blog to explore Hugging Face’s tools, real-world applications, and how you can start building AI solutions faster without starting from scratch.

Quick Answer:

Hugging Face is an open-source AI platform that simplifies building, training, and deploying machine learning models through pre-trained transformers, shared datasets, and integrated development tools. It allows developers, researchers, and organizations to create NLP, vision, and multimodal applications faster by replacing complex, from-scratch workflows with scalable, research-backed infrastructure and collaborative model ecosystems.

Table of contents

- What is Hugging Face?
- Key Features of Hugging Face
- Top 5 Benefits of Using Hugging Face
  - Reduced Training Cost Through Transfer Learning
  - Standardized Workflows Improve Reproducibility
  - Faster Time to Production for AI Applications
  - Access to Peer-Validated Models and Benchmarks
  - Framework Interoperability Across ML Stacks
- Common Use Cases of Hugging Face
  - Natural Language Processing for Enterprise Automation
  - Conversational AI and Virtual Assistants
  - Accelerated AI Research and Prototyping
  - Domain-Specific Model Fine-Tuning
  - Multimodal AI Development

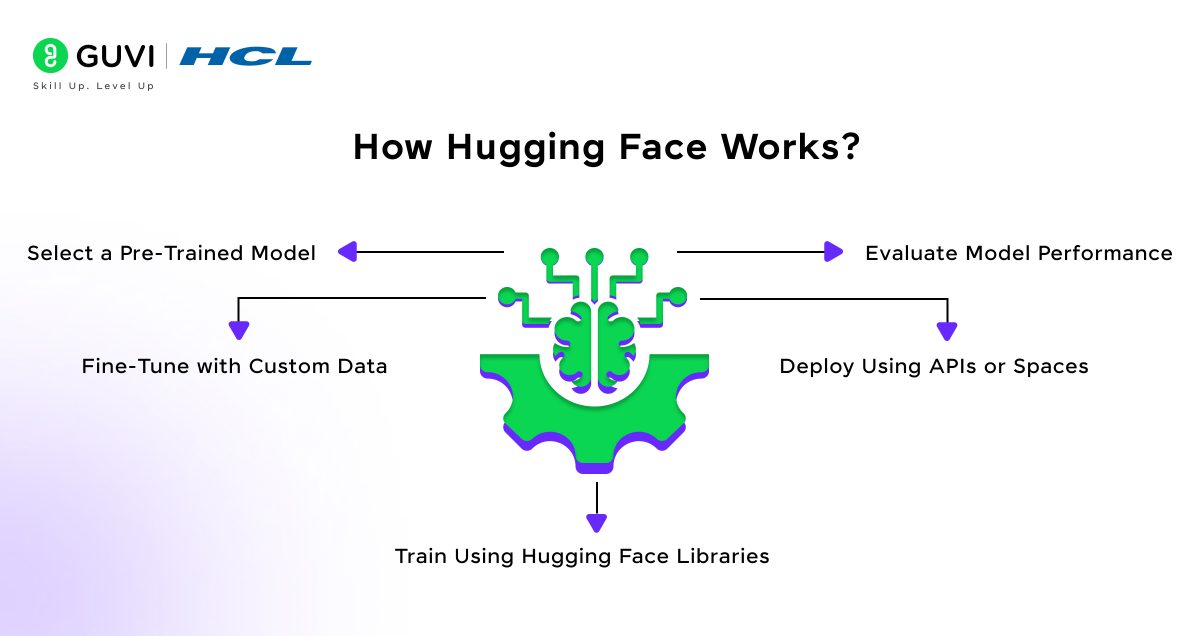

- How Hugging Face Works (Step-by-Step Workflow)
  - Select a Pre-Trained Model
  - Fine-Tune with Custom Data
  - Train Using Hugging Face Libraries
  - Evaluate Model Performance
  - Deploy Using APIs or Spaces
- Hugging Face vs Traditional Machine Learning Development
- Who Should Learn Hugging Face?
- How to Get Started with Hugging Face?
- Conclusion
- FAQs
  - Do Hugging Face models require high-end hardware to run?
  - Can organizations use Hugging Face in secure or regulated environments?
  - How does Hugging Face support collaboration across teams?

What is Hugging Face?

Hugging Face is an open-source machine learning platform that provides tools, libraries, and infrastructure for building, training, fine-tuning, and deploying state-of-the-art artificial intelligence models. It is best known for its Transformers library, which offers pre-trained deep learning models based on transformer architectures such as BERT, GPT, and Vision Transformers that are optimized for tasks across natural language processing, computer vision, and multimodal AI.

- The Hugging Face Model Hub hosts over 500,000 models, making it one of the largest open repositories for machine learning used in research and production. It continues to grow as researchers and organizations publish new architectures and fine-tuned variants.

- Counting models, datasets, and demos together, the Hugging Face Hub hosts over one million assets, reflecting rapid growth in open AI collaboration.

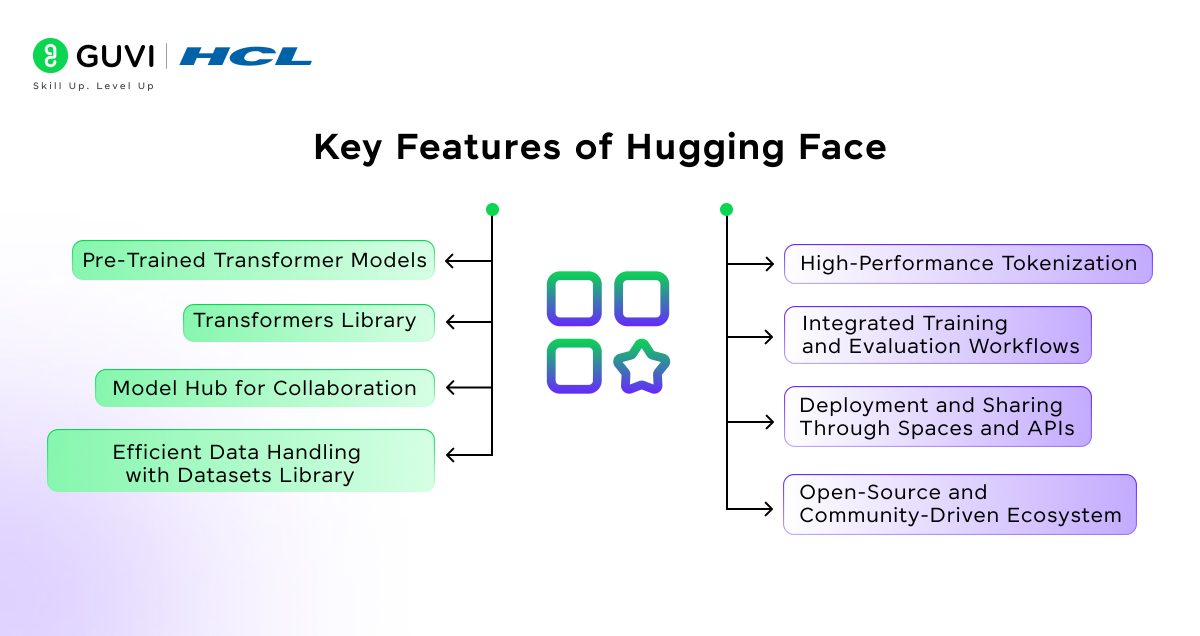

Key Features of Hugging Face

- Pre-Trained Transformer Models

Hugging Face provides access to thousands of pre-trained models trained on large-scale datasets. These models support tasks such as text classification, summarization, translation, question answering, and image analysis, which reduces the need for training models from the ground up.

- Transformers Library

The Transformers library offers standardized APIs for implementing state-of-the-art deep learning architectures. It integrates with PyTorch, TensorFlow, and JAX, allowing developers to train, fine-tune, and evaluate models within familiar frameworks.

- Model Hub for Collaboration

The Hugging Face Model Hub serves as a centralized repository where researchers and organizations publish, version, and share models. This supports reproducibility, peer validation, and faster adoption of proven architectures.

- Efficient Data Handling with Datasets Library

The Datasets library provides scalable tools for loading, preprocessing, and managing structured and unstructured datasets. It is optimized for performance and supports streaming large datasets without heavy local storage requirements.
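To make the streaming idea concrete, here is a minimal sketch. It assumes the `datasets` package is installed and uses the public `imdb` dataset purely as an illustrative choice; `stream_examples`, `preview`, and `numbered_records` are hypothetical helper names, not part of the library. The library import sits inside the function so the offline part of the sketch runs on its own.

```python
from itertools import count, islice


def stream_examples(dataset_name="imdb", split="train"):
    """Open a dataset in streaming mode so records arrive lazily.

    Requires the `datasets` package and network access. The dataset
    name is an illustrative assumption.
    """
    from datasets import load_dataset  # imported lazily on purpose

    return load_dataset(dataset_name, split=split, streaming=True)


def preview(examples, n=3):
    """Pull only the first `n` records from any iterable of records."""
    return list(islice(examples, n))


def numbered_records():
    """Offline stand-in that behaves like an endless stream of records."""
    for i in count():
        yield {"text": f"example {i}", "label": i % 2}


# Only two records are ever produced, even though the stream is endless.
print(preview(numbered_records(), 2))
```

With the library available, `preview(stream_examples(), 3)` would fetch three records without downloading the full dataset first.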

- High-Performance Tokenization

Built-in tokenizers convert raw text into numerical representations required by transformer models. These tokenizers are optimized in Rust for speed and consistency, which improves training and inference efficiency.
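The sketch below shows, in plain Python, the kind of output a tokenizer hands to a model: integer IDs plus an attention mask that marks padding. It is a deliberately simplified toy that splits on whitespace. The real Hugging Face tokenizers use trained subword vocabularies, so the function names and the vocabulary here are illustrative assumptions only.

```python
# Toy illustration only: real tokenizers use subword algorithms and a
# trained vocabulary, not whitespace splitting.
PAD, UNK = "[PAD]", "[UNK]"


def build_vocab(texts):
    """Assign an integer ID to every distinct lowercase word in `texts`."""
    vocab = {PAD: 0, UNK: 1}
    for text in texts:
        for word in text.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab


def encode(texts, vocab):
    """Turn sentences into equal-length ID lists plus attention masks."""
    ids = [[vocab.get(w, vocab[UNK]) for w in t.lower().split()] for t in texts]
    max_len = max(len(seq) for seq in ids)
    input_ids, attention_mask = [], []
    for seq in ids:
        pad = max_len - len(seq)
        input_ids.append(seq + [vocab[PAD]] * pad)
        # 1 marks a real token, 0 marks padding the model should ignore.
        attention_mask.append([1] * len(seq) + [0] * pad)
    return {"input_ids": input_ids, "attention_mask": attention_mask}


vocab = build_vocab(["the model works", "the tokenizer works well"])
batch = encode(["the model", "the new tokenizer works"], vocab)
print(batch["input_ids"])       # unknown word "new" maps to the [UNK] ID
print(batch["attention_mask"])  # shorter sentence is padded with zeros
```

The same two fields, `input_ids` and `attention_mask`, are what the library's tokenizers return for transformer models, which is why the toy version uses those key names.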

- Integrated Training and Evaluation Workflows

Hugging Face includes utilities such as the Trainer API to manage batching, optimization, checkpointing, and evaluation metrics. This reduces custom engineering while maintaining transparency and reproducibility.

- Deployment and Sharing Through Spaces and APIs

Models can be deployed as APIs for application integration or hosted through Hugging Face Spaces for interactive demonstrations. This simplifies moving from experimentation to production environments.

- Open-Source and Community Driven Ecosystem

Hugging Face promotes collaborative development where models, datasets, and benchmarks are continuously improved by a global research and developer community, strengthening trust and innovation across AI workflows.

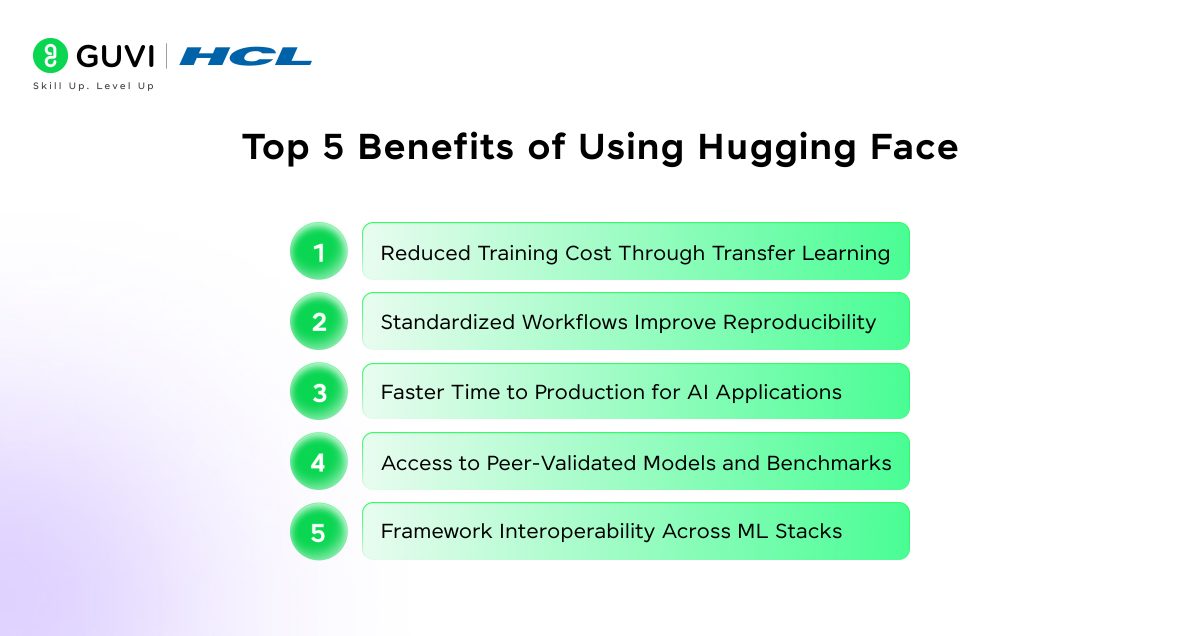

Top 5 Benefits of Using Hugging Face

1. Reduced Training Cost Through Transfer Learning

Hugging Face leverages transfer learning, where models pre-trained on massive corpora can be adapted to specific tasks with relatively small datasets. This minimizes computational expense and removes the need for large-scale model training, which traditionally requires expensive GPU clusters and long training cycles.

2. Standardized Workflows Improve Reproducibility

The platform provides consistent APIs and training utilities that make experiments easier to replicate across environments. This is critical for research validation, regulated industries, and collaborative development where traceability and repeatability are required.

3. Faster Time to Production for AI Applications

With integrated tools for preprocessing, training, evaluation, and deployment, development teams can move from prototype to production in a structured manner. This reduces engineering bottlenecks and shortens delivery timelines without compromising model quality.

4. Access to Peer-Validated Models and Benchmarks

The Model Hub hosts architectures contributed by leading research institutions and technology companies. Using these validated models allows teams to build on proven methodologies rather than experimenting with untested designs, which improves reliability and performance confidence.

5. Framework Interoperability Across ML Stacks

Hugging Face supports major machine learning ecosystems including PyTorch, TensorFlow, and JAX. This flexibility allows organizations to integrate AI capabilities into existing technology stacks without restructuring infrastructure or retraining teams.

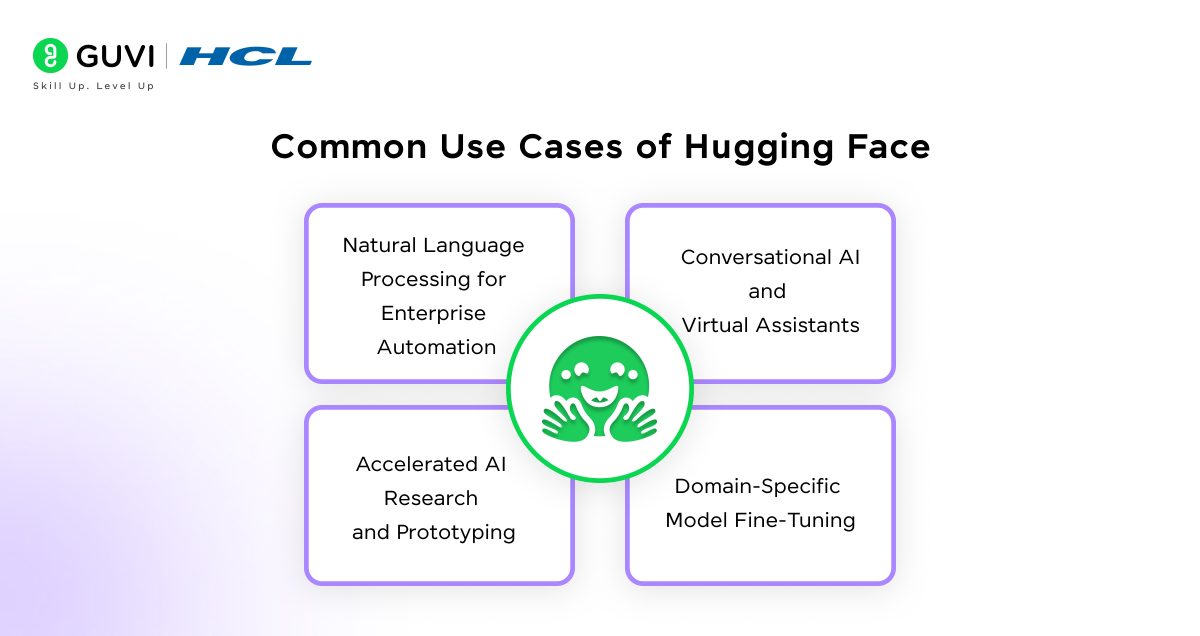

Common Use Cases of Hugging Face

1. Natural Language Processing for Enterprise Automation

Organizations rely on Hugging Face models to process large volumes of unstructured text such as customer queries, support tickets, and internal documents. Pre-trained transformer models reduce the need to build language systems from the ground up while maintaining high accuracy in classification, summarization, and entity recognition tasks. This approach improves operational efficiency and allows teams to focus on refining domain-specific insights rather than building infrastructure.

2. Conversational AI and Virtual Assistants

Hugging Face plays a central role in developing conversational systems used in customer service, education platforms, and SaaS products. Fine-tuned language models allow businesses to deploy context-aware chatbots that handle complex queries with greater linguistic precision. Unlike rule-based bots, these systems learn semantic relationships, which leads to more reliable interactions and measurable improvements in response quality.

3. Accelerated AI Research and Prototyping

Research teams use Hugging Face to validate hypotheses quickly by accessing peer-reviewed model architectures and benchmark datasets. The platform standardizes experimentation workflows, which supports reproducibility, a key requirement in scientific research. Faster validation cycles allow researchers to test model performance and publish findings without rebuilding pipelines repeatedly.

4. Domain-Specific Model Fine-Tuning

Industries such as healthcare, finance, and legal services adapt general-purpose models to specialized datasets. Hugging Face provides the tooling required to fine-tune models securely within controlled environments. This allows organizations to extract insights from sensitive data while maintaining governance standards, auditability, and performance transparency expected in regulated sectors.

5. Multimodal AI Development

Modern AI systems increasingly combine text, image, and audio understanding. Hugging Face supports multimodal workflows that allow developers to integrate vision models, speech recognition, and language intelligence into unified applications. This capability supports use cases such as document intelligence, media analysis, and accessibility technologies, where interpreting multiple data types is essential for accurate outcomes.


How Hugging Face Works (Step-by-Step Workflow)

1. Select a Pre-Trained Model

The workflow begins by choosing a model from the Hugging Face Model Hub. These models are already trained on large, diverse datasets and are designed for tasks such as text classification, translation, summarization, or image recognition. Selecting an appropriate base model reduces development time and provides a strong performance baseline supported by peer-reviewed research and community validation.

2. Fine-Tune with Custom Data

After selecting a model, teams adapt it to their specific use case by training it on domain-relevant data. Fine-tuning adjusts the model’s internal parameters so it reflects the vocabulary, patterns, and context of the target environment. This step allows organizations to maintain accuracy while aligning outputs with real business or research requirements.

3. Train Using Hugging Face Libraries

Training is conducted using standardized libraries such as Transformers and Datasets, which manage tokenization, batching, and optimization workflows. These libraries reduce the need for custom engineering while maintaining transparency and reproducibility, both of which are essential for production-grade AI systems.
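As a rough sketch of what this step can look like, the function below wires a tokenizer, a classification model, and the Trainer API together. Several things are assumptions rather than requirements: the `distilbert-base-uncased` checkpoint, the hyperparameter values, the output directory name, and datasets that have `text` and `label` columns. It also assumes `transformers`, `datasets`, and PyTorch are installed. `build_trainer` and `DEFAULTS` are hypothetical names.

```python
# Illustrative hyperparameters; sensible values depend on the task and data.
DEFAULTS = {
    "num_train_epochs": 1,
    "per_device_train_batch_size": 8,
    "learning_rate": 2e-5,
}


def build_trainer(train_dataset, eval_dataset,
                  model_name="distilbert-base-uncased",
                  num_labels=2, output_dir="finetune-out"):
    """Return a Trainer ready to fine-tune a text classifier.

    Both datasets are assumed to be `datasets.Dataset` objects with
    `text` and `label` columns.
    """
    # Imported lazily so this module loads without the heavy dependencies.
    from transformers import (
        AutoModelForSequenceClassification,
        AutoTokenizer,
        Trainer,
        TrainingArguments,
    )

    tokenizer = AutoTokenizer.from_pretrained(model_name)

    def tokenize(batch):
        # Fixed-length padding keeps every example the same size for batching.
        return tokenizer(batch["text"], truncation=True,
                         padding="max_length", max_length=128)

    train_tok = train_dataset.map(tokenize, batched=True)
    eval_tok = eval_dataset.map(tokenize, batched=True)

    model = AutoModelForSequenceClassification.from_pretrained(
        model_name, num_labels=num_labels
    )
    args = TrainingArguments(output_dir=output_dir, **DEFAULTS)
    return Trainer(model=model, args=args,
                   train_dataset=train_tok, eval_dataset=eval_tok)
```

Calling `trainer.train()` on the returned object runs the fine-tuning loop, and `trainer.evaluate()` reports the loss on the evaluation split.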

4. Evaluate Model Performance

Once training is complete, the model is evaluated using validation datasets and established metrics such as accuracy, precision, recall, or F1 score depending on the task. This evaluation phase confirms whether the model generalizes well and meets reliability expectations before deployment.
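The metrics named above can be computed by hand for a binary task, which helps show what each one measures. This is a small, dependency-free sketch. The labels and predictions are made-up sample values, and `binary_metrics` is a hypothetical helper rather than a library function.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Made-up validation labels and model predictions for illustration.
labels = [1, 0, 1, 1, 0, 1, 0, 0]
preds = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = binary_metrics(labels, preds)
print(metrics)
```

In this sample there are three true positives, one false positive, and one false negative, so all four metrics come out to 0.75.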

5. Deploy Using APIs or Spaces

After validation, the model can be deployed through APIs for integration into applications or hosted using Hugging Face Spaces for interactive access. This allows teams to operationalize AI capabilities quickly while maintaining version control and scalability.
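One common way to host an interactive demo on Spaces is a small Gradio app. The sketch below is one possible shape for such an app, assuming the `gradio` and `transformers` packages are installed. The model name is an illustrative choice, and `to_label_scores` and `build_demo` are hypothetical helper names.

```python
def to_label_scores(results):
    """Convert classifier output into a {label: confidence} mapping."""
    return {r["label"]: float(r["score"]) for r in results}


def build_demo(model_name="distilbert-base-uncased-finetuned-sst-2-english"):
    """Build a text-in, label-out web demo around a classification model."""
    # Imported lazily so the helper above works without these packages.
    import gradio as gr
    from transformers import pipeline

    classifier = pipeline("text-classification", model=model_name)

    def predict(text):
        # Passing a list returns one result dict per input text.
        return to_label_scores(classifier([text]))

    return gr.Interface(fn=predict, inputs="text", outputs="label")
```

Calling `build_demo().launch()` starts the web interface locally. On Spaces, the same code would typically live in the app's entry file.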

Hugging Face vs Traditional Machine Learning Development

In traditional machine learning, teams often start from zero. They gather and clean data, design model architectures, build training pipelines manually, and manage deployment infrastructure themselves. Every experiment can take days or weeks because even small changes may require retraining and reconfiguring the entire workflow. This makes innovation slower and heavily dependent on specialized expertise and resources.

Hugging Face changes this approach by offering powerful pre-trained transformer models that already understand language and patterns learned from massive datasets. Instead of building everything manually, developers fine-tune these models for their specific use cases using an integrated set of tools for datasets, tokenization, training, and deployment. The result is faster experimentation, quicker prototyping, and a smoother path from concept to production-grade AI applications.

Here is a quick comparison to understand how Hugging Face simplifies machine learning workflows compared to traditional development approaches:

| Factor | Hugging Face | Traditional Machine Learning |
| --- | --- | --- |
| Starting Point | Uses pre-trained models | Builds models from scratch |
| Data Handling | Ready-to-use datasets and tokenizers | Manual preprocessing and feature engineering |
| Workflow | Integrated libraries manage pipelines | Custom pipelines require full setup |
| Experimentation | Fast prototyping and iteration | Slower due to repeated retraining |
| Engineering Effort | Reduced infrastructure complexity | High system design effort |
| Deployment Speed | Faster transition to production | Longer development timelines |
| Skill Requirement | Moderate ML knowledge needed | Deep expertise required |

Who Should Learn Hugging Face?

- Machine Learning Engineers

Engineers building production-grade AI systems can use Hugging Face to standardize model development, reduce custom pipeline work, and accelerate experimentation while maintaining scalability and performance monitoring.

- Data Scientists

Data scientists benefit from rapid model evaluation and fine-tuning capabilities that allow them to test hypotheses, analyze text-heavy datasets, and generate insights without investing time in building architectures from the ground up.

- Developers Entering AI

Software developers transitioning into AI can apply familiar Python-based workflows while gaining access to advanced transformer models. This lowers the barrier to building intelligent features into existing applications.

- Startups Building AI Products

Startups can prototype and launch AI-driven solutions faster by leveraging pre-trained models and integrated deployment tools. This allows lean teams to deliver functional products without heavy infrastructure investment.

- Students Learning Applied Machine Learning

Students gain hands-on exposure to real-world AI workflows, from data preparation to deployment, which bridges the gap between theoretical study and practical implementation.

How to Get Started with Hugging Face?

- Installing Libraries

Begin by installing core libraries such as Transformers and Datasets using Python package managers. This sets up the environment required to run and fine-tune models locally or in cloud environments.
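The libraries are typically installed with `pip install transformers datasets`. A quick way to confirm the environment afterwards is to ask Python which versions it can see. The snippet below is a small sketch using only the standard library. `installed_versions` is a hypothetical helper, and checking `tokenizers` as well is an optional extra.

```python
from importlib import metadata


def installed_versions(packages=("transformers", "datasets", "tokenizers")):
    """Map each package name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions().items():
    print(f"{name}: {version or 'not installed'}")
```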

- Running Your First Model

Load a pre-trained model and perform inference on sample data to understand how tokenization, inputs, and predictions work in practice.
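A first inference run can be as short as the sketch below, which uses the `pipeline` helper from Transformers. It assumes the `transformers` package and a backend such as PyTorch are installed, and that the model can be downloaded on first use. The model name is an illustrative choice, and `classify`, `summarize`, and `main` are hypothetical names.

```python
def classify(texts, model_name="distilbert-base-uncased-finetuned-sst-2-english"):
    """Run sentiment classification on a list of strings."""
    # Imported lazily so `summarize` can be used without the library.
    from transformers import pipeline

    classifier = pipeline("sentiment-analysis", model=model_name)
    return classifier(texts)


def summarize(texts, results):
    """Pair each input with its predicted label and rounded score."""
    return [(t, r["label"], round(r["score"], 3))
            for t, r in zip(texts, results)]


def main():
    """Classify two sample sentences and print one row per input."""
    samples = ["I love how quickly this works.", "The setup was frustrating."]
    for row in summarize(samples, classify(samples)):
        print(row)
```

Calling `main()` downloads the model if needed and prints each sentence with its label and confidence score. Each prediction is a dictionary with `label` and `score` keys, which is the shape `summarize` expects.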

- Exploring the Model Hub

Search the Model Hub to identify models aligned with your use case. Review documentation, training data sources, and evaluation benchmarks before selecting a model.

- Learning Fine-Tuning Basics

Train the selected model on domain-specific datasets to adapt it to specialized tasks such as classification, summarization, or information extraction.

- Deploying Simple Applications

Use APIs or Hugging Face Spaces to expose the model through a user interface or integrate it into applications, which demonstrates how research models transition into usable tools.

Conclusion

Hugging Face has reshaped how artificial intelligence systems are developed by replacing fragmented, resource-intensive workflows with an integrated, model-centric approach built on shared research and standardized tooling. By providing access to validated architectures and collaborative infrastructure, it allows engineers and organizations to move from experimentation to deployment with greater efficiency and technical rigor. As AI adoption expands across industries and multimodal capabilities mature, Hugging Face is positioned as a foundational platform that supports reproducible research and the continued advancement of applied machine learning.

FAQs

Do Hugging Face models require high-end hardware to run?

Not always. Many models are optimized for inference on standard CPUs, while larger architectures can scale to GPUs when handling intensive workloads or training tasks.

Can organizations use Hugging Face in secure or regulated environments?

Yes. Models can be deployed within private infrastructure, which allows teams to maintain data control, comply with governance policies, and audit model behavior.

How does Hugging Face support collaboration across teams?

Its Model Hub provides version control, documentation, and shared access to models and datasets, allowing researchers and engineers to work on the same assets with clear traceability.
