Horizontal vs Vertical Scaling for Efficient System Design

Oct 28, 2025 (Last Updated)

In the world of system design, envision a digital skyscraper – a multifaceted structure built to withstand the demands of the modern world. Just as an architect crafts blueprints to ensure a skyscraper’s stability, a well-thought-out system design guarantees your digital applications can thrive amid the ever-evolving landscape.

When demand for any application is soaring, system design architects quickly recognize the need to maintain an app’s uptime, accessibility, and capacity in the face of increased traffic. Addressing capacity planning poses a recurring challenge for engineering teams, as it involves the complex task of ensuring the availability of appropriate resources to accommodate both anticipated and unforeseen surges in traffic.

In this blog, we’ll discuss whether to scale out (horizontally) or scale up (vertically) when an app needs more capacity. We’ll also look at why scalability matters, compare horizontal and vertical scaling head-to-head, and cover load balancing and consistent hashing, which form the bedrock of efficient system design.

Table of contents

- The Importance of Scalability

- Scalability: How Many Concurrent Requests Can Our Design Accommodate?

- Horizontal Scaling

- Pros of Horizontal Scaling

- Cons of Horizontal Scaling

- Vertical Scaling

- Pros of Vertical Scaling

- Cons of Vertical Scaling

- Horizontal vs Vertical Scaling

- Use Case Scenarios for System Design Needs

- On-Premise vs. Cloud Scaling

- Load Balancing with Consistent Hashing

- In Closing

- FAQs

- When should I choose horizontal scaling over vertical scaling?

- How do load balancers enhance system performance?

- What is consistent hashing and why is it essential for system design?

- Can I combine horizontal and vertical scaling in my architecture?

- What is the role of NGINX in load balancing?

The Importance of Scalability

Scalability stands at the heart of long-term viability, akin to a skyscraper’s foundation. It is the ability of your digital infrastructure to adapt and expand without excessive strain on resources. Picture owning a small bakery suddenly inundated with hundreds of customers. Without scalability, your business could crumble under the weight of its own success. This is where horizontal and vertical scaling emerge as crucial solutions.

An application’s scalability is measured by how many concurrent requests it can handle effectively. The scalability threshold is the point at which the application’s capacity to serve additional requests begins to degrade.

That threshold is reached when a critical hardware resource is exhausted, at which point the system needs different or additional resources. Scaling adjustments can include more CPU or physical memory, larger disk capacity, and higher network bandwidth.

While both horizontal and vertical scaling share the objective of adding computing resources to your infrastructure, they differ significantly in implementation and performance. Horizontal scaling adds more servers or instances and distributes the load across them, increasing overall performance and capacity.

Conversely, vertical scaling enhances a single server by upgrading its hardware components, increasing the performance and capacity of that one machine.

Scalability: How Many Concurrent Requests Can Our Design Accommodate?

Before we delve into the horizontal vs. vertical scaling debate, let’s explore the pivotal question: how many concurrent requests can your system design manage? The answer is the compass guiding your strategy. Let’s say we’re building an e-commerce application, and one server can accommodate a maximum of 10k requests. Consider the load levels below.

| Concurrent Users | Requests/Second | Expected Latency |
|---|---|---|
| 2k | 0.5k | |
| 20k | 12k | |
| 100k | 80k | <= 2 sec |
| 500k | 300k | ? |

So the question is: how do we keep latency acceptable when our e-commerce application faces unexpected traffic? As the table shows, we start with a compact application designed for a mere 2,000 concurrent users and 500 requests per second. How can we expand its capacity when we need to serve a larger user base? That’s where a sound scaling strategy comes in.
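
To make the capacity question concrete, here is a rough back-of-the-envelope sketch. It assumes the 10k figure above means requests per second per server and that all servers are identical; the `servers_needed` helper and the `headroom` parameter are hypothetical names used only for illustration.

```python
import math


def servers_needed(peak_rps: int, per_server_rps: int, headroom: float = 0.0) -> int:
    """Estimate how many identical servers are needed to serve peak_rps.

    headroom is the fraction of each server's capacity kept in reserve
    (0.2 means each server is planned to run at no more than 80% of its limit).
    """
    if per_server_rps <= 0:
        raise ValueError("per_server_rps must be positive")
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in the range [0, 1)")
    usable_rps = per_server_rps * (1.0 - headroom)
    return max(1, math.ceil(peak_rps / usable_rps))


# Assumption: the 10k per-server limit is read as requests per second.
PER_SERVER_RPS = 10_000

# Request rates taken from the table above.
for rps in (500, 12_000, 80_000, 300_000):
    print(f"{rps} req/s -> {servers_needed(rps, PER_SERVER_RPS)} server(s)")
```

Under these assumptions a single server covers the 500 requests-per-second starting point, while the 300k row needs on the order of 30 servers, which is a job for scaling out rather than for one bigger machine. Real capacity planning would also account for uneven traffic and failures, which is what the optional `headroom` argument hints at.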

Horizontal Scaling

Horizontal scaling, often called “scaling out,” is equivalent to opening more bakeries to serve a burgeoning customer base. In the digital realm, it translates to adding more servers or instances to your infrastructure.

Pros of Horizontal Scaling

High Concurrency: Horizontal scaling excels in managing a substantial number of concurrent requests. As traffic surges, you can swiftly deploy additional servers to distribute the load, ensuring smooth operations.

Minimal Downtime: This approach can be implemented with minimal to no downtime, ensuring uninterrupted services to your users.

Load Balancing: With a load balancer in front, horizontal scaling distributes incoming traffic across multiple servers, preventing any single server from being overburdened. This dynamic load balancing enhances system reliability.

Cost-Efficient: One of its most appealing aspects is cost-effectiveness. You can incrementally add servers as your needs grow, minimizing upfront expenses.

Performance Boost: The workload distribution across multiple servers enhances performance, as the load is shared, reducing the strain on individual components.

Cons of Horizontal Scaling

Complex Configuration: Implementing horizontal scaling requires intricate load balancing, dynamic routing, and auto-scaling mechanisms. It necessitates a comprehensive understanding of distributed systems.

Networking Overhead: As communication increases between servers, networking overhead can lead to latency. Efficient network management is essential to counter this challenge.

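Diminishing Returns: Adding servers does not make any individual server more powerful, so work that cannot be split across machines gains little. As the cluster grows, coordination between servers can also make each additional server less effective than the last.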

Maintenance Demands: Managing multiple servers demands increased maintenance efforts, such as updates, patch management, and monitoring.

Vertical Scaling

Vertical scaling, often termed “scaling up,” involves enhancing the capabilities of a single server or instance to meet growing demands. Instead of opening more bakeries, you expand the size and capacity of your existing bakery, allowing it to serve more customers.

Pros of Vertical Scaling

Moderate Concurrency: Vertical scaling can effectively increase the capacity of a single server, allowing it to handle a moderate increase in concurrent requests. It’s an ideal solution when you expect gradual growth.

Simpler Implementation: Compared to horizontal scaling, vertical scaling is more straightforward to implement. You don’t need to manage multiple servers or complex load-balancing configurations.

Exceptional Performance: By upgrading CPU, RAM, and other hardware components, you can achieve exceptional performance gains. This is particularly beneficial for applications that rely on processing power.

Headroom on a Single Machine: A single server can often be upgraded substantially (more CPU cores, more RAM, faster storage) before any re-architecture is needed. The ceiling is the largest machine you can buy or rent.

Cons of Vertical Scaling

Higher Upfront Costs: The initial investment for vertical scaling can be high, as it involves purchasing more powerful hardware components. This can be a barrier for smaller businesses.

Possible Downtime: Upgrading hardware often requires a server restart, resulting in downtime. Careful planning and redundancy can mitigate this, but it remains a potential concern.

No Load Distribution: Vertical scaling relies on a single server, so there is nothing to spread traffic across. That server is also a single point of failure, unlike a horizontally scaled setup where multiple servers share the load.

Lower Cost Efficiency: Over time, as your hardware becomes more powerful, you may experience diminishing returns in terms of cost efficiency. Horizontal scaling may be more cost-effective in the long run.

Horizontal vs Vertical Scaling

| Metric | Horizontal Scaling | Vertical Scaling |
|---|---|---|
| Concurrency | High | Moderate |
| Required Architecture | Distributed | Single instance |
| Implementation | Complex | Simpler |
| Maintenance | Higher (many servers) | Lower (one server) |
| Configuration | Load balancing | Hardware upgrades |
| Downtime | Minimal | Possible |
| Load Balancing | Efficient | Less efficient |
| Costs | Cost-efficient, incremental | Higher upfront |
| Networking | Increased overhead | Lower overhead |
| Performance | Good | Exceptional |
| Limitations | Diminishing returns from coordination overhead | Capped by the largest available machine |
| Example | Kubernetes cluster, Cassandra | Upgrading CPU/RAM, MySQL, Amazon RDS |

Use Case Scenarios for System Design Needs

The decision between horizontal and vertical scaling hinges on the specifics of your use case. For instance, high-traffic e-commerce websites may lean toward horizontal scaling to meet varying demands, while a database-intensive application could opt for vertical scaling to enhance the capabilities of a single server.

Consider implementing vertical scaling in the following scenarios:

- When your engineering team and key stakeholders have conducted an assessment, and increasing a machine’s capabilities, such as CPU and memory capacity, aligns with the price-performance level your workloads necessitate.

- In the early stages of your venture, especially when you lack certainty regarding the consistency of incoming traffic or the expected user volume.

- When you intend to utilize your existing system for internal purposes while relying on cloud provider services for most of your customer-facing solutions.

- In cases where redundancy isn’t a practical or essential requirement for your optimal operations.

- When upgrades are infrequent, so the downtime they cause is a minor concern.

- If you’re dealing with a legacy application that doesn’t demand extensive distribution or high scalability.

On the other hand, horizontal scaling is the way to go in the following situations:

- When delivering high-quality service demands a strong focus on performance.

- When you need backup machines to eliminate single points of failure and keep the system reliable.

- When you want the flexibility to configure your machines in different ways to optimize efficiency, such as the price-performance ratio.

- When running your application or services across various geographical locations at low latency is a pivotal requirement.

- When regular updates, upgrades, and optimization of your system are non-negotiable and must happen with minimal downtime.

- If you are confident that your usage, user base, or incoming traffic are consistently high or are projected to experience exponential growth.

- When you possess the necessary human and financial resources to acquire, install, and maintain additional hardware and software components.

- In cases where you adopt a microservices architecture or employ containerized applications, as these approaches often deliver superior performance within a distributed system.

Prior to making a decision between horizontal and vertical scaling, it’s crucial to meticulously evaluate your current use case in the context of future expectations. For instance, consider running a portion of your workload on each system to assess performance against your service level agreements (SLAs) with your customers, ensuring your chosen scaling approach aligns seamlessly with your long-term goals.
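
The trial run described above can be reduced to a simple check: collect latency samples from each candidate setup and compare a high percentile against the SLA. The sketch below is one way to do that, assuming a 2-second target like the one in the earlier table and a 95th-percentile criterion; the function names and the sample values are made up for illustration.

```python
def percentile(samples, pct: int) -> float:
    """Nearest-rank percentile: the smallest sample that has at least
    pct percent of all samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be an integer between 1 and 100")
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct% of n
    return ordered[rank - 1]


def meets_sla(latencies_s, sla_s: float = 2.0, pct: int = 95) -> bool:
    """Return True if the pct-th percentile latency is within the SLA."""
    return percentile(latencies_s, pct) <= sla_s


# Hypothetical latency samples (seconds) from two trial setups.
trial_a = [0.4] * 18 + [1.9, 2.5]
trial_b = [0.3] * 17 + [2.1, 2.2, 2.4]

print("trial A meets SLA:", meets_sla(trial_a))
print("trial B meets SLA:", meets_sla(trial_b))
```

A percentile is used instead of the average because a handful of slow requests can hide behind a healthy-looking mean. Which percentile and threshold to use depends on what your SLA actually promises.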

On-Premise vs. Cloud Scaling

Scalability can be achieved both on-premise and in the cloud. The cloud offers scalability on-demand, allowing you to expand or shrink resources as needed. On-premise solutions offer more control but entail higher initial infrastructure investments. Your choice should align with your organization’s goals and budget.
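
As an illustration of what "on-demand" means in practice, the sketch below shows a proportional scaling rule: grow or shrink the number of instances in proportion to how far a measured metric is from its target. Kubernetes documents a rule of this shape for its Horizontal Pod Autoscaler; the function name, parameters, and limits here are assumptions for the example, not any provider's API.

```python
import math


def desired_replicas(current: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 100) -> int:
    """Proportional scaling rule, clamped to [min_replicas, max_replicas].

    current_metric and target_metric are in the same unit, for example
    average CPU utilization percent or requests per second per instance.
    """
    if current < 1:
        raise ValueError("current must be at least 1")
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Traffic spike: 4 instances at 90% CPU with a 60% target -> scale out.
print(desired_replicas(4, 90, 60))
# Quiet period: 6 instances at 20% CPU with a 60% target -> scale in.
print(desired_replicas(6, 20, 60))
```

The clamp matters on both ends: the minimum keeps some redundancy during quiet periods, and the maximum caps cost if a metric misbehaves.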

Load Balancing with Consistent Hashing

Efficient load balancing is the linchpin of successful horizontal scaling.

Load balancing and the fascinating world of consistent hashing are crucial components in system design, and they hold particular importance in technical interviews. Let’s delve into why they are integral and what makes them indispensable.

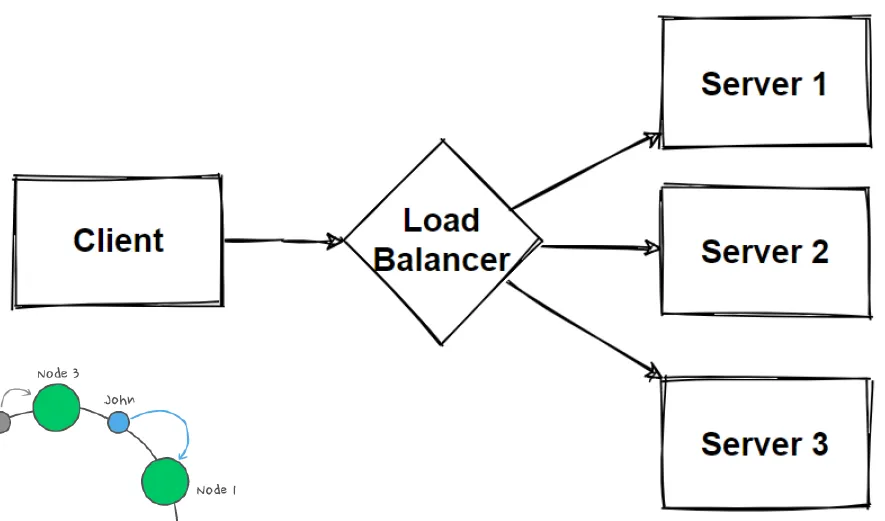

Load balancers, the unsung heroes of architecture, play a pivotal role in ensuring the smooth operation of your application. Picture them as traffic conductors, orchestrating the flow of data with precision. They stand between the clients and servers, deftly accepting incoming network and application traffic and then using sophisticated algorithms to distribute it across multiple backend servers.
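
One of the simplest distribution algorithms is round robin, where each new request goes to the next server in the list. The sketch below is a toy, in-memory version meant only to show the idea; the class name, the backend names, and the manual `mark_down` health flag are assumptions for illustration, not how any particular load balancer is implemented.

```python
class RoundRobinBalancer:
    """Toy round-robin balancer that skips backends marked as down."""

    def __init__(self, backends):
        self._backends = list(backends)
        if not self._backends:
            raise ValueError("at least one backend is required")
        self._healthy = set(self._backends)
        self._next = 0  # index of the backend to try next

    def mark_down(self, backend):
        """Stop sending traffic to a backend (e.g. after a failed health check)."""
        self._healthy.discard(backend)

    def mark_up(self, backend):
        """Resume sending traffic to a known backend."""
        if backend in self._backends:
            self._healthy.add(backend)

    def pick(self):
        """Return the next healthy backend in rotation."""
        for _ in range(len(self._backends)):
            backend = self._backends[self._next]
            self._next = (self._next + 1) % len(self._backends)
            if backend in self._healthy:
                return backend
        raise RuntimeError("no healthy backends available")


lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([lb.pick() for _ in range(4)])  # rotates through all three backends
lb.mark_down("app-2")
print([lb.pick() for _ in range(3)])  # app-2 is skipped while it is down
```

Round robin works well when requests cost roughly the same. Production balancers typically offer other strategies too, such as sending traffic to the server with the fewest active connections.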

NGINX is a popular open-source web server and reverse proxy that is widely used as a load balancer in the software industry.

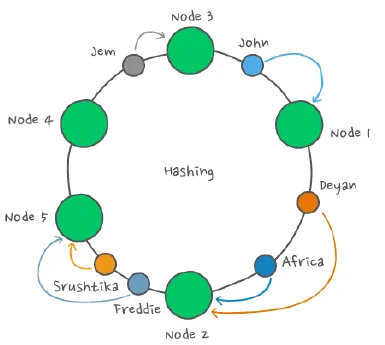

One key algorithm that works like magic in balancing the traffic is the consistent hashing algorithm. This algorithm is a gem when it comes to dividing data among multiple machines, especially in scenarios where the number of machines storing data is dynamic. It’s akin to a puzzle piece that elegantly slots into place during system design, particularly in the realm of distributed databases, where many machines are at play, and machine failure is a real-world concern.

But what’s the real magic of consistent hashing? It minimizes key reshuffling when you scale up or down: adding or removing a server remaps only about 1/N of the keys (for N servers) instead of nearly all of them, so you don’t have to touch every database server. That enables horizontal scalability without the headache of constantly reorganizing your infrastructure.
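
To see the difference, the sketch below compares naive modulo hashing (`hash(key) % N`) with a small hash ring when a fifth server joins four existing ones. It is a minimal illustration, assuming SHA-256 as the hash and 100 virtual nodes per server; the `HashRing` class and the `db-*` server names are hypothetical.

```python
import bisect
import hashlib


def stable_hash(value: str) -> int:
    """Deterministic hash (unlike Python's built-in hash() for strings)."""
    return int(hashlib.sha256(value.encode("utf-8")).hexdigest(), 16)


def modulo_lookup(key: str, nodes):
    """Naive placement: the owner depends directly on the node count."""
    return nodes[stable_hash(key) % len(nodes)]


class HashRing:
    """Minimal consistent-hash ring with virtual nodes."""

    def __init__(self, nodes=(), vnodes: int = 100):
        self._vnodes = vnodes
        self._points = []  # sorted positions on the ring
        self._owner = {}   # ring position -> node name
        for node in nodes:
            self.add(node)

    def add(self, node: str) -> None:
        """Place vnodes points for this node on the ring."""
        for i in range(self._vnodes):
            point = stable_hash(f"{node}#{i}")
            self._owner[point] = node
            bisect.insort(self._points, point)

    def lookup(self, key: str) -> str:
        """A key belongs to the first ring point clockwise from its hash."""
        if not self._points:
            raise LookupError("ring is empty")
        idx = bisect.bisect_right(self._points, stable_hash(key)) % len(self._points)
        return self._owner[self._points[idx]]


keys = [f"user:{i}" for i in range(10_000)]
old_nodes = ["db-1", "db-2", "db-3", "db-4"]
new_nodes = old_nodes + ["db-5"]

# Modulo hashing: count keys whose owner changes when db-5 is added.
moved_modulo = sum(modulo_lookup(k, old_nodes) != modulo_lookup(k, new_nodes) for k in keys)

# Consistent hashing: same experiment on the ring.
ring = HashRing(old_nodes)
before = {k: ring.lookup(k) for k in keys}
ring.add("db-5")
after = {k: ring.lookup(k) for k in keys}
moved_ring = [k for k in keys if before[k] != after[k]]

print(f"modulo hashing moved {moved_modulo / len(keys):.0%} of keys")
print(f"consistent hashing moved {len(moved_ring) / len(keys):.0%} of keys")
```

With modulo hashing, going from four to five servers relocates roughly four out of five keys in expectation. On the ring, only the keys claimed by the new server move, which is about one in five. The virtual nodes are there to even out how much of the ring each server owns.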

This brings us to microservices architecture, where consistent hashing is often useful. As you aim to handle more concurrent requests, evolving your architecture toward microservices is a logical progression. Microservices, with their inherent flexibility and scalability, align well with the needs of a modern, dynamic digital landscape.

Consistent hashing helps this evolution go smoothly: when service instances or data shards are added or removed, most keys stay where they are, which supports your ability to handle more traffic.

In Closing

System design, scalability, and load balancing form the bedrock of a resilient digital infrastructure. Choosing between horizontal vs vertical scaling is a critical decision that should align with your specific requirements, financial constraints, and long-term aspirations. Success in the digital world hinges on a profound understanding of your system’s unique prerequisites and the readiness to adapt as your digital presence grows. In this ever-evolving landscape, adaptability stands as the ultimate recipe for long-term viability and success.

FAQs

When should I choose horizontal scaling over vertical scaling?

Horizontal scaling is preferable when you anticipate consistent or rapidly growing user traffic, and redundancy, flexibility, and high performance are key requirements for your application.

How do load balancers enhance system performance?

Load balancers evenly distribute incoming traffic across multiple servers, optimizing responsiveness and availability. They also reroute traffic away from a failed server, ensuring fault tolerance.

What is consistent hashing and why is it essential for system design?

Consistent hashing is an algorithm that divides data across multiple machines efficiently, particularly in scenarios with changing machine numbers. It simplifies scaling, reduces the need for data reshuffling, and is ideal for microservices architectures.

Can I combine horizontal and vertical scaling in my architecture?

Yes, hybrid scaling is feasible. You can utilize both methods to enhance your infrastructure’s scalability, performance, and fault tolerance, based on specific use cases and requirements.

What is the role of NGINX in load balancing?

NGINX is a widely used open-source web server and reverse proxy that is commonly deployed as a load balancer to distribute traffic across servers. In that role it supports fault tolerance, load distribution, and improved availability for your architecture.
