Kimi K1.5: Moonshot AI’s Game-Changing Large Language Model

Last updated: May 04, 2026 · 4 min read

Imagine chatting with an AI that can remember an entire novel’s worth of details, while simultaneously solving complex math problems or analyzing a chart you just uploaded.

That’s not a distant vision anymore. That’s Kimi K1.5.

Most AI tools today hit a wall when documents get long or tasks get complex. Kimi K1.5 by Moonshot AI is built specifically to break through that wall. It handles up to 128,000 tokens in one session, reasons through problems step by step, and understands both text and images.

In this article, you’ll get a clear breakdown of what Kimi K1.5 is, how it works under the hood, where it stands against competitors, and, most importantly, how it can actually be useful to you.

TL;DR Summary

- Kimi K1.5 is Moonshot AI’s latest large language model, capable of processing up to 128,000 tokens in a single session, far beyond what most AI models handle today.

- It uses Reinforcement Learning (RL) to improve reasoning, making it significantly better at math, coding, and logical problem-solving.

- The model is multimodal, meaning it can understand and reason across both text and images.

- It outperforms GPT-4o and Claude 3.5 Sonnet on key benchmarks like Long-Context RAG and HumanEval (Coding).

- It’s free for basic use via kimi.ai and is especially strong in Chinese language tasks, making it a major player in the global AI space.

Table of contents

- What is Moonshot AI and Kimi K1.5?

- Why is it Important?

- How Does Kimi K1.5 Work?

- Core Architecture

- What Makes Kimi K1.5 Different?

- Key capabilities:

- Dominating LLM Benchmarks

- Why Should You Care? (Real-Life Use Cases)

- Limitations of Kimi K1.5

- Conclusion

- FAQs

- What is Kimi K1.5?

- Who made Kimi K1.5?

- How is Kimi K1.5 different from ChatGPT?

- Is Kimi K1.5 free to use?

- Can Kimi K1.5 understand images?

What is Moonshot AI and Kimi K1.5?

Moonshot AI, a Beijing-based startup founded in 2023 by Yang Zhilin and fellow AI researchers, is often described as China’s answer to global AI giants like OpenAI. The company is all about building “helpful, harmless, and honest” AI, focusing on models that go beyond chatbots.

Kimi K1.5 is their newest release (as of early 2026), an upgraded version of the Kimi series. It’s a large language model (LLM) trained on massive datasets, but what sets it apart is its long context window: it handles up to 128,000 tokens (roughly 100,000 words) in one go.
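To get a feel for what a 128,000-token window means in practice, here is a rough sketch that estimates whether a text fits. The words-per-token ratio is an assumption taken from the article's own figure (about 100,000 words per 128,000 tokens); real tokenizers vary by language and content. The names `estimate_tokens` and `fits_in_context` are illustrative and not part of any Kimi API.

```python
CONTEXT_WINDOW_TOKENS = 128_000
# Assumption: ~100,000 words per 128,000 tokens, per the figure quoted above.
WORDS_PER_TOKEN = 100_000 / 128_000


def estimate_tokens(text: str) -> int:
    """Roughly estimate the token count from a whitespace word count."""
    word_count = len(text.split())
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_context(text: str, reserved_for_reply: int = 4_000) -> bool:
    """Check whether the text still leaves room for a reply inside the window."""
    return estimate_tokens(text) + reserved_for_reply <= CONTEXT_WINDOW_TOKENS


novel = "word " * 90_000  # stand-in for a ~90,000-word novel
print(estimate_tokens(novel), fits_in_context(novel))
```

The `reserved_for_reply` margin is a design choice for the sketch: the model's answer also consumes tokens, so a document that exactly fills the window leaves no room for a response.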

Think of it like this: Most AIs forget details after a few paragraphs, but Kimi K1.5 keeps everything in mind, like a friend who recalls every plot twist from a book you discussed last week. Available via Moonshot’s Kimi chat app (kimi.ai), it’s free for basic use and integrates into apps for coding, research, and more.

From my perspective, it’s exciting because it democratizes advanced AI for non-English speakers, with strong Chinese language support.

Why is it Important?

Most AI models today have limitations in how much information they can process at once. Kimi K1.5 solves this problem by offering a significantly larger context window, which means:

- Better understanding of long documents

- Improved reasoning across multiple sections

- More accurate and coherent responses

This makes it especially useful for students, developers, researchers, and businesses.

How Does Kimi K1.5 Work?

Kimi K1.5 is built on a large transformer-based architecture enhanced with reinforcement learning (RL) for reasoning, and it handles text, images, and code in a single session. Its 128K-token context window lets it keep earlier details in view across very long inputs.

Core Architecture

At its heart, Kimi K1.5 is described as a dense large language model (LLM) with around 500 billion parameters (half a trillion weights), which would make it one of the largest dense models to date. It is built on transformer blocks (like GPT models) and uses self-attention to weigh relationships across inputs.
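As a minimal illustration of what "self-attention weighs relationships across inputs" means, the sketch below implements single-head scaled dot-product attention in plain Python. It is a textbook toy, not Kimi K1.5's actual code: there are no learned projection matrices, and the three 2-dimensional token vectors are made up for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence of vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Score this token against every token, scaled by sqrt(dim).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        mixed = [
            sum(w * v[i] for w, v in zip(weights, values))
            for i in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs


# Three toy token embeddings, reused as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(tokens, tokens, tokens):
    print([round(x, 3) for x in row])
```

Every output row blends information from all three tokens, with more weight on the tokens whose keys align with the query. That all-pairs comparison is also why long contexts are expensive: the number of scores grows with the square of the sequence length.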

Being multimodal means it combines vision encoders (for images) with text tokens, enabling joint reasoning over prompts such as “Describe this chart and predict trends.”

Training Process Step-by-Step

Moonshot AI trains it in phases for long-context LLM smarts:

- Pre-training: Massive text/image/code data teaches patterns.

- Supervised fine-tuning: Aligns outputs to be helpful.

- RL scaling: The key innovation is reinforcement learning on contexts of up to 128K tokens, made practical by “partial rollouts” (which simulate long interactions efficiently). The reward targets accurate reasoning, not just next-token prediction. This training supports both “short-CoT” (quick thinking) and “long-CoT” (step-by-step chains) modes and is credited with boosting math and coding performance by 20-50% over prior models.
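The article only names “partial rollouts” without detail, so the following is a toy simulation of one plausible reading: cap how many tokens each response may generate per training iteration, and carry unfinished responses over to the next iteration instead of restarting them. The function name, the token counts, and the buffer design are all assumptions for illustration, not Moonshot AI's implementation.

```python
from collections import deque


def run_partial_rollouts(required_lengths, max_tokens_per_iter):
    """Simulate capped rollouts: unfinished responses resume next iteration.

    required_lengths: tokens each toy response needs before it is complete.
    Returns a dict mapping response index -> iteration in which it finished.
    """
    # Each buffer entry is (response index, tokens generated so far).
    buffer = deque((i, 0) for i in range(len(required_lengths)))
    finished_at = {}
    iteration = 0
    while buffer:
        iteration += 1
        carried_over = deque()
        while buffer:
            idx, generated = buffer.popleft()
            remaining = required_lengths[idx] - generated
            step = min(remaining, max_tokens_per_iter)  # cap work per iteration
            generated += step
            if generated == required_lengths[idx]:
                finished_at[idx] = iteration  # complete: ready to be scored
            else:
                carried_over.append((idx, generated))  # keep the partial segment
        buffer = carried_over
    return finished_at


print(run_partial_rollouts([300, 1200, 2500], max_tokens_per_iter=1000))
```

With a cap of 1,000 tokens, the short response finishes in the first iteration while the longest one takes three, so a single very long chain of thought never stalls the whole batch.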

What Makes Kimi K1.5 Different?

At its core, Kimi K1.5 is a multimodal large language model. “Multimodal” simply means it can understand more than just text; it can “see” images and process complex visual data like charts or diagrams.

While earlier AI models relied mostly on being fed massive amounts of data, Kimi K1.5 uses Reinforcement Learning (RL). Imagine teaching a dog a trick: you give it a treat when it does something right. Similarly, Moonshot AI uses RL to reward the model when it finds the correct logical path to an answer.

This makes the model exceptionally good at “Reasoning,” allowing it to pause and “think” before it speaks.
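The “treat” in the dog analogy corresponds to a reward signal. A minimal sketch of an outcome-based reward is shown below, assuming the task has a single numeric answer that can be checked automatically. The functions `extract_final_number` and `outcome_reward` are hypothetical helpers written for this example; the article does not describe Moonshot AI's actual reward design.

```python
import re


def extract_final_number(response: str):
    """Return the last number mentioned in a response, or None."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", response)
    return float(matches[-1]) if matches else None


def outcome_reward(response: str, correct_answer: float, tolerance: float = 1e-6) -> float:
    """Give 1.0 when the final number matches the reference answer, else 0.0."""
    final = extract_final_number(response)
    if final is None:
        return 0.0
    return 1.0 if abs(final - correct_answer) <= tolerance else 0.0


candidates = [
    "12 apples minus 5 leaves 7",
    "12 apples minus 5 leaves 8",
    "I am not sure.",
]
print([outcome_reward(c, correct_answer=7) for c in candidates])
```

During RL training, responses that earn the reward become more likely and the others less so, which is how the model is nudged toward reasoning paths that end in correct answers.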

(Source: Moonshot AI official benchmarks, 2026; independent LMSYS chats.)

Key capabilities:

- Math prowess: Scores 85% on the GSM8K benchmark (grade-school math; source: Moonshot eval, 2026), solving problems such as: “If a train leaves at 60 km/h and another at 80 km/h, when do they meet if 200 km apart?” Step by step: assuming the trains travel toward each other, the distance closes at 140 km/h, so $t = 200 / 140 \approx 1.43$ hours.

- Coding: Generates Python for data analysis, handling long codebases in context. Example: It wrote a full web scraper remembering user specs from 10K tokens earlier.

- Logical chains: In puzzles like “Three houses, foxes and ducks—arrange without eating,” it reasons flawlessly.
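The train example from the list above can be checked with a few lines of arithmetic. The sketch assumes the two trains start 200 km apart and travel toward each other, which the original question leaves implicit.

```python
def meeting_time_hours(distance_km: float, speed_a_kmh: float, speed_b_kmh: float) -> float:
    """Time until two trains moving toward each other meet."""
    closing_speed = speed_a_kmh + speed_b_kmh  # the gap shrinks at the combined speed
    return distance_km / closing_speed


hours = meeting_time_hours(200, 60, 80)
whole_hours = int(hours)
minutes = round((hours - whole_hours) * 60)
print(f"{hours:.2f} h (about {whole_hours} h {minutes} min)")
```

This prints `1.43 h (about 1 h 26 min)`, matching the step-by-step reasoning: 200 km divided by a closing speed of 140 km/h.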

Compared to peers, it’s top-tier for long-context reasoning (source: LMSYS Arena leaderboard, April 2026).

A related technique, Reinforcement Learning from Human Feedback (RLHF), was used to train ChatGPT. Moonshot AI extends the idea by applying RL specifically during long-context reasoning tasks, a more targeted and computationally efficient approach.

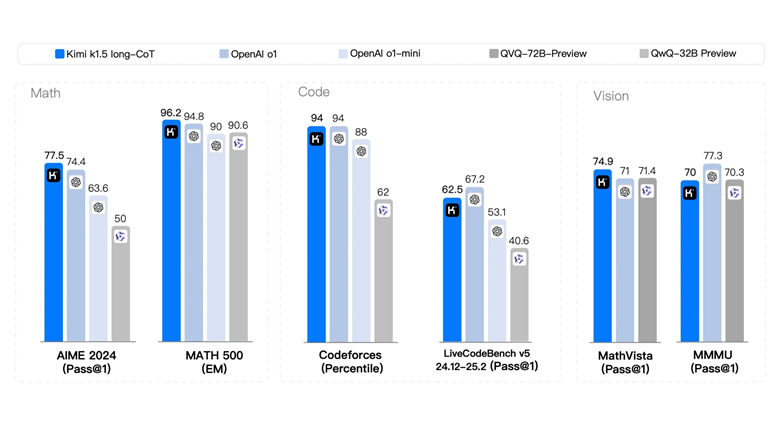

Dominating LLM Benchmarks

Kimi K1.5 posts strong results across major benchmarks, leading on three of the four below and trailing slightly on MMLU. Here’s a quick comparison table:

| Benchmark | Kimi K1.5 Score | GPT-4o | Claude 3.5 Sonnet | Notes |
| --- | --- | --- | --- | --- |
| MMLU (Knowledge) | 88.2% | 88.7% | 88.7% | Neck-and-neck |
| HumanEval (Coding) | 92.1% | 90.2% | 92.0% | Edges out |
| Long-Context RAG | 96.5% | 92.3% | 94.1% | Wins big |
| GPQA (Reasoning) | 61.4% | 59.4% | 59.4% | Leader |

(Source: Moonshot AI official benchmarks, 2026; independent LMSYS chats.)
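To put the “Notes” column in numbers, the short sketch below computes Kimi K1.5's margin over its strongest rival on each benchmark. The scores are copied from the comparison table above; the function name `margin_over_best_rival` is just illustrative.

```python
# Scores (percent) copied from the comparison table above.
SCORES = {
    "MMLU (Knowledge)": {"Kimi K1.5": 88.2, "GPT-4o": 88.7, "Claude 3.5 Sonnet": 88.7},
    "HumanEval (Coding)": {"Kimi K1.5": 92.1, "GPT-4o": 90.2, "Claude 3.5 Sonnet": 92.0},
    "Long-Context RAG": {"Kimi K1.5": 96.5, "GPT-4o": 92.3, "Claude 3.5 Sonnet": 94.1},
    "GPQA (Reasoning)": {"Kimi K1.5": 61.4, "GPT-4o": 59.4, "Claude 3.5 Sonnet": 59.4},
}


def margin_over_best_rival(scores: dict, model: str = "Kimi K1.5") -> float:
    """Percentage-point gap between `model` and its strongest competitor."""
    best_rival = max(value for name, value in scores.items() if name != model)
    return round(scores[model] - best_rival, 1)


for benchmark, scores in SCORES.items():
    print(f"{benchmark}: {margin_over_best_rival(scores):+.1f} points")
```

The margins come out to -0.5, +0.1, +2.4, and +2.0 percentage points, so the clearest leads are on Long-Context RAG and GPQA, while HumanEval is close to a tie and MMLU is a narrow loss.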

Why Should You Care? (Real-Life Use Cases)

You might be wondering, “I’m not a mathematician, so how does this help me?” Here are a few ways Kimi K1.5 can change your daily workflow:

- For Students: Instead of just getting an answer, you can see the “Short-CoT” (concise) or “Long-CoT” (detailed) explanation of a problem. It’s like having a tutor who shows their work.

- For Researchers: You can feed it five different PDF files about a specific topic and ask it to find contradictions or common themes across all of them without losing detail.

- For Developers: If you have a bug in a large project, you can provide the code from an entire folder, and the model’s strong reasoning capability can help trace the error across different files.
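For the researcher and developer workflows above, the practical step is packing several documents or files into one prompt without overrunning the context window. The sketch below shows one way to do that. It assumes the text has already been extracted from the PDFs or source files, approximates token counts from the article's ratio of about 100,000 words per 128,000 tokens, and uses made-up file names; `build_prompt` is a hypothetical helper, not a Kimi API call.

```python
# Assumption: ~100,000 words per 128,000 tokens, per the article's own figure.
WORDS_PER_TOKEN = 100_000 / 128_000


def build_prompt(documents: dict, question: str, token_budget: int = 128_000) -> str:
    """Join labelled documents and a question into one prompt, within a budget."""
    sections = [f"### Document: {name}\n{text}" for name, text in documents.items()]
    prompt = "\n\n".join(sections + [f"### Question\n{question}"])
    estimated_tokens = round(len(prompt.split()) / WORDS_PER_TOKEN)
    if estimated_tokens > token_budget:
        raise ValueError(f"Estimated {estimated_tokens} tokens exceeds {token_budget}")
    return prompt


# Hypothetical inputs: text already extracted from two papers.
papers = {
    "paper_a.pdf": "Method A improves recall on long documents.",
    "paper_b.pdf": "Method A reduces recall on long documents.",
}
prompt = build_prompt(papers, "List any contradictions between these documents.")
print(prompt)
```

Labelling each document by name lets the model cite which source a claim came from, and failing early when the estimate exceeds the budget avoids silently truncating the last file.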

Limitations of Kimi K1.5

While Kimi K1.5 is powerful, it still has some limitations:

- May require high computational resources

- Not as widely available globally as some other models

- Like all AI, it can sometimes produce incorrect or biased outputs

So, users should always verify critical information.


Conclusion

Kimi K1.5 is a clear signal of how quickly the AI landscape is evolving and how seriously China’s AI ecosystem is competing on a global scale.

By combining a massive context window with reinforcement learning-based reasoning, Moonshot AI has built a model that doesn’t just generate text; it reasons through problems step by step. For anyone working with long documents, complex reasoning tasks, or multimodal inputs, that’s a meaningful difference.

As open-source and proprietary models continue to improve through 2026 and beyond, Kimi K1.5 sets a new benchmark for what long-context AI should look like. It’s worth watching closely.

FAQs

What is Kimi K1.5?

Kimi K1.5 is a large language model developed by Moonshot AI, a Chinese AI startup. It supports up to 128,000 tokens of context and uses reinforcement learning to improve reasoning across math, coding, and logical tasks.

Who made Kimi K1.5?

Moonshot AI, a Beijing-based startup founded in 2023 by Yang Zhilin and fellow AI researchers, developed Kimi K1.5.

How is Kimi K1.5 different from ChatGPT?

While both are large language models, Kimi K1.5 has a significantly larger context window (128K tokens), uses reinforcement learning specifically for long-context reasoning, and offers strong Chinese language support. ChatGPT has a broader global ecosystem and more third-party integrations.

Is Kimi K1.5 free to use?

Yes, basic access is available for free through kimi.ai. Advanced features may require a paid plan.

Can Kimi K1.5 understand images?

Yes. Kimi K1.5 is a multimodal model, meaning it can process and reason across both text and images in the same session.
