How Do Vision Transformers Work? A Comprehensive Guide

Last Updated: Apr 06, 2026

Computer vision has traditionally relied on Convolutional Neural Networks (CNNs) for tasks such as image classification, object detection, and segmentation. However, the introduction of Vision Transformers (ViTs) changed how machines interpret visual information.

Instead of relying on convolution operations, Vision Transformers apply the transformer architecture originally developed for Natural Language Processing (NLP) to image data.

If you already understand the basics of machine learning and deep learning, the next step is learning how this architecture actually works. This article walks you through the Vision Transformer architecture step by step, explaining the components, the workflow, and why this model has become so influential in modern computer vision systems.

Quick Answer:

A Vision Transformer (ViT) processes images by dividing them into small patches, converting each patch into an embedding, and analyzing their relationships using a transformer’s self-attention mechanism. This allows the model to understand global visual context across the entire image, enabling powerful performance in tasks like image classification and object detection.

Table of contents

- What is a Vision Transformer?

- Key idea behind Vision Transformers

- Why Did Vision Transformers Become Important?

- Advantages of Vision Transformers

- High-Level Vision Transformer Architecture

- Step-by-Step Architecture of Vision Transformers

- Image Patch Embedding

- Patch Embedding Projection

- Adding the CLS Token

- Positional Encoding

- Transformer Encoder

- Structure of a Transformer Encoder Block

- Self-Attention in Vision Transformers

- Understanding Self-Attention

- Multi-Head Self-Attention

- Feed-Forward Networks

- Final Classification Head

- Training Vision Transformers

- Applications of Vision Transformers

- Image classification

- Object detection

- Image segmentation

- Medical imaging

- Autonomous driving

- Vision Transformers vs CNNs

- Popular Variants of Vision Transformers

- Swin Transformer

- DeiT (Data-efficient Image Transformers)

- CvT (Convolutional Vision Transformer)

- TimeSformer

- Limitations of Vision Transformers

- Data hunger

- High computational cost

- Training instability

- The Future of Vision Transformers

- Final Thoughts

- FAQs

- What is a Vision Transformer in machine learning?

- How do Vision Transformers process images?

- Why are Vision Transformers better than CNNs?

- What is the patch size in Vision Transformers?

- What are Vision Transformers used for?

What is a Vision Transformer?

A Vision Transformer (ViT) is a deep learning architecture that adapts the transformer model to process images. Instead of analyzing pixels through convolution filters, the model divides an image into smaller patches and processes them as a sequence, similar to how transformers process words in a sentence.

In simple terms, a Vision Transformer treats an image like a sentence made of visual tokens.

Each token represents a small region of the image, and the model uses self-attention mechanisms to understand relationships between these regions.


Key idea behind Vision Transformers

The core idea is simple:

- Break an image into small patches

- Convert each patch into an embedding

- Add positional information

- Feed the sequence into a transformer encoder

- Use the final representation to make predictions

This pipeline allows the model to capture global relationships across the entire image within a single layer. CNNs build up global context only gradually, because each convolution sees a small local receptive field.

Why Did Vision Transformers Become Important?

Before understanding the architecture, it’s worth seeing why ViTs gained attention.

Traditional CNNs are excellent at detecting local features like edges, shapes, and textures. However, they rely on stacked convolution layers to gradually expand their receptive field.

Vision Transformers solve this differently.

Because self-attention allows every patch to interact with every other patch, the model can use global context from the very first layer.

Advantages of Vision Transformers

Vision Transformers introduced several benefits:

- Global context understanding across the image

- Scalability with large datasets

- Parallel processing of all patches in a single pass

- Flexibility across multiple vision tasks

Today, many advanced computer vision models use transformer-based architectures or hybrid CNN-transformer approaches.

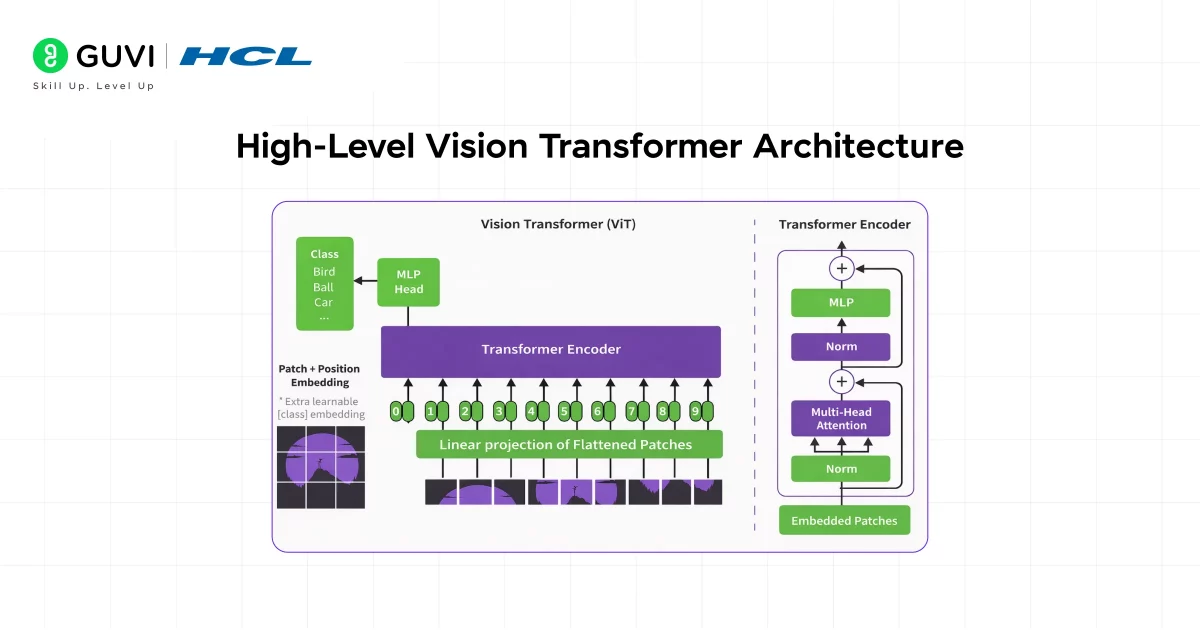

High-Level Vision Transformer Architecture

At a high level, the Vision Transformer architecture consists of four main components:

- Patch Embedding Layer

- Positional Encoding

- Transformer Encoder

- Classification Head

Each component plays a specific role in converting raw image data into meaningful predictions.

Let’s break each of these down.

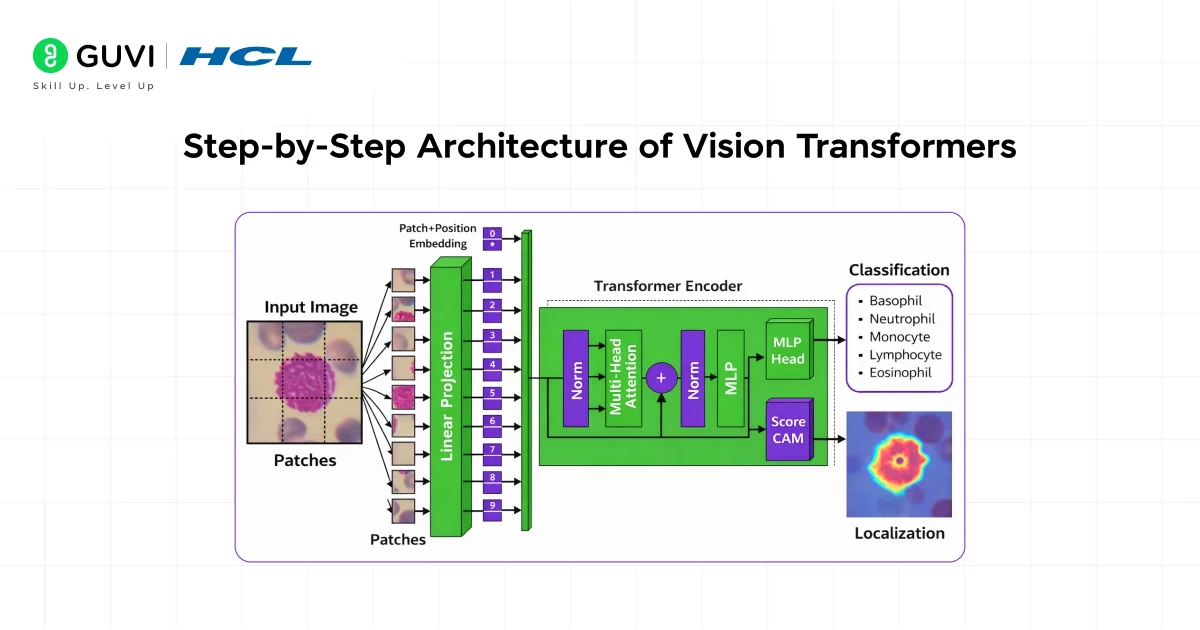

Step-by-Step Architecture of Vision Transformers

1. Image Patch Embedding

The first step in a Vision Transformer is converting the image into patches.

Splitting the Image

Instead of processing the entire image at once, the model divides it into fixed-size patches.

For example:

- Input image: 224 × 224

- Patch size: 16 × 16

This produces:

14 × 14 = 196 patches

Each patch becomes an independent token.

This step effectively transforms a 2D image into a sequence of visual tokens, which allows the transformer architecture to process it similarly to text sequences.
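The splitting step can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference implementation: the function name `split_into_patches` and the channels-last `(H, W, C)` layout are assumptions made for the example.

```python
import numpy as np

def split_into_patches(image: np.ndarray, patch_size: int) -> np.ndarray:
    """Split an (H, W, C) image into non-overlapping square patches.

    Returns an array of shape (num_patches, patch_size, patch_size, C),
    ordered row by row starting at the top-left corner.
    """
    height, width, channels = image.shape
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be divisible by patch_size")
    rows, cols = height // patch_size, width // patch_size
    # (rows, patch, cols, patch, C) -> (rows, cols, patch, patch, C)
    grid = image.reshape(rows, patch_size, cols, patch_size, channels)
    grid = grid.transpose(0, 2, 1, 3, 4)
    return grid.reshape(rows * cols, patch_size, patch_size, channels)

image = np.zeros((224, 224, 3), dtype=np.float32)  # placeholder image
patches = split_into_patches(image, patch_size=16)
print(patches.shape)  # (196, 16, 16, 3)
```

With a 224 × 224 input and 16 × 16 patches, the grid is 224 / 16 = 14 patches per side, which gives the 196 tokens mentioned above.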

Flattening the Patches

Each patch contains multiple pixel values.

For example:

16 × 16 × 3 (RGB channels) = 768 values

This patch is flattened into a single 768-dimensional vector.

After flattening, the vector is passed through a linear projection layer, which converts it into a fixed-size embedding vector.

These vectors are called patch embeddings.

Think of them as the visual equivalent of word embeddings in NLP models.

2. Patch Embedding Projection

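Once the patches are flattened, they need to be projected into the model's embedding dimension. This is not necessarily a higher dimension: in ViT-Base, a flattened 16 × 16 × 3 patch has 768 values and the embedding also has 768 dimensions.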

This is done using a learnable linear layer.

For example:

Patch vector → Linear transformation → Embedding vector

This projection helps the model encode meaningful visual features from the patch.

An alternative implementation uses a convolutional layer with kernel size and stride both equal to the patch size, which performs patch extraction and embedding in one step.

At the end of this stage, the image has been converted into a sequence of embeddings.
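As a rough sketch, flattening and projection amount to one reshape and one matrix multiplication. The embedding width of 768 is assumed here (the ViT-Base setting), and the weights are random stand-ins for parameters that a real model would learn.

```python
import numpy as np

rng = np.random.default_rng(0)

num_patches, patch_size, channels = 196, 16, 3
embed_dim = 768  # assumed embedding width (ViT-Base setting)

# Random stand-in for real image patches: (196, 16, 16, 3)
patches = rng.standard_normal((num_patches, patch_size, patch_size, channels))

# Flatten each patch into one vector of length 16 * 16 * 3 = 768
flat = patches.reshape(num_patches, -1)

# Learnable parameters in a real model; random values here
weight = rng.standard_normal((flat.shape[1], embed_dim)) * 0.02
bias = np.zeros(embed_dim)

# The same linear layer is applied to every patch
patch_embeddings = flat @ weight + bias
print(flat.shape, patch_embeddings.shape)  # (196, 768) (196, 768)
```

The two shapes match only because the assumed embedding width equals the flattened patch length. With a different `embed_dim`, the second shape would be `(196, embed_dim)`.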

3. Adding the CLS Token

Vision Transformers introduce a special token called the classification token ([CLS]).

This token is a learnable embedding that is prepended to the patch sequence.

Its purpose is simple:

It gathers information from all patches during the transformer processing.

By the end of the network, this token contains the global representation of the entire image, which is then used for classification tasks.

You can think of it as a summary vector for the whole image.
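In code, adding the token is a single concatenation. The sketch below assumes 196 patch embeddings of width 768 and uses a zero vector in place of the learned [CLS] embedding.

```python
import numpy as np

rng = np.random.default_rng(0)
embed_dim = 768  # assumed embedding width

# Random stand-in for the patch embeddings from the previous stage
patch_embeddings = rng.standard_normal((196, embed_dim))

# A learnable vector in a real model; zeros here for illustration
cls_token = np.zeros((1, embed_dim))

# Place [CLS] at index 0, ahead of the 196 patch tokens
tokens = np.concatenate([cls_token, patch_embeddings], axis=0)
print(tokens.shape)  # (197, 768)
```

The sequence length grows from 196 to 197, and index 0 is the position the classification head reads from later.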

4. Positional Encoding

Transformers process sequences in parallel and do not inherently understand the order of tokens.

This creates a challenge when working with images because spatial relationships matter.

To solve this, Vision Transformers add a positional embedding to every token embedding. In the original ViT these are learned vectors, one per sequence position.

Why positional encoding matters

Without positional information, the model would not know whether a patch came from:

- The top of the image

- The bottom

- The center

Positional embeddings encode spatial location so the model can understand the structure of the image.

After this step, each token contains:

Patch embedding + positional embedding

Now the sequence is ready for the transformer encoder.
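A minimal sketch of this addition, assuming one learned position vector per token (including the [CLS] slot) and random values in place of trained parameters:

```python
import numpy as np

rng = np.random.default_rng(0)
num_tokens, embed_dim = 197, 768  # assumed: 196 patches + [CLS], width 768

# Random stand-in for the token sequence after the [CLS] token is added
tokens = rng.standard_normal((num_tokens, embed_dim))

# One vector per sequence position; learned in a real model, random here
pos_embedding = rng.standard_normal((num_tokens, embed_dim)) * 0.02

# Element-wise addition keeps the shape and injects position information
encoder_input = tokens + pos_embedding
print(encoder_input.shape)  # (197, 768)
```

Because the position vectors differ per index, two patches with identical pixels but different locations reach the encoder as different inputs.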

5. Transformer Encoder

The transformer encoder is the core of the Vision Transformer.

It consists of multiple stacked layers that include:

- Multi-Head Self-Attention

- Feed-Forward Neural Networks

- Layer Normalization

- Residual Connections

These layers work together to learn relationships between patches.

Structure of a Transformer Encoder Block

Each encoder block typically contains, in order:

- Layer normalization

- Multi-head self-attention

- Residual connection

- Layer normalization

- Feed-forward neural network

- Another residual connection

The model repeats this structure across multiple layers.
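The wiring of one block can be sketched as follows. This assumes the pre-norm arrangement (normalize, apply the sublayer, add the result back to the input) and omits the learnable scale and shift of layer normalization. `toy_attention` and `toy_ffn` are hypothetical placeholders so the example runs; they are not real sublayers.

```python
import numpy as np

def layer_norm(x, eps=1e-6):
    """Normalize each token vector to zero mean and unit variance."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def encoder_block(x, attention, feed_forward):
    """Pre-norm block: each sublayer sees a normalized input, and its
    output is added back to the unnormalized residual stream."""
    x = x + attention(layer_norm(x))
    x = x + feed_forward(layer_norm(x))
    return x

# Hypothetical stand-ins for the real sublayers
def toy_attention(x):
    # Every token receives the average of all tokens
    return np.broadcast_to(x.mean(axis=0, keepdims=True), x.shape)

def toy_ffn(x):
    return np.tanh(x)

tokens = np.random.default_rng(0).standard_normal((197, 768))
out = tokens
for _ in range(12):  # assumed depth of 12 blocks
    out = encoder_block(out, toy_attention, toy_ffn)
print(out.shape)  # (197, 768)
```

The residual additions mean each block refines the token sequence rather than replacing it, which is why the shape stays the same from block to block.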

Self-Attention in Vision Transformers

Understanding Self-Attention

Self-attention is what allows Vision Transformers to understand relationships between patches.

Instead of focusing on neighboring pixels like CNNs, the model examines how each patch relates to every other patch.

For each patch embedding, three vectors are computed:

- Query (Q)

- Key (K)

- Value (V)

These vectors are generated using learnable matrices.

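The attention mechanism computes the similarity between patches as the dot product of one patch's query with every patch's key, scaled by the square root of the key dimension.

A softmax turns these scores into attention weights, which determine how much each patch's value vector contributes to the output for the querying patch.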
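A single attention head can be sketched as scaled dot-product attention. The sizes (197 tokens, width 768, head dimension 64) and the random projection matrices are assumptions for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # Similarity of every query with every key, scaled by sqrt(head_dim)
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
num_tokens, embed_dim, head_dim = 197, 768, 64
x = rng.standard_normal((num_tokens, embed_dim))
w_q, w_k, w_v = (rng.standard_normal((embed_dim, head_dim)) * 0.02
                 for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)  # (197, 64) (197, 197)
```

Row `i` of `weights` holds the importance that token `i` assigns to every token in the sequence, so the matrix has one entry for each pair of tokens.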

Why this matters

Self-attention enables the model to capture:

- Long-range dependencies

- Global image relationships

- Context between distant regions

For example, a patch containing part of a cat’s ear can attend to a patch containing the cat’s body, even if they are far apart in the image.

Multi-Head Self-Attention

Instead of computing attention once, Vision Transformers use multiple attention heads.

Each head learns different types of relationships between patches.

For instance:

- One head may focus on texture

- Another may focus on edges

- Another may capture object boundaries

This multi-head design allows the model to capture diverse visual patterns simultaneously.

The outputs of all heads are then concatenated and passed through a final linear projection before moving on to the next sublayer.
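The multi-head version can be sketched by splitting the embedding into equal slices, one per head. The fused `w_qkv` matrix, the output projection `w_out`, and the choice of 12 heads are assumptions for this example, with random values in place of learned weights.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_self_attention(x, w_qkv, w_out, num_heads):
    """Run num_heads attention heads in parallel, then merge them."""
    num_tokens, embed_dim = x.shape
    head_dim = embed_dim // num_heads
    # One matmul yields Q, K, V for all heads: (tokens, 3 * embed_dim)
    qkv = (x @ w_qkv).reshape(num_tokens, 3, num_heads, head_dim)
    q, k, v = qkv.transpose(1, 2, 0, 3)  # each: (heads, tokens, head_dim)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(head_dim)
    weights = softmax(scores, axis=-1)   # (heads, tokens, tokens)
    per_head = weights @ v               # (heads, tokens, head_dim)
    # Concatenate the heads back into one vector per token
    concat = per_head.transpose(1, 0, 2).reshape(num_tokens, embed_dim)
    return concat @ w_out                # final linear projection

rng = np.random.default_rng(0)
num_tokens, embed_dim, num_heads = 197, 768, 12
x = rng.standard_normal((num_tokens, embed_dim))
w_qkv = rng.standard_normal((embed_dim, 3 * embed_dim)) * 0.02
w_out = rng.standard_normal((embed_dim, embed_dim)) * 0.02

out = multi_head_self_attention(x, w_qkv, w_out, num_heads)
print(out.shape)  # (197, 768)
```

Each head works on a 64-dimensional slice (768 / 12) and has its own attention matrix, so different heads are free to attend to different patches.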

Feed-Forward Networks

After the attention layer, the output goes through a feed-forward neural network (FFN).

This network typically contains:

- Two fully connected layers

- A non-linear activation function (often GELU)

The purpose of the FFN is to further transform the representation learned from the attention mechanism.

Together, attention and feed-forward layers allow the model to learn both:

- Relationships between patches

- Complex feature representations
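The feed-forward network can be sketched as two matrix multiplications with GELU in between. The hidden width of four times the embedding width is an assumption (it matches the ViT-Base configuration), the weights are random, and GELU is written with its common tanh approximation rather than the exact form.

```python
import numpy as np

def gelu(x):
    """Tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi)
                                    * (x + 0.044715 * x ** 3)))

def feed_forward(x, w1, b1, w2, b2):
    """Expand each token to the hidden width, apply GELU, project back."""
    return gelu(x @ w1 + b1) @ w2 + b2

rng = np.random.default_rng(0)
num_tokens, embed_dim = 197, 768
hidden_dim = 4 * embed_dim  # assumed expansion factor of 4

x = rng.standard_normal((num_tokens, embed_dim))
w1 = rng.standard_normal((embed_dim, hidden_dim)) * 0.02
b1 = np.zeros(hidden_dim)
w2 = rng.standard_normal((hidden_dim, embed_dim)) * 0.02
b2 = np.zeros(embed_dim)

out = feed_forward(x, w1, b1, w2, b2)
print(out.shape)  # (197, 768)
```

Unlike attention, this network is applied to each token independently, so it transforms features without mixing information between patches.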

Final Classification Head

Once the sequence passes through multiple transformer layers, the final output corresponding to the CLS token is extracted.

This token now contains information aggregated from the entire image.

The classification head typically consists of:

- Layer normalization

- Linear layer

- Softmax

This produces the final prediction.
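A sketch of the head, assuming 1,000 output classes and random weights. Only the [CLS] position (index 0) of the encoder output is used, and layer normalization is shown without its learnable scale and shift.

```python
import numpy as np

def layer_norm(x, eps=1e-6):
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def classify(encoder_output, w_head, b_head):
    """Turn the [CLS] output vector into class probabilities."""
    cls_output = layer_norm(encoder_output[0])  # token at index 0
    logits = cls_output @ w_head + b_head
    return softmax(logits)

rng = np.random.default_rng(0)
embed_dim, num_classes = 768, 1000  # assumed sizes

# Random stand-in for the output of the last encoder block
encoder_output = rng.standard_normal((197, embed_dim))
w_head = rng.standard_normal((embed_dim, num_classes)) * 0.02
b_head = np.zeros(num_classes)

probs = classify(encoder_output, w_head, b_head)
print(probs.shape, int(probs.argmax()))
```

The predicted class is the index with the highest probability. The other 196 output tokens are ignored by this head.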

Training Vision Transformers

Vision Transformers typically require large datasets for training.

Unlike CNNs, they do not have strong built-in inductive biases such as locality and translation equivariance.

As a result, they benefit significantly from large-scale pretraining datasets such as:

- ImageNet

- JFT-300M

- LAION datasets

After pretraining, the model can be fine-tuned for downstream tasks.

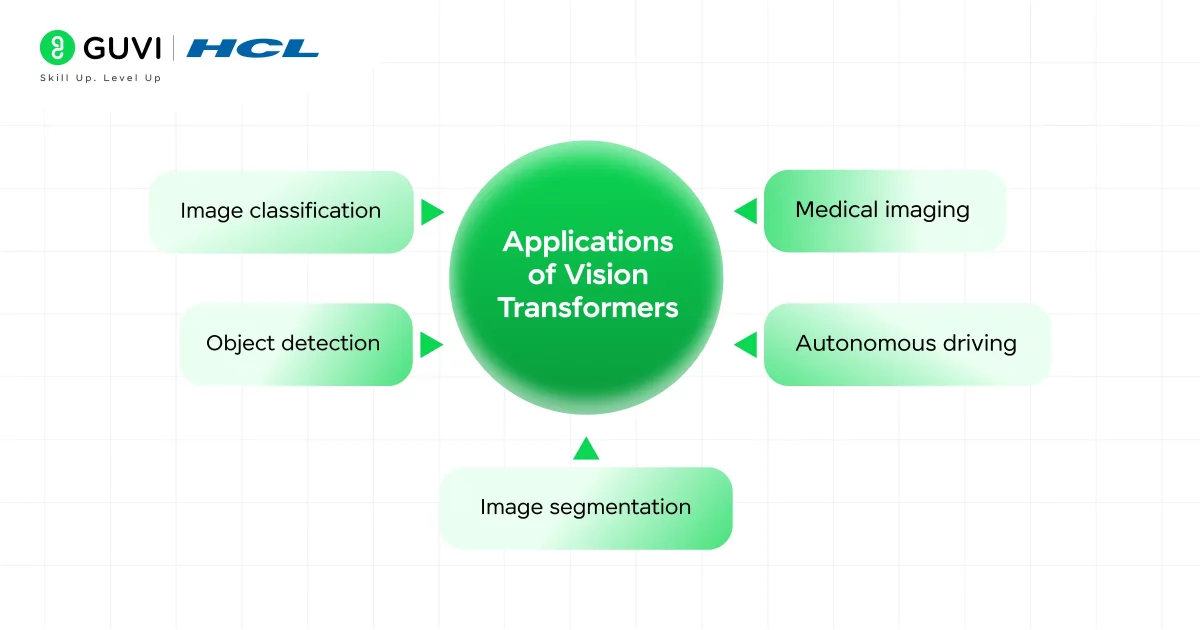

Applications of Vision Transformers

Vision Transformers are used across many modern AI systems.

1. Image classification

When pretrained on large datasets, ViT models match or exceed strong CNN baselines on standard classification benchmarks.

2. Object detection

Models like DETR pair a CNN backbone with a transformer encoder-decoder to predict bounding boxes directly.

3. Image segmentation

Vision Transformers help identify objects and boundaries in images.

4. Medical imaging

Vision Transformers are used to detect tumors, anomalies, and other patterns in medical scans.

5. Autonomous driving

Vision Transformers help vehicles recognize objects, lanes, and obstacles.

Vision Transformers vs CNNs

| Feature | CNN | Vision Transformer |
| --- | --- | --- |
| Feature extraction | Convolution filters | Self-attention |
| Context understanding | Local first | Global from start |
| Data efficiency | Better on small datasets | Better on large datasets |
| Cost as input grows | Roughly linear in pixel count | Quadratic in patch count (self-attention) |

CNNs still perform well on smaller datasets, but Vision Transformers scale better with large data and compute.

Popular Variants of Vision Transformers

Since the original ViT paper, many improvements have been proposed.

1. Swin Transformer

Restricts attention to local windows that shift between layers, which keeps the cost roughly linear in image size.

2. DeiT (Data-efficient Image Transformers)

Improves data efficiency with stronger augmentation and knowledge distillation, so the model trains well without very large pretraining datasets.

3. CvT (Convolutional Vision Transformer)

Combines convolution operations with transformer architecture.

4. TimeSformer

Designed specifically for video understanding tasks.

These variants address limitations such as computational cost and data requirements.

Vision Transformers were inspired by NLP models. The architecture is based on the Transformer introduced in the paper “Attention Is All You Need,” so the same mechanism used for language understanding is now used for visual perception.

Limitations of Vision Transformers

Despite their strengths, Vision Transformers also have challenges.

1. Data hunger

ViTs typically require large training datasets to perform well.

2. High computational cost

Self-attention scales quadratically with the number of patches.

3. Training instability

Training transformers from scratch can be difficult without careful optimization.

These limitations are why many modern architectures adopt hybrid approaches.
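The quadratic cost is easy to see with a little arithmetic. The sketch below counts the entries in one attention matrix for a few input resolutions, assuming 16 × 16 patches and one extra [CLS] token. The helper name `attention_matrix_entries` is made up for this example.

```python
def attention_matrix_entries(image_size: int, patch_size: int = 16):
    """Return (token count, entries in one attention matrix)."""
    num_tokens = (image_size // patch_size) ** 2 + 1  # patches plus [CLS]
    return num_tokens, num_tokens ** 2

for image_size in (224, 448, 896):
    tokens, entries = attention_matrix_entries(image_size)
    print(f"{image_size}px: {tokens} tokens, {entries:,} attention entries")
```

Doubling the image side roughly quadruples the token count and multiplies the attention matrix size by about 16, for every head in every layer.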

The Future of Vision Transformers

Vision Transformers are rapidly evolving.

Research is focusing on:

- Efficient attention mechanisms

- Multi-modal models combining vision and language

- Edge-friendly transformer architectures

- Self-supervised training

Models like CLIP, SAM, and multimodal foundation models already use transformer-based vision encoders.

As AI systems continue to scale, Vision Transformers are expected to play a major role in general-purpose visual intelligence.


Final Thoughts

Vision Transformers represent a major shift in how machines understand images. By treating images as sequences of patches and applying self-attention mechanisms, these models can capture global context more effectively than traditional CNNs.

If you work in machine learning or computer vision, understanding the Vision Transformer architecture gives you insight into the direction modern AI research is heading.

The key takeaway is simple: Instead of learning visual features through convolution filters, Vision Transformers learn relationships between image patches using attention. This allows them to understand the entire image context simultaneously, enabling powerful performance across a wide range of vision tasks.

FAQs

1. What is a Vision Transformer in machine learning?

A Vision Transformer (ViT) is a deep learning model that applies the transformer architecture to image data. Instead of using convolution layers like CNNs, it splits images into patches and processes them using self-attention mechanisms to understand relationships across the image.

2. How do Vision Transformers process images?

Vision Transformers divide an image into small patches, convert them into embeddings, and feed them into a transformer encoder. The self-attention mechanism then learns relationships between these patches to understand the overall image.

3. Why are Vision Transformers better than CNNs?

Vision Transformers are not universally better. Self-attention lets them capture global relationships between different parts of an image, which helps them model broader context than CNNs and tends to pay off when large training datasets are available. On smaller datasets, CNNs often remain the stronger choice.

4. What is the patch size in Vision Transformers?

Patch size is the height and width, in pixels, of each square region the input image is divided into. For example, a 224×224 image with a 16×16 patch size creates 14 × 14 = 196 patches, each treated as a token in the transformer model.

5. What are Vision Transformers used for?

Vision Transformers are used in tasks like image classification, object detection, image segmentation, medical imaging, and autonomous driving. They are increasingly used in modern AI systems because of their ability to learn global visual relationships.
