RAG vs LLM: Key Technical Differences Explained

Apr 30, 2026 7 Min Read 1147 Views

(Last Updated)

Artificial intelligence has evolved rapidly, but one question continues to dominate modern AI architecture discussions: What is the difference between RAG and LLM?

Large Language Models generate text, code, summaries, and analytical responses, but they rely on pre-trained knowledge and lack access to real-time or proprietary data without modification. Retrieval-Augmented Generation addresses this gap by combining LLMs with external retrieval systems, grounding outputs in relevant, verifiable documents.

In this guide, we break down how LLMs work, how RAG enhances them, and when to use each approach for modern AI systems.

Quick Answer:

LLMs generate responses using knowledge stored in model parameters, making them effective for reasoning, coding, and content creation. RAG enhances LLMs by retrieving relevant external documents before generation, improving factual accuracy and traceability. Choose LLM for general tasks and RAG for data-sensitive, compliance-driven, or frequently updated knowledge environments.

Table of contents

- What Is RAG?

- Core Components of a RAG System

- What Is LLM?

- Core Components of an LLM System

- Top Benefits of RAG

- Improved Factual Accuracy Through Retrieval Grounding

- Continuous Knowledge Updates Without Retraining

- Controlled Access to Enterprise Data

- Compliance and Audit Traceability

- Long-Term Operational Cost Efficiency

- Top Benefits of LLM

- Broad Cognitive Capability

- High Throughput Inference with Simpler Architecture

- Rapid Task Adaptation Through Prompting

- Natural Language Interface for Digital Systems

- Flexible Deployment Models

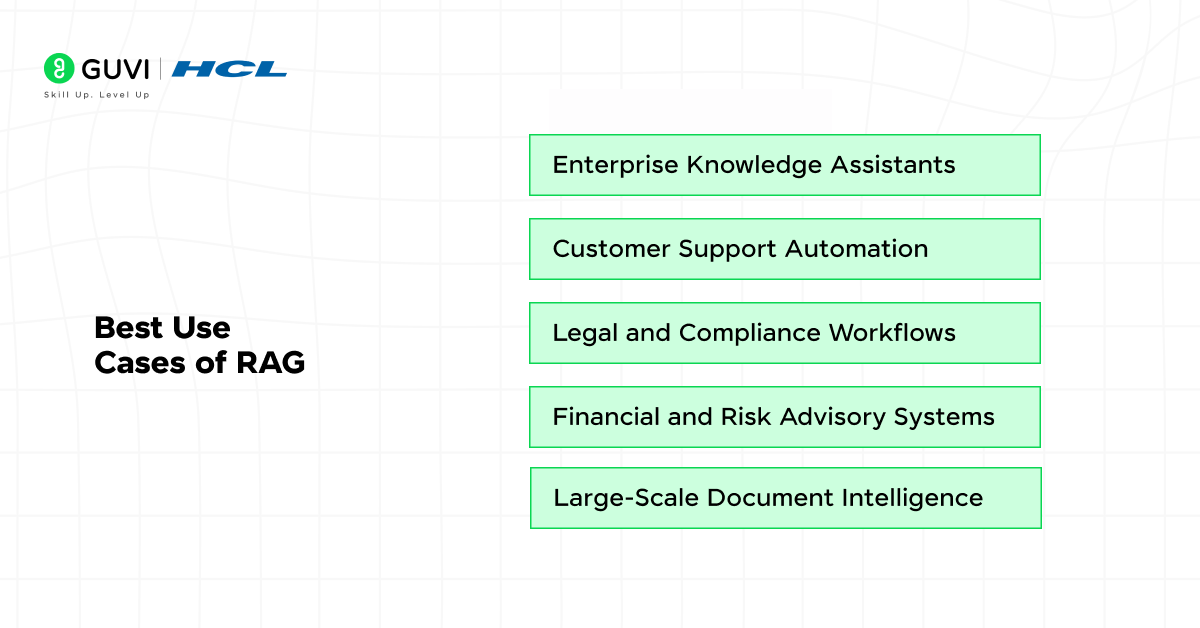

- Best Use Cases of RAG

- Enterprise Knowledge Assistants

- Customer Support Automation

- Legal and Compliance Workflows

- Financial and Risk Advisory Systems

- Large-Scale Document Intelligence

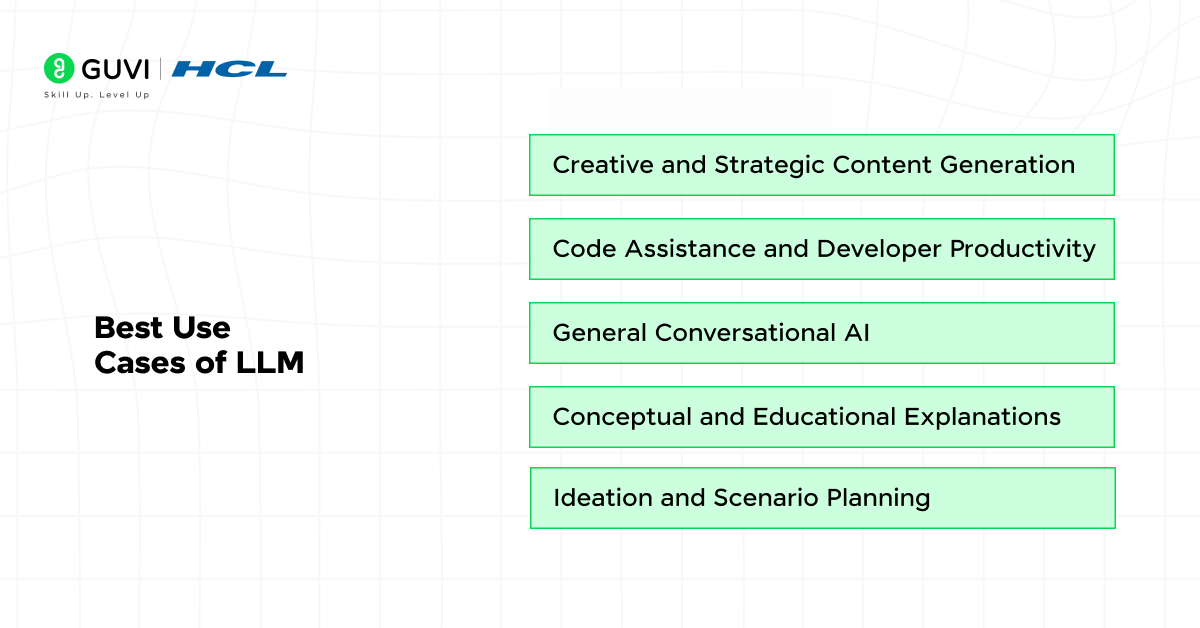

- Best Use Cases of LLM

- Creative and Strategic Content Generation

- Code Assistance and Developer Productivity

- General Conversational AI

- Conceptual and Educational Explanations

- Ideation and Scenario Planning

- How RAG Works: Step-by-Step Architecture

- Step 1: Query Embedding

- Step 2: Vector Similarity Retrieval

- Step 3: Context Construction and Filtering

- Step 4: Context Injection into LLM

- Step 5: Grounded Response Generation

- How LLM Works: Step-by-Step Architecture

- Step 1: Tokenization and Embedding

- Step 2: Transformer Layer Processing

- Step 3: Contextual Representation Formation

- Step 4: Probability Distribution Generation

- Step 5: Autoregressive Decoding

- Difference Between RAG and LLM: Side-by-Side Comparison

- Enterprise Readiness

- RAG vs LLM: Key Differences at a Glance

- Conclusion

- FAQs

- Is RAG more accurate than an LLM?

- Does RAG eliminate hallucinations?

- Is RAG more expensive?

- RAG vs fine-tuning?

What Is RAG?

Retrieval-Augmented Generation is a hybrid AI framework that extends LLM capability by integrating a retrieval pipeline with generation. Instead of relying only on parametric memory, RAG performs semantic search over external corpora at query time. The system embeds the user query, retrieves top-ranked documents using vector similarity search, and injects them into the prompt as structured context. This design combines parametric reasoning with non-parametric memory, improving factual grounding and reducing reliance on static training data. RAG architectures are widely adopted in enterprise AI systems where data freshness, compliance, and traceability are mandatory.

Core Components of a RAG System

- Embedding Model: Converts queries and documents into dense vector representations that capture semantic meaning.

- Document Preprocessing Pipeline: Cleans, chunks, and normalizes documents to optimize retrieval granularity and recall.

- Vector Database or ANN Index: Stores embeddings and supports approximate nearest neighbor search for low-latency retrieval.

- Retriever and Re-Ranker: Selects and optionally reorders top candidate documents based on semantic similarity and relevance scoring.

- Prompt Construction Layer: Formats retrieved content into structured prompts within context window constraints.

- Generative LLM Engine: Produces final output grounded in retrieved context.

- Monitoring and Evaluation Layer: Tracks retrieval accuracy, hallucination rate, and response relevance through evaluation metrics.

Want to build real-world RAG systems beyond theory? Enroll in the Retrieval-Augmented Generation (RAG) course and learn 100% online at your own pace, get full lifetime access to all content, clear your doubts through a dedicated forum, and strengthen your skills on 4 gamified practice platforms.

What Is LLM?

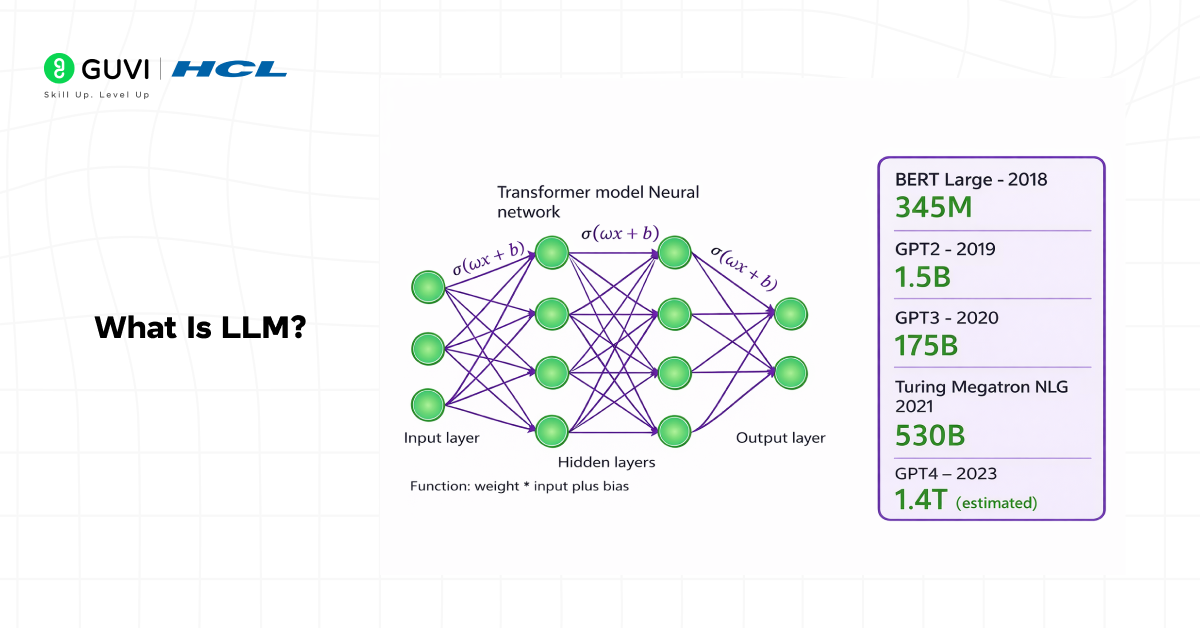

A Large Language Model is a transformer-based deep neural network trained on large-scale text corpora using self-supervised learning objectives such as next-token prediction. It learns high-dimensional representations of language through stacked attention layers, feed-forward networks, and positional encodings. Knowledge is stored in model parameters, often ranging from billions to trillions of weights.

During inference, the model processes tokens within a defined context window and generates output autoregressively. Advanced LLM systems integrate instruction tuning, reinforcement learning from human feedback, tool-calling interfaces, and system-level guardrails to improve reliability and controllability in production environments.

Core Components of an LLM System

- Transformer Backbone: Multi-head self-attention layers that compute contextual relationships between tokens across long sequences.

- Tokenizer and Vocabulary Layer: Subword tokenization algorithms such as Byte Pair Encoding that map raw text into numerical token IDs.

- Pretraining Objective Engine: Self-supervised learning tasks that optimize cross-entropy loss over massive datasets.

- Parameter Store and Model Weights: Learned representations encoding syntax, semantics, and factual associations.

- Context Window Management: Mechanisms that limit the number of tokens processed per inference cycle, affecting reasoning depth.

- Decoding Strategy Module: Sampling controls such as temperature, top-k, and nucleus sampling for output variability.

- Alignment and Safety Layer: Instruction fine-tuning, reward modeling, and policy constraints to regulate output behavior.

- Inference Infrastructure: GPU or TPU-backed serving environment with batching, caching, and latency optimization.

Top Benefits of RAG

1. Improved Factual Accuracy Through Retrieval Grounding

A central benefit of RAG systems is factual grounding. By retrieving relevant documents before generation, the model bases its output on verified content rather than internal statistical associations alone. This reduces hallucination risk and improves reliability in knowledge-intensive applications.

2. Continuous Knowledge Updates Without Retraining

RAG separates knowledge storage from model reasoning. New policies, product updates, or regulatory documents can be indexed into the vector database without modifying model weights. This allows systems to reflect current information without expensive retraining cycles.

3. Controlled Access to Enterprise Data

RAG architectures allow retrieval from approved repositories only. Role-based access controls can restrict which documents are retrievable per user session. This supports governance and prevents unauthorized exposure of sensitive information.

4. Compliance and Audit Traceability

Because outputs are grounded in retrieved content, systems can return source references alongside responses. This improves auditability in regulated sectors such as finance, healthcare, and legal services. Teams can trace which document segments influenced a given answer.

5. Long-Term Operational Cost Efficiency

Retraining large models to update knowledge is computationally expensive. RAG reduces this cost by updating document indexes instead of model parameters. Over time, this decoupled design lowers operational overhead while maintaining knowledge freshness.

Top Benefits of LLM

1. Broad Cognitive Capability

One of the primary benefits of LLMs is their ability to perform multi-task reasoning within a single architecture. Because they are trained on large-scale corpora using next-token prediction, they internalize linguistic structure, reasoning patterns, and cross-domain associations. This allows them to handle translation, summarization, question answering, and code synthesis without task-specific retraining. As a result, organizations can deploy a single model across multiple workflows instead of maintaining separate systems.

2. High Throughput Inference with Simpler Architecture

Another major advantage is architectural simplicity. A standalone LLM requires only model hosting and prompt processing, without retrieval pipelines or indexing layers. This reduces infrastructure components and operational coordination. For high-volume conversational systems, inference can be optimized through batching, quantization, and caching strategies, supporting scalable deployment.

3. Rapid Task Adaptation Through Prompting

LLMs offer strong few-shot and zero-shot performance, meaning new tasks can be introduced through structured prompts rather than retraining. By conditioning the model with examples inside the context window, teams can quickly test new use cases. This shortens experimentation cycles and supports fast iteration in research and product environments.

4. Natural Language Interface for Digital Systems

LLMs serve as a translation layer between structured backend systems and human users. They convert database outputs, analytics dashboards, and API responses into coherent explanations. This reduces dependency on custom rule-based language generation systems and simplifies user interaction design.

5. Flexible Deployment Models

LLMs can be deployed via managed APIs, private cloud clusters, or parameter-efficient fine-tuned variants. Organizations can choose deployment strategies based on data sensitivity, latency constraints, and infrastructure policies. This flexibility supports both startup-scale and enterprise-scale adoption.

Best Use Cases of RAG

1. Enterprise Knowledge Assistants

RAG systems are ideal when employees need accurate responses grounded in internal documentation, HR policies, or operational manuals.

2. Customer Support Automation

They retrieve product manuals, troubleshooting guides, and historical ticket data before generating responses, improving consistency and policy alignment.

3. Legal and Compliance Workflows

RAG supports retrieval of statutes, contract clauses, and regulatory frameworks, producing citation-backed summaries for review and decision support.

4. Financial and Risk Advisory Systems

In advisory contexts where factual correctness is mandatory, RAG grounds outputs in regulatory documents and market reports to reduce error exposure.

5. Large-Scale Document Intelligence

Organizations managing large unstructured repositories use RAG systems to retrieve, synthesize, and summarize information from contracts and research datasets. Each response is grounded in indexed document segments, supporting source-level traceability and audit validation.

Best Use Cases of LLM

1. Creative and Strategic Content Generation

LLMs are well suited for drafting articles, marketing copy, reports, and structured documents where fluency and reasoning matter more than real-time factual precision.

2. Code Assistance and Developer Productivity

They support multi-language code generation, debugging suggestions, and documentation writing. In development workflows where outputs are validated by compilers or human review, LLMs accelerate productivity.

3. General Conversational AI

For chat systems handling broad and non-specialized queries, standalone LLMs provide efficient and scalable interaction without retrieval overhead.

4. Conceptual and Educational Explanations

LLMs perform well when explaining general scientific, technical, or business concepts that do not require access to proprietary documents.

5. Ideation and Scenario Planning

They assist in generating alternative strategies, structured outlines, and exploratory discussions where precision constraints are limited, making generative AI particularly effective for ideation, scenario modeling, and early-stage strategic planning.

How RAG Works: Step-by-Step Architecture

Step 1: Query Embedding

The user query is processed and converted into a dense vector embedding using a trained embedding model. This embedding captures semantic meaning rather than surface-level keyword similarity.

Step 2: Vector Similarity Retrieval

The query embedding is compared against pre-indexed document embeddings stored in a vector database. Approximate nearest neighbor search retrieves the top-k most semantically relevant document chunks.

Step 3: Context Construction and Filtering

Retrieved documents are optionally re-ranked for relevance and filtered according to access control policies. The selected content is structured and formatted to fit within the LLM’s context window constraints.

Step 4: Context Injection into LLM

The original query and retrieved documents are combined into a single augmented prompt. This prompt is passed into the LLM, allowing generation to be conditioned on both parametric knowledge and external content.

Step 5: Grounded Response Generation

The LLM generates the final response using the injected context as primary evidence. If configured, citations are mapped to source documents. Knowledge updates occur by re-indexing documents rather than retraining the model, maintaining separation between reasoning and storage layers.

How LLM Works: Step-by-Step Architecture

Step 1: Tokenization and Embedding

The input prompt is converted into subword tokens using algorithms such as Byte Pair Encoding. Each token is mapped to a numerical ID and transformed into a dense embedding vector. Positional encodings are added to preserve sequence order within the context window.

Step 2: Transformer Layer Processing

The embedded tokens pass through stacked transformer blocks composed of multi-head self-attention and feed-forward networks. Self-attention computes Query, Key, and Value projections to model contextual relationships across tokens. Residual connections and layer normalization stabilize signal propagation across layers.

Step 3: Contextual Representation Formation

After traversing multiple transformer layers, each token embedding becomes context-aware, encoding semantic and syntactic relationships across the entire input sequence. This final hidden state represents the model’s internal understanding of the prompt.

Step 4: Probability Distribution Generation

The contextual representation of the final token is projected into vocabulary space through a linear transformation. A softmax function converts logits into a probability distribution over possible next tokens.

Step 5: Autoregressive Decoding

A decoding strategy such as greedy selection or nucleus sampling selects the next token. The token is appended to the sequence, and the process repeats iteratively until a termination condition is met. The final output is produced entirely from parametric knowledge stored in model weights.

Want to build practical skills with large language models and apply them to real-world problems? Enroll in the LLMs and Their Applications course to learn core concepts, hands-on workflows, and model deployment techniques through 100% online, self-paced learning with full lifetime access, dedicated forum support, and 4 gamified practice platforms.

Difference Between RAG and LLM: Side-by-Side Comparison

- Architecture Differences

- LLM: Standalone generative model

A Large Language Model operates as a parametric system. Knowledge is encoded within model weights during pretraining and fine-tuning. At inference time, the model predicts the next token based solely on its internal parameters and the prompt context window. There is no external memory lookup unless explicitly engineered.

This architecture is computationally efficient at runtime because it requires only model inference. However, knowledge updates require retraining, fine-tuning, or adapter-based modification. The model functions as a closed knowledge system.

- RAG: Retrieval layer plus generative model

Retrieval Augmented Generation introduces a non parametric memory layer. The system first converts the user query into embeddings, performs similarity search in a vector database, retrieves top relevant documents, and injects them into the LLM prompt.

The architecture consists of:

- Embedding model for semantic indexing

- Vector database such as FAISS, Pinecone, or Weaviate

- Retriever and ranking mechanism

- Prompt orchestration layer

- Generative LLM

This separation between storage and generation allows knowledge to be updated independently of the base model.

- Knowledge Source

- LLM: Pre-trained static knowledge

LLMs rely on data seen during training. Their knowledge cutoff is fixed at the time of model training. They cannot access new policies, recent research, internal company documents, or real-time data without integration layers.

This makes them strong at general reasoning but limited for domain specific or time sensitive applications.

- RAG: External, updatable knowledge base

RAG systems retrieve information from structured or unstructured enterprise data sources such as PDFs, policy manuals, databases, or APIs. The knowledge base can be updated continuously without retraining the model.

This architecture supports rapid knowledge refresh cycles. In regulated sectors, versioned document storage also improves audit traceability.

- Accuracy and Hallucination

- LLM: Higher hallucination risk

LLMs generate text based on statistical likelihood rather than verified retrieval. When prompts request information outside training distribution, models may produce confident but incorrect outputs.

This behavior is well documented in academic evaluations of large generative models. Hallucination risk increases when tasks require precise factual grounding.

- RAG: Grounded in retrieved documents

RAG constrains the model by supplying relevant source documents before generation. The model generates responses based on provided context rather than pure parametric recall.

Empirical studies show retrieval augmented systems reduce hallucination rates in question answering benchmarks. When combined with citation output, RAG improves transparency and factual accountability. Performance depends on retrieval precision, chunking strategy, and embedding quality.

5. Enterprise Readiness

- LLM: Requires alignment layers

For enterprise deployment, standalone LLMs require additional guardrails such as:

- Prompt engineering constraints

- Output validation layers

- fine-tuning for domain tone

- Human review loops

Without retrieval grounding, traceability is limited.

- RAG: Direct integration with enterprise data

RAG architectures integrate directly with internal repositories, CRM systems, policy databases, and document stores. This supports:

- Policy consistent responses

- Controlled data exposure

- Source level traceability

- Governance aligned output

For sectors such as healthcare, finance, and legal operations, document grounding supports compliance requirements.

Curious how Retrieval-Augmented Generation (RAG) stacks up against traditional large language models? Master both theoretical foundations and practical AI workflows with HCL GUVI’s Artificial Intelligence & Machine Learning Course; dive deep into transformer architectures, RAG pipelines, evaluation metrics, and real-world applications across search, QA, and knowledge-centric systems.

RAG vs LLM: Key Differences at a Glance

| Key Factor | LLM | RAG |

| Architecture | Standalone generative model | Retrieval layer plus generative model |

| Knowledge Source | Pre-trained, static model weights | External, updatable knowledge base |

| Memory Type | Parametric memory | Parametric plus non-parametric memory |

| Data Freshness | Fixed at training cutoff | Real-time or regularly updated |

| Hallucination Risk | Higher for factual queries | Lower due to document grounding |

| Traceability | Limited source visibility | Supports citations and source attribution |

| Enterprise Readiness | Needs fine-tuning and guardrails | Direct integration with internal data |

| Maintenance | Retraining required for knowledge updates | Update documents without retraining |

| Best Use Cases | Creative writing, coding, general chat | Customer support, compliance, enterprise search |

| Operational Complexity | Model hosting only | Embeddings, vector database, retrieval layer |

Conclusion

In conclusion, the difference between RAG and LLM lies in how knowledge is stored, accessed, and governed. LLMs rely on parametric memory to deliver broad reasoning and generative capability, while RAG extends this foundation with retrieval-based grounding for factual accuracy and compliance alignment. The right choice depends on data volatility, regulatory exposure, and tolerance for error. Production AI systems increasingly combine both to balance intelligence, reliability, and operational efficiency.

FAQs

1. Is RAG more accurate than an LLM?

Yes, for knowledge-intensive tasks. RAG retrieves relevant documents before generation, reducing hallucinations and improving auditability. Accuracy depends on retrieval quality.

2. Does RAG eliminate hallucinations?

No. It reduces them by grounding outputs in retrieved data, especially when citations are used.

3. Is RAG more expensive?

It adds infrastructure such as embeddings and vector databases, but avoids frequent retraining. Cost depends on scale and latency.

4. RAG vs fine-tuning?

Fine-tuning adjusts model behavior. RAG injects external knowledge at inference. Many production systems combine both.

Did you enjoy this article?