Building an AI Chatbot with Rasa and Ollama: Exploring the Process

Last Updated: Mar 10, 2026 · 4 Min Read

AI chatbots have become increasingly significant in contemporary applications. Chatbots are transforming online communication by enabling quicker, smarter digital interactions that respond to customer queries and help users find information faster. As the field of artificial intelligence (AI) continues to develop, developers can now create more sophisticated chatbots that understand natural language and provide more precise answers.

Combining Rasa and Ollama is one of the most practical ways to build such a chatbot. Rasa handles intent recognition and dialogue management, while Ollama lets you run large language models (LLMs) locally on your own machine. In this blog, you will learn how these two tools work together, and you will follow a basic step-by-step process to create your own AI chatbot.

Quick Answer:

You can build an AI chatbot with Rasa and Ollama by setting up the environment, creating a Rasa project, connecting it with Ollama’s local AI model, and running the chatbot to handle user conversations.

Table of contents

- Rasa and Ollama: Powering AI Chatbots Together

- Rasa

- Ollama

- How Rasa & Ollama Are Used to Develop Your Own AI Chatbot

- How to Prepare the Environment Setup

- Create a Virtual Environment

- Activate the Virtual Environment

- Install Rasa and Rasa SDK

- Download and Install Ollama

- Pull the Llama 3 Model

- Run the Llama 3 Model

- Initialize the Rasa Project

- Step-by-Step Implementation Guide

- Step 1: Define Intents and Responses

- Step 2: Create a Custom Action to Call Llama 3

- Step 3: Update domain.yml for Custom Action

- Step 4: Train Your Rasa Model

- Step 5: Run Rasa Action Server

- Step 6: Run the Rasa Chatbot

- Conclusion

- FAQs

- What is the role of Rasa in building an AI chatbot?

- Why is Ollama used with Rasa?

- Do you need coding knowledge to build a chatbot with Rasa and Ollama?

Rasa and Ollama: Powering AI Chatbots Together

Rasa

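Rasa is an open-source framework for building conversational AI assistants. Its natural language understanding (NLU) component classifies user intents and extracts entities, its dialogue management decides what the bot should do next, and custom actions written in Python let it call external code and services. Developers use it to build systems that understand natural language and make decisions based on user input.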

Ollama

Ollama is a tool that lets you run large language models (LLMs) such as Llama 3 locally on your own computer. Because the models run on your hardware instead of a cloud service, developers keep control over performance, privacy, and customization.

How Rasa & Ollama Are Used to Develop Your Own AI Chatbot

- To create a fully functional chatbot, Rasa and Ollama work together: Rasa manages the conversation, and Ollama supplies the language model that generates free-form answers.

- First, Rasa receives the user input, identifies the user's intent, extracts useful details (entities), and uses its dialogue management system to decide on the next action.

- When that action is the custom LLM action, Rasa's action server forwards the user message to Ollama, which runs the Large Language Model (LLM) locally.

- The LLM interprets the input, produces a meaningful, context-sensitive response, and returns it to Rasa.

- Finally, Rasa sends this answer to the user through the chat interface.
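The routing decision described above can be illustrated with a deliberately simplified, pure-Python sketch. It is not how Rasa works internally: the hypothetical `classify_intent` uses exact keyword matching in place of Rasa's trained NLU model, and `generate` is any function standing in for the Llama 3 call through Ollama. It only shows when a predefined response is used and when the message is handed to the LLM.

```python
from typing import Callable, Dict, Optional, Set

# Toy stand-in for Rasa NLU: known example phrases per intent (hypothetical data).
INTENT_EXAMPLES: Dict[str, Set[str]] = {
    "greet": {"hi", "hello", "hey"},
}

# Canned replies, playing the role of the responses in domain.yml.
RESPONSES: Dict[str, str] = {
    "greet": "Hello! How can I help you today?",
}


def classify_intent(message: str) -> Optional[str]:
    """Return the intent whose examples contain the message, or None."""
    normalized = message.strip().lower()
    for intent, examples in INTENT_EXAMPLES.items():
        if normalized in examples:
            return intent
    return None


def handle_message(message: str, generate: Callable[[str], str]) -> str:
    """Reply from RESPONSES for known intents; otherwise ask the LLM."""
    intent = classify_intent(message)
    if intent is not None and intent in RESPONSES:
        return RESPONSES[intent]
    # No canned reply: forward the raw text to the model (Ollama in this guide).
    return generate(message)


if __name__ == "__main__":
    def fake_llm(text: str) -> str:
        # Placeholder for a real call to Llama 3 through Ollama.
        return f"[LLM reply to: {text}]"

    print(handle_message("Hello", fake_llm))          # canned greeting
    print(handle_message("What is Rasa?", fake_llm))  # forwarded to the "LLM"
```

In the real chatbot, Rasa's trained model replaces the keyword lookup, and the "forward to the LLM" branch is the custom action built in Step 2.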

Note:

This guide uses Meta's Llama 3 model, served locally by Ollama, to generate AI responses.


The first chatbot, ELIZA, was created in 1966 by Joseph Weizenbaum at MIT.

How to Prepare the Environment Setup

The following steps are required to set up the AI chatbot project environment:

1. Create a Virtual Environment

```bash
python -m venv bot-env
```

- Creates an isolated virtual Python environment named bot-env.

- Keeps project dependencies separate from your system Python.

- Ensures Rasa and other packages won’t conflict with other projects.

2. Activate the Virtual Environment

Windows:

```
bot-env\Scripts\activate
```

macOS/Linux:

```bash
source bot-env/bin/activate
```

- Switches your terminal to use the virtual environment.

- All Python commands and pip install will now affect only this project.

- The terminal prompt changes to show (bot-env) to indicate activation.
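To confirm activation from Python itself (for example, before installing packages), a small check like the following can help. It relies on the standard-library behavior that `sys.prefix` differs from `sys.base_prefix` inside a virtual environment; the file name `check_env.py` and the function name are only examples.

```python
import sys


def in_virtualenv() -> bool:
    """True when the running interpreter belongs to a venv such as bot-env."""
    # Inside a venv, sys.prefix points at the environment directory,
    # while sys.base_prefix still points at the base Python installation.
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    status = "active" if in_virtualenv() else "NOT active"
    print(f"Virtual environment {status}: {sys.prefix}")
```

Running `python check_env.py` in the activated terminal should report the path of your bot-env folder.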

3. Install Rasa and Rasa SDK

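```bash
pip install rasa rasa-sdk
```

- Installs Rasa, the main framework for building AI chatbots.

- Installs the Rasa SDK, which lets you write custom actions and logic for your chatbot in Python (pip would also install it automatically as a dependency of rasa).

- Prepares your virtual environment so your chatbot project can run and interact with the AI model.

- Rasa supports only specific Python versions (Python 3.8 to 3.10 for the Rasa Open Source 3.6 releases), so if the installation fails, check the Rasa installation docs and recreate bot-env with a supported Python version.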
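After installation, you can confirm which versions landed in bot-env using the standard `importlib.metadata` module. The helper name `installed_versions` is hypothetical; `rasa` and `rasa-sdk` are the package names from the pip command above.

```python
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if missing."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(["rasa", "rasa-sdk"]).items():
        print(f"{name}: {version or 'not installed'}")
```

Run it with the virtual environment active; if either package shows "not installed", pip probably ran against a different Python interpreter.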

4. Download and Install Ollama

- Download the installer for your OS (Windows, macOS, or Linux) from the official Ollama website, ollama.com.

- Ollama provides the LLM platform that runs AI models like Llama 3.

- This step enables your chatbot to generate intelligent responses.
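Before moving on, it is worth checking that the installer put the `ollama` command on your PATH, because the custom action in Step 2 calls it by name. This sketch uses the standard `shutil.which`; the function name is illustrative.

```python
import shutil
from typing import Optional


def find_ollama(executable: str = "ollama") -> Optional[str]:
    """Return the full path of the Ollama CLI, or None if it is not on PATH."""
    return shutil.which(executable)


if __name__ == "__main__":
    path = find_ollama()
    if path is None:
        print("Ollama not found on PATH; install it and open a new terminal.")
    else:
        print(f"Ollama CLI found at {path}")
```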

5. Pull the Llama 3 Model

```bash
ollama pull llama3
```

- Downloads the Llama 3 model (several gigabytes) to your computer.

- The model will be used to generate AI responses for your chatbot.

- This only needs to be done once per machine.
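To confirm the pull succeeded, you can parse the output of `ollama list`, which prints a table of local models with the NAME column first. The sketch assumes that layout (one header row, then one model per line); the helper names are hypothetical.

```python
import subprocess
from typing import List


def list_local_models() -> str:
    """Run `ollama list` and return its raw text output."""
    result = subprocess.run(["ollama", "list"], capture_output=True,
                            text=True, check=True)
    return result.stdout


def parse_model_names(list_output: str) -> List[str]:
    """Return the NAME column of `ollama list` output (header row skipped)."""
    names: List[str] = []
    for line in list_output.splitlines()[1:]:
        columns = line.split()
        if columns:
            names.append(columns[0])
    return names


def has_model(names: List[str], model: str = "llama3") -> bool:
    """True if `model` is present, with or without a tag such as ':latest'."""
    return any(name == model or name.startswith(model + ":") for name in names)
```

For example, `has_model(parse_model_names(list_local_models()))` should return `True` once `ollama pull llama3` has finished. Matching on `model + ":"` keeps related models such as `llama3.1` from counting as `llama3`.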

6. Run the Llama 3 Model

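```bash
ollama run llama3
```

- Starts an interactive Llama 3 chat session in the terminal, which confirms the model works and loads it into memory (type /bye to exit).

- Requests from Rasa are actually handled by the Ollama server, which the Ollama app or service normally runs in the background; you can also start it manually with ollama serve.

- Keep Ollama running (for example, in a separate terminal) while you test your chatbot.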
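Under the hood, the Ollama server exposes a local REST API, by default at `http://localhost:11434`. As an alternative to shelling out to `ollama run`, a custom action could call its `/api/generate` endpoint directly. The sketch below uses only the standard library; the function names are illustrative, and setting `stream` to false asks Ollama to return a single JSON object whose `response` field holds the full reply.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-off text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming /api/generate request for the given prompt."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        OLLAMA_URL,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3", timeout: float = 120.0) -> str:
    """Send the prompt to the local Ollama server and return the reply text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.load(resp)
    return body["response"].strip()
```

With Ollama running, `generate("Say hello in one short sentence.")` returns the model's reply as a string. Calling the HTTP API avoids starting a new process per message, but the subprocess approach in Step 2 works as well.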

7. Initialize the Rasa Project

```bash
rasa init --no-prompt
```

- Creates a new Rasa project with all default folders and files in the current directory.

- Includes domain.yml, config.yml, the data/ folder, actions/actions.py, and other essential files.

- --no-prompt skips the interactive questions and creates the project with default settings.

Note:

After running rasa init --no-prompt, Rasa creates a project with all the necessary files and folders for your chatbot:

- data/ folder – the training data:

- nlu.yml → example user messages labeled with intents, which teach the bot to understand input

- rules.yml → fixed conversation patterns the bot should always follow

- stories.yml → example conversations the dialogue model learns from

- domain.yml – lists the intents, responses, slots, and actions the bot knows about.

- config.yml – configures the NLU pipeline and dialogue policies.

- actions/actions.py – where you write your own Python custom actions.

- endpoints.yml – URLs of external services, including the action server (action_endpoint) that custom actions need.

- credentials.yml – credentials for chat channels such as Slack or Telegram.

- tests/ folder – test stories used to check the bot's responses.
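To double-check the generated layout, the following sketch lists which expected files are missing. `EXPECTED_FILES` reflects the default Rasa 3.x project described above and is an assumption; adjust it if your Rasa version generates a different layout.

```python
from pathlib import Path
from typing import List

# Files a default Rasa 3.x project is assumed to contain after `rasa init`.
EXPECTED_FILES: List[str] = [
    "config.yml",
    "domain.yml",
    "credentials.yml",
    "endpoints.yml",
    "data/nlu.yml",
    "data/rules.yml",
    "data/stories.yml",
    "actions/actions.py",
]


def missing_files(project_dir: str = ".") -> List[str]:
    """Return the expected project files that do not exist in project_dir."""
    root = Path(project_dir)
    return [name for name in EXPECTED_FILES if not (root / name).is_file()]


if __name__ == "__main__":
    print("Missing files:", missing_files("."))
```

Run from the project folder right after `rasa init`, it should report an empty list.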

Step-by-Step Implementation Guide

Follow these steps carefully to quickly get your chatbot running and integrated with the Llama 3 model:

Step 1: Define Intents and Responses

Update data/nlu.yml with the user intents and domain.yml with the bot's responses. This tells your bot what users might say and how it should reply.

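nlu.yml:

```yaml
version: "3.1"

nlu:
- intent: greet
  examples: |
    - hi
    - hello
    - hey
```

domain.yml:

```yaml
responses:
  utter_greet:
  - text: "Hello! How can I help you today?"
```

This trains the bot to recognize greetings and respond appropriately. rasa init already generates a greet intent and an utter_greet response, so extend those existing entries instead of adding duplicates.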

Step 2: Create a Custom Action to Call Llama 3

Edit actions/actions.py to define a custom action that sends the user's message to Llama 3 and returns the generated reply.

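```python
import subprocess
from typing import Any, Dict, List, Text

from rasa_sdk import Action, Tracker
from rasa_sdk.executor import CollectingDispatcher


class ActionCallLlama3(Action):
    def name(self) -> Text:
        # Must match the action name listed in domain.yml
        return "action_call_llama3"

    def run(self, dispatcher: CollectingDispatcher, tracker: Tracker,
            domain: Dict[Text, Any]) -> List[Dict[Text, Any]]:
        # Text of the user's latest message
        user_message = tracker.latest_message.get("text", "")
        try:
            # Ask Llama 3 via the Ollama CLI (one-shot, non-interactive)
            result = subprocess.run(
                ["ollama", "run", "llama3", user_message],
                capture_output=True, text=True, timeout=120, check=True,
            )
            reply = result.stdout.strip()
        except (subprocess.SubprocessError, OSError):  # missing CLI, error, or timeout
            reply = "Sorry, I couldn't reach the language model right now."
        dispatcher.utter_message(text=reply)
        return []
```

This lets your bot generate AI replies with Llama 3 instead of relying only on predefined responses.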
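The action above sends only the latest message, so Llama 3 sees no conversation history. One optional refinement, sketched here as an assumption rather than part of the original setup, is a hypothetical `build_prompt` helper that turns the `user` and `bot` events in `tracker.events` (a list of event dictionaries) into a short transcript for the model.

```python
from typing import Any, Dict, List


def build_prompt(events: List[Dict[str, Any]], max_lines: int = 6) -> str:
    """Turn recent user/bot events from tracker.events into a short transcript."""
    lines: List[str] = []
    for event in events:
        text = event.get("text")
        if not text:
            continue  # skip non-message events such as actions or slot updates
        if event.get("event") == "user":
            lines.append(f"User: {text}")
        elif event.get("event") == "bot":
            lines.append(f"Assistant: {text}")
    # Keep only the most recent lines so the prompt stays small.
    recent = lines[-max_lines:] if max_lines > 0 else []
    # End with an open "Assistant:" turn for the model to complete.
    return "\n".join(recent + ["Assistant:"])
```

Inside `run`, you would pass `build_prompt(tracker.events)` to `ollama run` instead of `user_message`. The latest user message is already part of `tracker.events` when the action runs, so it becomes the last `User:` line.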

Step 3: Update domain.yml for Custom Action

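Tell Rasa about your new action in domain.yml:

```yaml
actions:
  - action_call_llama3
```

This makes Rasa aware of the custom action so it can call it during conversations. For Rasa to actually run it, at least one rule or story in your training data must reference action_call_llama3 (for example, a rule that triggers it after a particular intent).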

Step 4: Train Your Rasa Model

Run this in your terminal:

```bash
rasa train
```

Rasa reads your training data (nlu.yml, rules.yml, stories.yml) together with domain.yml and config.yml, and builds a model that understands user messages and responds with predefined responses or your custom Llama 3 action.

Step 5: Run Rasa Action Server

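Start the server that handles custom actions in its own terminal:

```bash
rasa run actions
```

The action server (port 5055 by default) runs your custom Llama 3 action whenever Rasa calls it. Make sure the action_endpoint section in endpoints.yml is uncommented and points to http://localhost:5055/webhook; otherwise Rasa cannot reach the action server.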
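If the bot fails when it tries the custom action, first check that the action server is reachable. Recent Rasa SDK action servers answer `GET /health` with a small JSON status; the sketch below assumes the default port 5055 and treats any connection or parsing failure as "not running". The function name is illustrative.

```python
import json
import urllib.request
from urllib.error import URLError

# Default address of `rasa run actions`.
ACTION_SERVER_URL = "http://localhost:5055"


def action_server_ok(base_url: str = ACTION_SERVER_URL, timeout: float = 5.0) -> bool:
    """Return True if the action server's /health endpoint reports status "ok"."""
    try:
        with urllib.request.urlopen(f"{base_url}/health", timeout=timeout) as response:
            return json.load(response).get("status") == "ok"
    except (URLError, OSError, ValueError):
        # Connection refused, timeout, or a non-JSON reply: treat as not running.
        return False


if __name__ == "__main__":
    print("Action server running:", action_server_ok())
```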

Step 6: Run the Rasa Chatbot

Start the bot itself in another terminal:

```bash
rasa shell
```

Now you can chat with your bot. Rasa will process user input, decide on a response, and call Llama 3 if needed.

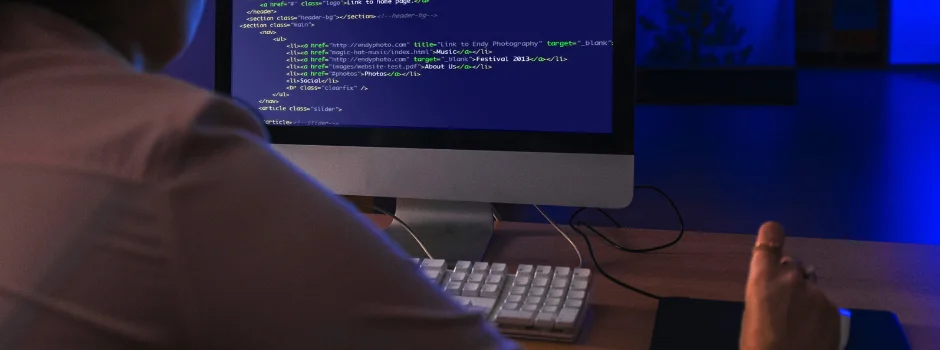

This is how the live AI chatbot looks in the terminal – refer to the screenshot below:
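rasa shell is the quickest way to test, but other programs usually talk to Rasa over HTTP. If you start the bot with `rasa run` instead, the REST channel (enabled by the `rest:` entry that rasa init places in credentials.yml) accepts messages at `/webhooks/rest/webhook` on port 5005 by default. A minimal standard-library client might look like this; the sender ID `test_user` is arbitrary and the function names are hypothetical.

```python
import json
import urllib.request
from typing import Any, Dict, List

# Default REST channel URL of `rasa run` (port 5005).
REST_URL = "http://localhost:5005/webhooks/rest/webhook"


def reply_texts(messages: List[Dict[str, Any]]) -> List[str]:
    """Keep the text of each bot message, ignoring messages without text."""
    return [m["text"] for m in messages if "text" in m]


def send_message(message: str, sender: str = "test_user") -> List[str]:
    """Send one user message to the running bot and return its text replies."""
    payload = json.dumps({"sender": sender, "message": message}).encode("utf-8")
    request = urllib.request.Request(
        REST_URL, data=payload, headers={"Content-Type": "application/json"}
    )
    # The reply may be slow, since it can involve a Llama 3 call.
    with urllib.request.urlopen(request, timeout=120) as response:
        return reply_texts(json.load(response))
```

With `rasa run` and the action server both running, `send_message("hi")` should return a list containing the bot's greeting.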


Conclusion

Rasa and Ollama together make it practical to build intelligent, efficient conversational systems. By combining Rasa's conversation management with Ollama's locally hosted language models, developers can create responsive chatbots that understand what users need while keeping data on their own machines. Once the environment is configured and the steps above are followed, extending the chatbot with new intents, rules, and actions becomes much easier.

FAQs

What is the role of Rasa in building an AI chatbot?

Rasa manages conversations and understands messages. It helps the chatbot detect intent and respond in a structured way.

Why is Ollama used with Rasa?

Ollama lets developers run AI models locally. Combined with Rasa, it helps the AI chatbot generate smarter, more natural responses.

Do you need coding knowledge to build a chatbot with Rasa and Ollama?

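Some coding knowledge helps. Custom actions are written in Python and the training data lives in YAML files, so basic Python skills and familiarity with chatbot concepts such as intents make setup, connecting Ollama, and managing responses much easier.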
