Beyond Accuracy: Why the AUC-ROC Curve Is Your Model’s Real Report Card

Last updated: May 04, 2026

Imagine you build a machine learning model to detect cancer, and it gives you 95% accuracy. At first glance, that sounds amazing. But what if 95% of your data consists of healthy patients? That means your model could simply predict “no cancer” for everyone and still achieve 95% accuracy, while missing every single cancer case.

This is exactly why accuracy can sometimes be misleading, especially in real-world problems where data is not balanced.
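The scenario above can be reproduced in a few lines. This is a minimal sketch on made-up labels (950 healthy and 50 cancer patients, counts chosen only to match the 95% figure) with a hypothetical "model" that predicts the majority class for everyone.

```python
import numpy as np
from sklearn.metrics import accuracy_score, recall_score, roc_auc_score

# Hypothetical labels: 950 healthy patients (0) and 50 cancer patients (1)
y_true = np.array([0] * 950 + [1] * 50)

# A "model" that predicts "no cancer" for everyone
y_pred = np.zeros_like(y_true)
# It gives every patient the same score, so it cannot rank anyone
y_score = np.zeros(len(y_true), dtype=float)

accuracy = accuracy_score(y_true, y_pred)    # share of correct predictions
recall = recall_score(y_true, y_pred)        # share of cancer cases found
auc_value = roc_auc_score(y_true, y_score)   # ranking quality

print(f"Accuracy: {accuracy:.2f}")   # 0.95
print(f"Recall:   {recall:.2f}")     # 0.00
print(f"AUC:      {auc_value:.2f}")  # 0.50
```

Accuracy looks excellent, yet the model finds no cancer cases and its AUC is no better than random guessing.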

In classification problems, our goal is not just to predict correctly, but to understand how well the model separates different classes. That’s where the AUC-ROC curve becomes important.

The AUC-ROC curve is a method used in machine learning to measure how well a model can distinguish between two classes (like yes/no or spam/not spam) across different decision thresholds.

In this blog, we will go through the AUC-ROC curve in a simple way, with examples, so that even if you’re a beginner, you can confidently understand and use it.

TL;DR Summary

- This blog explains AUC, ROC, and AUC-ROC in a very simple way using real-life examples, making it easy for beginners to grasp model evaluation beyond accuracy.

- It explains why accuracy can be misleading and shows how AUC-ROC addresses real-world problems such as fraud detection and medical testing in classification models.

- It builds a clear intuition about how models work by explaining thresholds, TPR, and FPR, helping you understand how predictions change across different situations.

- It connects theory with practice through a step-by-step Python implementation, so you can directly learn how to use AUC-ROC in real machine learning projects.

The ROC curve was originally developed in signal detection theory during World War II for analyzing radar signals.

Table of contents

- What is ROC

- Real-world example: Disease testing

- What is AUC

- Real-world example: Hiring candidates

- What is an AUC-ROC Curve

- Real-world example: Bank fraud detection

- Real-world example: Email spam filter

- AUC-ROC Curve in Python: Step-by-Step Implementation Using Sklearn

- Output:

- Code Explanation:

- Conclusion

- FAQs

- What does the AUC-ROC curve measure?

- What does an AUC of 0.5 mean?

- When should I not use ROC-AUC?

What is ROC

ROC stands for Receiver Operating Characteristic. The ROC curve is a way to assess how well a classification model separates two classes, such as yes/no problems.

In real life, models don’t just say “yes” or “no”. They give a probability or score. Then we decide on a threshold:

- Above this score → positive (yes)

- Below this score → negative (no)

Now the problem is that if we change this threshold, the results change as well.

ROC helps us understand this trade-off.

It uses two important measures:

True Positive Rate (TPR):

Out of all actual positive cases, how many did we correctly find?

False Positive Rate (FPR):

Out of all actual negative cases, how many did we wrongly mark as positive?

So ROC is basically answering:

“If I change the strictness of my model, how do correct detections and mistakes change?”
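In terms of confusion-matrix counts, TPR = TP / (TP + FN) and FPR = FP / (FP + TN). The sketch below computes both at a few thresholds. The labels, the scores, and the helper name `tpr_fpr` are made up for illustration.

```python
import numpy as np

def tpr_fpr(y_true, y_score, threshold):
    """Return (TPR, FPR) when scores >= threshold are labelled positive."""
    y_true = np.asarray(y_true)
    y_pred = (np.asarray(y_score) >= threshold).astype(int)
    tp = np.sum((y_pred == 1) & (y_true == 1))  # positives correctly found
    fn = np.sum((y_pred == 0) & (y_true == 1))  # positives missed
    fp = np.sum((y_pred == 1) & (y_true == 0))  # negatives wrongly flagged
    tn = np.sum((y_pred == 0) & (y_true == 0))  # negatives correctly passed
    return tp / (tp + fn), fp / (fp + tn)

# Made-up labels and scores, purely for illustration
y_true = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1]
y_score = [0.1, 0.2, 0.3, 0.4, 0.45, 0.6, 0.65, 0.7, 0.8, 0.9]

for threshold in (0.25, 0.5, 0.75):
    tpr, fpr = tpr_fpr(y_true, y_score, threshold)
    print(f"threshold={threshold:.2f}  TPR={tpr:.2f}  FPR={fpr:.2f}")
# threshold=0.25  TPR=1.00  FPR=0.60
# threshold=0.50  TPR=0.80  FPR=0.20
# threshold=0.75  TPR=0.40  FPR=0.00
```

Raising the threshold lowers both rates: fewer false alarms, but also fewer positives caught. Each (FPR, TPR) pair is one point on the ROC curve.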

Real-world example: Disease testing

Imagine a medical test for detecting cancer:

- If the test correctly identifies a patient who actually has cancer → True Positive

- If the test says a healthy person has cancer → False Positive

Now:

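- If doctors make the test very strict, flagging even mildly suspicious results (a low threshold) → it will catch most cancer cases, but may create false alarms in healthy people

- If they make it less strict, flagging only clear-cut results (a high threshold) → fewer false alarms, but some cancer cases might be missed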

ROC helps visualise this balance.


What is AUC

AUC stands for Area Under the Curve.

ROC gives a full curve, but in real work, comparing curves is difficult. So AUC converts that curve into a single number.

Now the important idea:

AUC indicates how well the model separates positive cases from negative cases.

Think of it like ranking ability.

Real-world example: Hiring candidates

Imagine a company trying to select good employees:

- Positive case = good candidate

- Negative case = bad candidate

The model gives a score to each candidate.

Now:

- A good model will usually give higher scores to good candidates than to bad ones (a perfect model always does)

- A weak model will mix them up

So AUC is checking:

“If I randomly pick one good and one bad candidate, how often does my model rank the good one higher?”

That’s why:

- AUC = 1 → perfect separation

- AUC = 0.5 → random guessing

- AUC < 0.5 → worse than random

So AUC is not about exact answers — it is about ranking quality.
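The "pick one good and one bad candidate" idea can be checked directly. In this sketch, the helper `pairwise_auc` and the candidate scores are hypothetical. It counts how often a positive outscores a negative, with ties counted as half, and compares the result with scikit-learn's `roc_auc_score`.

```python
import numpy as np
from sklearn.metrics import roc_auc_score

def pairwise_auc(y_true, y_score):
    """Fraction of (positive, negative) pairs where the positive scores higher.

    Ties count as half a win.
    """
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score, dtype=float)
    pos = y_score[y_true == 1]
    neg = y_score[y_true == 0]
    # Compare every positive score with every negative score
    diff = pos[:, None] - neg[None, :]
    wins = np.sum(diff > 0) + 0.5 * np.sum(diff == 0)
    return wins / (len(pos) * len(neg))

# Hypothetical candidates: 1 = good, 0 = bad, with made-up model scores
y_true = [1, 1, 1, 0, 0, 0, 0]
y_score = [0.9, 0.7, 0.4, 0.8, 0.3, 0.2, 0.1]

manual = pairwise_auc(y_true, y_score)
library = roc_auc_score(y_true, y_score)
print(f"Pairwise ranking estimate: {manual:.3f}")   # 0.833
print(f"roc_auc_score:             {library:.3f}")  # 0.833
```

Out of 12 possible good/bad pairs, the good candidate is ranked higher in 10, which gives 10/12 ≈ 0.833. The only mistakes come from the bad candidate who scored 0.8.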

What is an AUC-ROC Curve

AUC-ROC combines both ideas:

- ROC shows performance at different thresholds (graph)

- AUC gives one final score from that graph

So ROC is the full behaviour of the model.

And AUC is the summary of that behaviour.
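To see the two pieces fit together, the sketch below builds the ROC points with `roc_curve` and then measures the area under them with `sklearn.metrics.auc`, which applies the trapezoidal rule. The four labels and scores are made up for illustration.

```python
from sklearn.metrics import auc, roc_auc_score, roc_curve

# Made-up labels and scores, purely for illustration
y_true = [0, 0, 1, 1]
y_score = [0.1, 0.4, 0.35, 0.8]

# ROC: one (FPR, TPR) point per threshold
fpr, tpr, thresholds = roc_curve(y_true, y_score)

# AUC: area under those points, computed with the trapezoidal rule
area = auc(fpr, tpr)

print("FPR:", fpr)
print("TPR:", tpr)
print(f"Area under the curve: {area:.2f}")                            # 0.75
print(f"roc_auc_score:        {roc_auc_score(y_true, y_score):.2f}")  # 0.75
```

Both routes give the same number, because `roc_auc_score` is simply the area under the curve that `roc_curve` describes.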

Real-world example: Bank fraud detection

Imagine a bank system detecting fraudulent transactions:

- Normal transactions = 99%

- Fraud transactions = 1%

Now:

- If the model predicts “not fraud” for everything → it gets about 99% accuracy, but it is useless because it does not catch any fraud at all

Now ROC helps the bank see:

“If we change the decision threshold, how many fraud cases are we catching vs how many false alerts are we creating?”

AUC then tells:

“How good is this model overall at separating fraud from normal transactions?”

Real-world example: Email spam filter

- Strict filter → catches more spam but may block important emails

- Loose filter → allows important emails but lets spam pass

ROC shows all these different behaviours.

AUC tells the overall quality of the filter in one number.

AUC-ROC Curve in Python: Step-by-Step Implementation Using Sklearn

```python
from sklearn.metrics import roc_curve, roc_auc_score
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.datasets import make_classification
import matplotlib.pyplot as plt

# Create dataset
X, y = make_classification(n_samples=1000, random_state=42)

# Split data (random_state makes the split, and so the AUC, reproducible)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42
)

# Train model
model = LogisticRegression()
model.fit(X_train, y_train)

# Get probability predictions for the positive class
y_probs = model.predict_proba(X_test)[:, 1]

# Calculate ROC values
fpr, tpr, thresholds = roc_curve(y_test, y_probs)

# Calculate AUC score
auc_score = roc_auc_score(y_test, y_probs)

# Plot graph
plt.plot(fpr, tpr, label=f"AUC = {auc_score:.2f}")
plt.plot([0, 1], [0, 1], linestyle='--', label="Random guess")
plt.xlabel("False Positive Rate")
plt.ylabel("True Positive Rate")
plt.title("ROC Curve")
plt.legend()
plt.show()
```

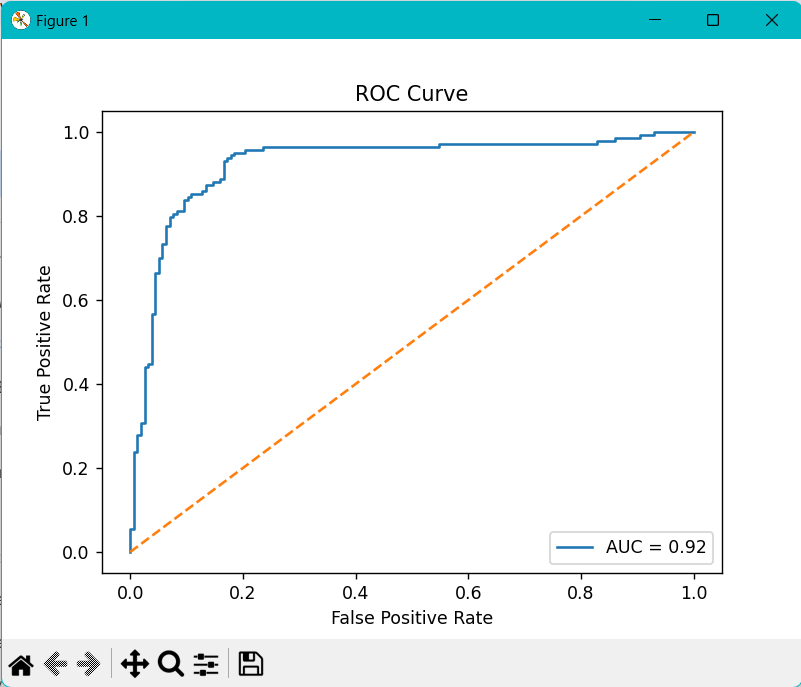

Output:

Code Explanation:

1. Importing Libraries

- First, we import all the required libraries. We use roc_curve and roc_auc_score from scikit-learn to compute ROC and AUC. We also use LogisticRegression as our model, train_test_split to divide the data, and make_classification to create a sample dataset.

- Finally, we use matplotlib.pyplot to plot the ROC curve. Together, these libraries help us build a model, evaluate it, and visualise the results.

2. Creating a Dataset

- Next, we create a dataset using make_classification. This function generates a simple synthetic dataset for classification problems (by default, 20 features and 2 classes). It is not real-world data; it is just a sample of 1000 rows. It gives us input features X and output labels y.

- This step is mainly to simulate a real machine learning problem, so we can focus on understanding AUC-ROC.

3. Splitting Data

- After that, we split the dataset into training and testing sets using train_test_split. The idea is simple: training data is used to teach the model, and testing data is used to check how well the model performs on unseen data.

- Here, 70% is used for training and 30% for testing (test_size=0.3). Evaluating on held-out data keeps the score from being inflated by examples the model has already seen.

4. Training the Model

- Now we create and train the model using LogisticRegression. We fit the model to the training data so it can learn patterns and separate the two classes (0 and 1).

- At this stage, the model is basically learning relationships between input features and output labels.

5. Getting Probability Predictions

- Once the model is trained, we get probability predictions using predict_proba(). Instead of directly predicting 0 or 1, we get probabilities like 0.8 or 0.3.

- These values indicate how confident the model is in its prediction. We take only the second column ([:, 1]) because it represents the probability of the positive class. This step is important because ROC needs continuous scores so that the threshold can be varied; hard 0/1 predictions would give only a single point on the curve.
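The column layout of predict_proba() can be confirmed from the fitted model. The sketch below uses a small synthetic dataset (the sizes and seeds are arbitrary assumptions) and relies on the `classes_` attribute, which lists the labels in the same order as the probability columns.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Small synthetic dataset, only to have something to fit
X, y = make_classification(n_samples=200, random_state=0)
model = LogisticRegression(max_iter=1000).fit(X, y)

proba = model.predict_proba(X[:5])

# Columns of predict_proba follow the order of model.classes_
print("Class order:", model.classes_)
print("Probabilities:\n", proba.round(3))
print("Rows sum to 1:", np.allclose(proba.sum(axis=1), 1.0))

# Column 1 is the score for model.classes_[1], the positive class here
positive_scores = proba[:, 1]

# For a binary model, predict() corresponds to a 0.5 cut-off on that score
hard_labels = model.predict(X[:5])
print("Hard labels:      ", hard_labels)
print("Score > 0.5 gives:", (positive_scores > 0.5).astype(int))
```

Because predict() applies one fixed cut-off, passing its output to roc_curve() would throw away the information the curve is built from.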

6. Calculating ROC Values

- After that, we calculate ROC values using roc_curve(). It takes the actual labels and predicted probabilities as input and returns three values: the False Positive Rate (FPR), the True Positive Rate (TPR), and the thresholds.

- These values show how the model behaves when we change the decision threshold. Each threshold gives a different balance between correctly detected positives (TPR) and false alarms (FPR).
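One way to see what the three arrays mean is to recompute TPR and FPR by hand for every threshold that roc_curve() returns. The sketch below does that on a small synthetic dataset. The sizes and seeds are arbitrary assumptions, and a score equal to the threshold is treated as positive.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_curve
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=500, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)
model = LogisticRegression(max_iter=1000).fit(X_train, y_train)
y_probs = model.predict_proba(X_test)[:, 1]

fpr, tpr, thresholds = roc_curve(y_test, y_probs)

# Recompute TPR and FPR by hand for each returned threshold
manual_tpr, manual_fpr = [], []
for t in thresholds:
    y_pred = y_probs >= t  # positive when the score reaches the threshold
    manual_tpr.append(np.sum(y_pred & (y_test == 1)) / np.sum(y_test == 1))
    manual_fpr.append(np.sum(y_pred & (y_test == 0)) / np.sum(y_test == 0))

print("Manual TPR matches roc_curve:", np.allclose(manual_tpr, tpr))
print("Manual FPR matches roc_curve:", np.allclose(manual_fpr, fpr))

# Show a few evenly spaced points along the curve
for i in np.linspace(0, len(thresholds) - 1, 5, dtype=int):
    print(f"threshold={thresholds[i]:.3f}  FPR={fpr[i]:.2f}  TPR={tpr[i]:.2f}")
```

The thresholds come back in decreasing order, so moving along the arrays means loosening the cut-off: both FPR and TPR can only stay the same or grow.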

7. Calculating AUC Score

- Then we calculate the AUC score using roc_auc_score(). This gives us a single number that summarizes the ROC curve.

- It indicates how well the model separates positive and negative classes. A higher AUC means better performance and better ranking ability of the model.

8. Plotting the ROC Curve

- Finally, we plot the ROC curve using matplotlib. The X-axis represents False Positive Rate, and the Y-axis represents True Positive Rate. We also plot a diagonal dashed line, which represents a model that guesses at random (AUC = 0.5).

- The ROC curve shows the actual performance of our model; the closer it is to the top-left corner, the better the model performs. We also display the AUC score on the graph to quickly assess how well the model performs.


Conclusion

The AUC-ROC curve is one of the most powerful tools for evaluating classification models. It helps us understand how well a model separates classes rather than just relying on accuracy.

However, no single metric is perfect. A good machine learning engineer always chooses the right evaluation method based on the problem.

If you remember one thing from this blog, let it be this:

Accuracy tells you how often you’re right.

AUC-ROC tells you how well your model truly discriminates between the classes.

FAQs

What does the AUC-ROC curve measure?

It measures how well a model distinguishes between positive and negative classes across all thresholds.

What does an AUC of 0.5 mean?

It means the model is performing like random guessing.

When should I not use ROC-AUC?

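Be careful with it on highly imbalanced datasets: because the False Positive Rate is divided by a very large number of negatives, ROC-AUC can look good even when most flagged cases are false alarms, so a precision-recall curve is often a better companion. Also avoid relying on it alone when error costs differ greatly, because it summarizes every threshold rather than the one you will actually deploy.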
