Building Visual RAG Pipelines with Llama 3.2 Vision and Ollama: A Beginner's Guide 2026

Mar 07, 2026 | 12 Min Read


Most AI apps are great at reading plain text. But hand them a PDF packed with charts, scanned tables, and diagrams and they go completely blind. That is exactly the gap that visual RAG pipelines were built to fill.

In this guide you will learn everything about building visual RAG pipelines with Llama 3.2 Vision and Ollama, step by step. No cloud subscriptions, no expensive API bills, just a fully local setup that reads, understands, and answers questions about visual documents the same way a human actually would.

Quick Answer

Building visual RAG pipelines with Llama 3.2 Vision and Ollama means converting PDFs into page images, embedding them into a local vector store, and retrieving the most relevant page to pass to Llama 3.2 Vision for a grounded answer. The entire pipeline runs locally on your machine with no internet connection required after setup.

Table of contents

- What Is a Visual RAG Pipeline and Why Does It Matter

- Important Licensing Note Before You Start

- Understanding the Tools: Llama 3.2 Vision, Ollama, and the Supporting Stack

- Hardware Requirements Before You Begin

- How Visual RAG Pipelines Work: The Full Architecture Explained

- Building Visual RAG Pipelines with Llama 3.2 Vision and Ollama Step by Step

- Common Errors and How to Fix Them

- A Complete Example Flow You Can Test Right Now

- Tips for Building Better Visual RAG Pipelines with Llama 3.2 Vision

- 💡 Did You Know?

- FAQs

- What is a visual RAG pipeline and how is it different from regular RAG?

- Do I need a GPU to build visual RAG pipelines with Llama 3.2 Vision and Ollama?

- Can EU-based developers use Llama 3.2 Vision for building visual RAG pipelines?

- Why does the pipeline convert PDFs to images instead of extracting text directly?

- How do I improve retrieval accuracy when my documents are mostly visual with very little text?

What Is a Visual RAG Pipeline and Why Does It Matter

Most people have heard of RAG by now. RAG stands for Retrieval-Augmented Generation. Instead of relying only on what an AI was trained on, RAG lets it pull in relevant content from your own documents before generating an answer. Think of it as giving the AI an open book during an exam.

Standard RAG works great for text documents. But the moment a document contains a bar chart, a scanned invoice, an engineering diagram, or a table full of data, standard RAG becomes useless. It simply cannot see those elements. Visual RAG solves this by adding image understanding to the retrieval and generation process.

Fun Fact: Research published in the VisRAG paper in 2024 found that visual RAG systems achieved a 39% relative accuracy improvement over text-only RAG systems when tested across six different multi-modal document datasets. Seeing the page directly is simply more accurate than parsing text alone.

Here is why building visual RAG pipelines matters so much in 2026:

- Works with real-world documents: Business reports, research papers, medical records, and technical manuals all contain charts, tables, and diagrams that text-only RAG misses completely.

- More accurate and grounded answers: When the model can see the actual chart and its caption together, its answers are far more reliable than anything text extraction alone could produce.

- Fully private and local: Running the pipeline with Ollama means your documents never leave your machine. No cloud uploads, no data sharing, no per-token API costs.

- Scales to any document type: PDFs, scanned images, slide decks, and technical manuals all work within the same pipeline structure once it is set up.


Important Licensing Note Before You Start

This is something most beginner guides skip and it matters before you write a single line of code. Llama 3.2 Vision has a geographic licensing restriction that directly affects who can legally use it.

If you are an individual based in the European Union, or a company with its principal place of business in the EU, Meta’s Llama 3.2 Community License does not grant you the rights to use the multimodal vision models directly. This restriction was put in place due to regulatory uncertainty around the EU AI Act and EU data privacy laws.

What this means in plain language:

- If you are outside the EU: You can download and use Llama 3.2 Vision freely for research and most commercial use cases as long as you follow the Community License terms.

- If you are based in the EU: You cannot legally use the Llama 3.2 Vision model directly as an individual or business. You can, however, use products and services that incorporate the model as an end user.

- If you work for a non-EU company but are based in the EU: You may use the model within the scope of your work for that non-EU company.

- Alternative options for EU developers: Consider using Mistral’s vision models or other multimodal models that are fully available in the EU without restriction.

Always check the official Llama 3.2 license and acceptable use policy at llama.com before building or distributing anything using this model.

Understanding the Tools: Llama 3.2 Vision, Ollama, and the Supporting Stack

Before writing any code it is important to understand what each tool does and why this particular combination works so well together. Most beginners jump straight to installation and end up confused when something does not connect the way they expected.

Think of it like assembling a team before a project. Each person has a specific role and knowing those roles upfront means the project runs smoothly instead of chaotically.

Brain Teaser: If Llama 3.2 Vision is the reader who can see both text and images, and Ollama is the desk that lets it work locally, then what are ChromaDB and CLIP? They are the filing cabinet and the index system that make sure the right document lands on the reader’s desk when a question comes in.

Here is what each tool in the visual RAG stack does:

- Llama 3.2 Vision: Meta’s open-weight multimodal model, released on September 25, 2024 under the Llama 3.2 Community License. It comes in two sizes, 11 billion parameters for consumer hardware and 90 billion parameters for large-scale applications. It processes both text and images in a single prompt and is trained on 6 billion image-text pairs, making it well suited to reading charts, diagrams, tables, and scanned documents.

- Ollama: A free local model runner that lets you download and run large language models including Llama 3.2 Vision directly on your own machine. It requires no API key and no internet connection once the model is downloaded. It works on Mac, Windows, and Linux.

- ChromaDB: A lightweight open source vector database that stores your text and image embeddings and makes them searchable by meaning rather than just keywords. It runs entirely on your local machine alongside the rest of the pipeline.

- CLIP: OpenAI’s Contrastive Language-Image Pretraining model, trained on 400 million image-text pairs. It maps both images and text into the same numerical space, which is what makes it possible to search your documents using a natural language question even when the answer lives inside a chart or diagram.

- Tesseract OCR: An open source text extraction tool that reads and extracts text from images. Since Llama 3.2 Vision receives images as input rather than raw PDFs, Tesseract pulls the visible text from each page image so it can be embedded and searched alongside the visual content.

- pdf2image: A Python library that converts each page of a PDF into a high-resolution image file. This conversion is the first step in any visual RAG pipeline built around a vision language model.

Hardware Requirements Before You Begin

This is another section most beginner tutorials skip entirely and it is one of the most searched questions about this topic. Knowing your hardware limits before you start saves hours of frustration.

The good news is you do not need a server or a high-end workstation to build a working visual RAG pipeline. The 11B model is designed to run on consumer hardware.

Here is a clear breakdown of what you need for each setup:

| Setup | RAM | GPU VRAM | Storage | Best For |
| --- | --- | --- | --- | --- |
| Llama 3.2 Vision 11B on CPU | 16 GB minimum | No GPU needed | 8 GB free | Laptops, development, testing |
| Llama 3.2 Vision 11B on GPU | 16 GB RAM | 8 GB VRAM minimum | 8 GB free | Faster inference on most modern laptops |
| Llama 3.2 Vision 90B on GPU | 64 GB RAM | 24 GB VRAM minimum | 55 GB free | Workstations, production applications |
| Llama 3.2 Vision 90B high performance | 128 GB RAM | Dual RTX 4090 or A100 | 55 GB free | Enterprise grade speed and accuracy |

If you are on a modern laptop with 16 GB of RAM, the 11B model will work for development and testing. Expect slower responses on CPU-only setups but the output quality remains strong.

How Visual RAG Pipelines Work: The Full Architecture Explained

Understanding what happens under the hood before writing code is what separates someone who can fix problems from someone who is completely lost when something goes wrong. The architecture is simpler than it sounds once you see it laid out clearly.

A visual RAG pipeline runs in two phases. The indexing phase processes your documents and stores them so they can be searched. The retrieval and generation phase uses that index to find the right page and generate an answer when a user asks a question.

Fun Fact: Traditional text-only RAG pipelines lose critical information when processing visual documents. Table layouts get completely flattened, chart values get separated from their axis labels, and diagram relationships disappear when only the text is extracted. Visual RAG keeps all of that intact by treating the page image itself as the source of truth.

Here is the full pipeline from document to answer in plain steps:

- Step 1 (indexing), Document ingestion: Your PDF is loaded and every page is converted into a high-resolution PNG image using pdf2image. This step is necessary because vision models process images, not raw PDF file formats.

- Step 2 (indexing), OCR text extraction: Tesseract OCR reads each page image and extracts all visible text. This text is stored alongside the image file path so both are available during the retrieval step.

- Step 3 (indexing), Embedding generation: The extracted text is embedded using a sentence transformer model called all-MiniLM-L6-v2, and each page image is embedded using CLIP. The text embeddings are stored in ChromaDB with metadata that links them back to their source page, and the CLIP image embeddings are kept alongside them, keyed by page, for the re-ranking step.

- Step 4 (retrieval), Semantic retrieval: When a user asks a question, the question is embedded using the same text model. ChromaDB performs a similarity search to find the most relevant pages. A second ranking step uses CLIP to confirm the retrieved page is also visually relevant, not just textually similar.

- Step 5 (generation), Multimodal generation: The retrieved page image and the user’s question are passed together to Llama 3.2 Vision running locally via Ollama. The model reads both the image and the question, reasons across the visual content, and returns a grounded answer based on what it actually sees on that page.
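To make the two phases concrete, here is a minimal sketch of indexing and retrieval using only the Python standard library. The names `PageRecord`, `index_pages`, `retrieve`, and `toy_embed` are hypothetical, and `toy_embed` is a keyword-counting stand-in for a real embedding model, so treat this as an illustration of the data flow rather than the pipeline itself. The generation step is left out because it needs a running model.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

Vector = Sequence[float]


@dataclass
class PageRecord:
    """One indexed page: its number, OCR text, image path, and text embedding."""
    page: int
    text: str
    image_path: str
    embedding: Vector


def dot(a: Vector, b: Vector) -> float:
    """Dot product used here as a simple similarity score."""
    return sum(x * y for x, y in zip(a, b))


def index_pages(pages: List[dict], embed: Callable[[str], Vector]) -> List[PageRecord]:
    """Indexing phase: embed each page's text once and keep it with its image path."""
    return [
        PageRecord(p["page"], p["text"], p["image_path"], embed(p["text"]))
        for p in pages
    ]


def retrieve(question: str, index: List[PageRecord], embed: Callable[[str], Vector]) -> PageRecord:
    """Retrieval phase: return the page whose embedding scores highest for the question."""
    query = embed(question)
    return max(index, key=lambda record: dot(query, record.embedding))


def toy_embed(text: str) -> Vector:
    """Hypothetical stand-in embedder: counts of three keywords (not a real model)."""
    words = text.lower().split()
    return [words.count("revenue"), words.count("safety"), words.count("wiring")]
```

In the real pipeline the `embed` argument would be the sentence transformer, the index would live in ChromaDB, and the `image_path` of the winning record is what gets handed to Llama 3.2 Vision.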

Building Visual RAG Pipelines with Llama 3.2 Vision and Ollama Step by Step

Now that you understand the architecture it is time to build. This section walks through every step from installation to your first working visual question and answer query. Follow each step in order and run the verification checks before moving on to the next one.

Commands and code snippets in this guide appear inline within each step, so you can copy them directly into your terminal or script.

1. Install Ollama and Pull Llama 3.2 Vision

Everything starts here. Ollama is what makes it possible to run Llama 3.2 Vision on your local machine without any cloud dependency. Getting this right first means everything else builds on a solid foundation.

Think of this the same way you would think about plugging in and turning on your oven before you start cooking. Nothing else in the kitchen matters until that step is done correctly.

Here is exactly how to install Ollama and pull the Llama 3.2 Vision model:

- Download and install Ollama: Go to ollama.com, click Download, and install the version for your operating system. Ollama version 0.4.1 or higher is required for full Llama 3.2 Vision support. Earlier versions will not handle vision inputs correctly.

- Pull the vision model: Open your terminal and run ollama pull llama3.2-vision to download the 11B model. The download is roughly 8 gigabytes, so allow a few minutes depending on your connection speed.

- Verify it is working: Run ollama run llama3.2-vision in your terminal, type a simple question, and confirm you get a sensible response before continuing.

- Check model sizes: If you have the hardware for it, you can pull the larger model with ollama pull llama3.2-vision:90b. For most beginners the 11B model is the right starting point.
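If you would rather check the version requirement from a script than by eye, the sketch below parses the output of ollama --version and compares it with the 0.4.1 minimum stated above. The helper names are hypothetical, and the parser simply takes the first MAJOR.MINOR.PATCH number it finds, since the exact wording of the version output is an assumption here.

```python
import re
import subprocess
from typing import Optional, Tuple

MINIMUM_VERSION = (0, 4, 1)  # minimum version stated in this guide


def parse_version(text: str) -> Optional[Tuple[int, ...]]:
    """Return the first MAJOR.MINOR.PATCH triple found in text, or None."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        return None
    return tuple(int(part) for part in match.groups())


def meets_minimum(version_text: str, minimum: Tuple[int, ...] = MINIMUM_VERSION) -> bool:
    """True when the version found in version_text is at least the minimum."""
    version = parse_version(version_text)
    return version is not None and version >= minimum


def installed_ollama_version() -> str:
    """Run `ollama --version` and return its raw output, or "" if Ollama is not on PATH."""
    try:
        result = subprocess.run(
            ["ollama", "--version"], capture_output=True, text=True, check=False
        )
    except FileNotFoundError:
        return ""
    return result.stdout + result.stderr
```

Calling meets_minimum(installed_ollama_version()) returns False both when Ollama is missing and when it is older than the minimum, which is a reasonable signal to reinstall before continuing.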

Fun Fact: Meta officially listed Ollama as a single-node distribution partner when Llama 3.2 was released on September 25, 2024, making it the most straightforward and recommended way to run these vision models locally on a single machine.

2. Install All Required Python Libraries

With Ollama running, the next step is installing every Python library the pipeline depends on. Each one plays a specific role and none of them are optional. Installing everything in one go here avoids the frustrating experience of hitting a missing library error halfway through a build.

It is the same principle as unpacking all your ingredients before you start cooking. Finding out you are missing something halfway through is far more disruptive than checking upfront.

Here is what to install and exactly why each library is needed:

- Install pdf2image: Run pip install pdf2image to get the library that converts PDF pages into PNG image files. Also install the Poppler binary for your operating system as pdf2image depends on it to function. On Mac run brew install poppler, on Ubuntu run sudo apt-get install poppler-utils, and on Windows download the binary from the official Poppler releases page.

- Install pytesseract: Run pip install pytesseract for the Python wrapper around Tesseract OCR. Then install the Tesseract binary itself. On Mac run brew install tesseract, on Ubuntu run sudo apt install tesseract-ocr, and on Windows download the installer from the official Tesseract GitHub wiki and add its installation path to your system environment variables.

- Install sentence-transformers: Run pip install sentence-transformers to get the text embedding model that converts extracted page text into searchable vectors for ChromaDB.

- Install chromadb: Run pip install chromadb to set up the local vector database. ChromaDB runs entirely in-process with no separate server required for single-worker development setups.

- Install transformers and torch: Run pip install transformers torch to get access to the CLIP model and its image processing tools for visual embedding generation.

- Install the Ollama Python client: Run pip install ollama to get the Python library that lets your script send prompts and images to the locally running Ollama model.
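A quick way to confirm that everything in the list above is installed is to ask Python which modules and system binaries it can find. This is a small sketch using only the standard library; the function name `find_missing` is hypothetical, and the binary names assume that Poppler provides pdftoppm and that Tesseract is installed as tesseract.

```python
import importlib.util
import shutil
from typing import Dict, Iterable, List

# Import names (not pip package names) for the libraries listed above.
PYTHON_MODULES = [
    "pdf2image", "pytesseract", "sentence_transformers",
    "chromadb", "transformers", "torch", "ollama",
]
# Command-line tools that pdf2image and pytesseract call under the hood.
SYSTEM_BINARIES = ["pdftoppm", "tesseract"]


def find_missing(
    modules: Iterable[str] = PYTHON_MODULES,
    binaries: Iterable[str] = SYSTEM_BINARIES,
) -> Dict[str, List[str]]:
    """Return the modules that cannot be imported and the binaries not found on PATH."""
    missing_modules = [m for m in modules if importlib.util.find_spec(m) is None]
    missing_binaries = [b for b in binaries if shutil.which(b) is None]
    return {"modules": missing_modules, "binaries": missing_binaries}
```

Running print(find_missing()) before you start should show two empty lists; anything listed there points back to the matching install step.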

3. Convert Your PDF Into High-Resolution Page Images

This is the first real step of the indexing phase and it surprises many beginners. Llama 3.2 Vision cannot process a raw PDF file. It needs images. So the very first thing you do to any document is convert every page into a PNG image.

It is the same logic as scanning a physical document before feeding it to a machine reader. The scanner does not read the paper, it photographs it. That photograph is what the AI can actually work with and reason over.

Brain Teaser: Why do we convert PDFs to images instead of just extracting all the text directly from the PDF? Because PDFs contain charts, tables, and diagrams where the visual layout carries meaning that plain text extraction completely destroys. A bar chart converted to text becomes a list of disconnected numbers with no context. The image keeps everything intact.

Here is how to convert your PDF into searchable page images:

- Import the library: Add from pdf2image import convert_from_path at the top of your Python script.

- Convert the PDF to images: Use pages = convert_from_path("your_document.pdf", dpi=200) to load every page as a high-resolution image object. A DPI setting of 200 gives Tesseract and Llama 3.2 Vision enough clarity to read small text and fine diagram details accurately.

- Save each page as a PNG file: Loop through the pages list and save each one with page.save(f"page_{i}.png", "PNG") where i is the page index. Store every file path in a list for use in later steps.

- Verify the output visually: Open three or four of the saved PNG files on your computer and confirm they are readable, properly oriented, and capturing the full content of each page before moving on.
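Put together, the conversion steps above might look like the following sketch. The function names and the pages output folder are assumptions chosen for illustration; convert_from_path and its dpi argument come from pdf2image as described in the steps.

```python
from pathlib import Path
from typing import List


def page_image_path(output_dir: str, index: int) -> Path:
    """Build the file path used for the page at zero-based position `index`."""
    return Path(output_dir) / f"page_{index}.png"


def pdf_to_page_images(pdf_path: str, output_dir: str = "pages", dpi: int = 200) -> List[str]:
    """Convert every PDF page to a PNG file and return the saved paths in page order."""
    # Imported here so page_image_path works even before pdf2image is installed.
    from pdf2image import convert_from_path

    Path(output_dir).mkdir(parents=True, exist_ok=True)
    pages = convert_from_path(pdf_path, dpi=dpi)  # list of PIL images, one per page
    saved_paths = []
    for index, page in enumerate(pages):
        target = page_image_path(output_dir, index)
        page.save(target, "PNG")
        saved_paths.append(str(target))
    return saved_paths
```

A call such as image_paths = pdf_to_page_images("your_document.pdf") gives you the list of file paths that the OCR and embedding steps consume.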

4. Extract Text from Page Images Using Tesseract OCR

With page images ready, Tesseract OCR now reads and extracts all visible text from each one. This text is what gets embedded into ChromaDB for keyword and semantic search during the retrieval phase.

A common beginner question is why you need OCR at all if Llama 3.2 Vision can already read images. The answer is efficiency and scale. OCR creates searchable text that lets you retrieve only the one or two most relevant pages from a hundred-page document instead of passing every single page to the vision model every time a question comes in.

Here is how to run Tesseract OCR across all your page images:

- Import the required libraries: Add import pytesseract and from PIL import Image to your imports at the top of your script.

- Extract text from a single page: Use text = pytesseract.image_to_string(Image.open("page_0.png")) to pull all readable text from a page image as a plain string.

- Store text alongside its page reference: Save each extracted text string with its image path in a structured list like {"page": 0, "text": text, "image_path": "page_0.png"} so both are easy to look up during retrieval.

- Keep visually heavy pages in the index: Some pages have very little text because they are mostly diagrams or charts. Do not skip them. Keep their image paths in the index so CLIP can still embed and retrieve the visual content even when OCR produces minimal text output.
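The OCR loop described above can be sketched as follows. The helper names are hypothetical, and collapsing whitespace in the OCR output is an optional choice made here to keep the stored text tidy. Pages with no readable text are kept with an empty string rather than dropped, matching the last point in the list.

```python
from typing import Dict, List


def make_page_record(page: int, text: str, image_path: str) -> Dict[str, object]:
    """Bundle OCR text with its page number and image path."""
    cleaned = " ".join(text.split())  # collapse OCR line breaks and runs of spaces
    return {"page": page, "text": cleaned, "image_path": image_path}


def ocr_pages(image_paths: List[str]) -> List[Dict[str, object]]:
    """Run Tesseract on each page image; pages without text keep an empty string."""
    # Imported lazily so make_page_record stays usable without the OCR stack.
    import pytesseract
    from PIL import Image

    records = []
    for page, image_path in enumerate(image_paths):
        with Image.open(image_path) as image:
            text = pytesseract.image_to_string(image)
        records.append(make_page_record(page, text, image_path))
    return records
```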

Fun Fact: OCR error rates of 20 percent or higher are still common in real-world documents, especially scanned ones with low resolution or unusual fonts. Using a high DPI setting of 200 or above when converting PDFs to images dramatically reduces these errors and improves retrieval accuracy downstream.

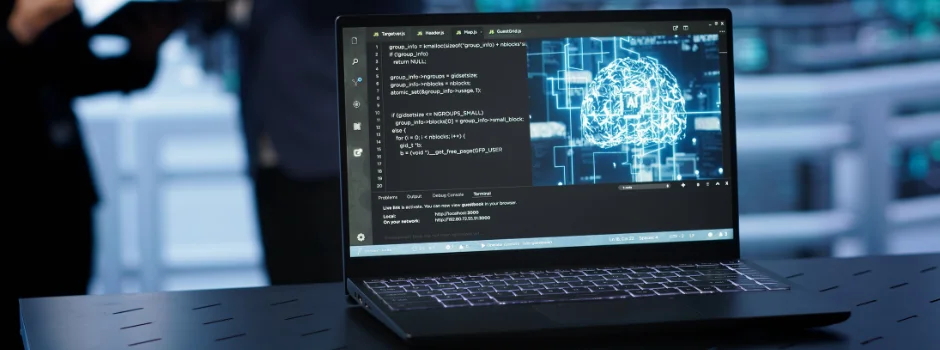

5. Generate Text and Image Embeddings and Store Them in ChromaDB

This is the most technically important step in the entire indexing phase. Embeddings are numerical representations of your content that capture meaning rather than just keywords. When you embed a sentence and an image that contain the same concept, both end up with similar numbers in the vector space. That similarity is what makes semantic search possible.

Think of embeddings as translating your document into a language that a computer can compare instantly. A question about sales performance and a bar chart showing monthly revenue will end up numerically close to each other even though one is text and the other is an image.

Here is how to generate embeddings and load your vector store:

- Import your embedding models: Add from sentence_transformers import SentenceTransformer and from transformers import CLIPProcessor, CLIPModel to your script. Load them with text_model = SentenceTransformer("all-MiniLM-L6-v2") for text, and clip_model = CLIPModel.from_pretrained("openai/clip-vit-base-patch32") together with clip_processor = CLIPProcessor.from_pretrained("openai/clip-vit-base-patch32") for images.

- Generate text embeddings: For each page, encode the OCR-extracted text using text_embedding = text_model.encode(page_text).tolist() to produce a vector that represents the semantic meaning of that page’s text content.

- Generate image embeddings: Open each page image with PIL, run it through the CLIP processor and model, and extract the image features tensor. Convert this to a list for storage. These image embeddings are kept separately in a dictionary keyed by page ID for use during the visual re-ranking step.

- Store everything in ChromaDB: Create a ChromaDB collection and use collection.add(embeddings=[text_embedding], documents=[page_text], metadatas=[{"image_path": "page_0.png", "page": 0}], ids=["page_0"]) to store each page’s text embedding with its image path and page number as metadata.
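The embedding and storage steps above can be sketched as below. The function names are hypothetical, and the models and collection are passed in as arguments so you can load them once elsewhere. Normalizing the CLIP vectors to unit length is a choice made here so that later similarity comparisons are simple; the call to get_image_features is assumed to return a tensor with one row per image, which is worth confirming against your installed transformers version.

```python
import math
from typing import Dict, List


def l2_normalize(vector: List[float]) -> List[float]:
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(value * value for value in vector))
    return vector if norm == 0 else [value / norm for value in vector]


def embed_image(image_path: str, clip_model, clip_processor) -> List[float]:
    """Return a unit-length CLIP embedding for one page image."""
    import torch
    from PIL import Image

    with Image.open(image_path) as image:
        inputs = clip_processor(images=image.convert("RGB"), return_tensors="pt")
    with torch.no_grad():
        # Assumption: returns a (1, dim) tensor for a single input image.
        features = clip_model.get_image_features(**inputs)
    return l2_normalize(features[0].tolist())


def index_records(
    records: List[dict], collection, text_model, clip_model, clip_processor
) -> Dict[str, List[float]]:
    """Store text embeddings in ChromaDB and return image embeddings keyed by page ID."""
    image_embeddings = {}
    for record in records:
        page_id = f"page_{record['page']}"
        collection.add(
            ids=[page_id],
            embeddings=[text_model.encode(record["text"]).tolist()],
            documents=[record["text"]],
            metadatas=[{"image_path": record["image_path"], "page": record["page"]}],
        )
        image_embeddings[page_id] = embed_image(
            record["image_path"], clip_model, clip_processor
        )
    return image_embeddings
```

The text and image vectors come from different models and have different lengths, which is one reason to keep the image embeddings in their own dictionary rather than in the same ChromaDB collection.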

6. Retrieve the Most Relevant Page for a User Query

The indexing phase is now complete. When a user asks a question, your pipeline needs to find the single most relevant page from the entire document before anything gets sent to Llama 3.2 Vision. This retrieval step is what keeps the system accurate and prevents the model from hallucinating answers.

Good retrieval is the difference between an AI that gives you the right answer and one that confidently makes something up. The better your retrieval, the more grounded your final answer will be in content that actually exists in the document.

Brain Teaser: What happens if your retrieval step returns the wrong page? Llama 3.2 Vision will generate an answer based on whatever image it receives. If that image is the wrong page, the answer will look confident but be completely wrong. This is why testing retrieval independently before connecting the model is so important.

Here is how to build the retrieval step with hybrid text and visual ranking:

- Embed the user’s question: When a question arrives, encode it with query_embedding = text_model.encode(user_question).tolist() to turn the question into the same vector format as your stored page embeddings.

- Search ChromaDB for the top matches: Use results = collection.query(query_embeddings=[query_embedding], n_results=3) to retrieve the three pages whose text embeddings are most semantically similar to the question.

- Apply CLIP visual re-ranking: For each of the top three results, compute the cosine similarity between the CLIP text encoding of the question and the stored image embedding of that page. The page with the highest combined text and visual similarity score wins.

- Extract the winning image path: Pull the image path from the metadata of the top-ranked result so you know exactly which page image to send to Llama 3.2 Vision in the next step.
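Here is one way the re-ranking step could be written. It takes the dictionary returned by collection.query, the CLIP text embedding of the question, and the image embeddings keyed by page ID. The scoring formula is an assumption for illustration, not a fixed recipe: ChromaDB returns distances where smaller means closer, so the sketch converts them with 1 / (1 + distance) before blending with CLIP cosine similarity through a hypothetical visual_weight parameter.

```python
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sum(x * x for x in a) ** 0.5
    norm_b = sum(y * y for y in b) ** 0.5
    return 0.0 if norm_a == 0 or norm_b == 0 else dot / (norm_a * norm_b)


def rerank(
    results: dict,
    query_clip_embedding: Sequence[float],
    image_embeddings: Dict[str, Sequence[float]],
    visual_weight: float = 0.5,
) -> List[dict]:
    """Blend ChromaDB text distance with CLIP similarity; best candidate first."""
    ranked = []
    candidates = zip(
        results["ids"][0], results["distances"][0], results["metadatas"][0]
    )
    for page_id, distance, metadata in candidates:
        text_score = 1.0 / (1.0 + distance)  # smaller distance gives a higher score
        visual_score = cosine_similarity(query_clip_embedding, image_embeddings[page_id])
        combined = (1.0 - visual_weight) * text_score + visual_weight * visual_score
        ranked.append(
            {"id": page_id, "image_path": metadata["image_path"], "score": combined}
        )
    return sorted(ranked, key=lambda item: item["score"], reverse=True)
```

The first element of the returned list carries the image_path to send to the model. Raising visual_weight makes the chart or diagram itself count for more than the OCR text, which tends to matter on visually heavy documents.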

7. Generate an Answer Using Llama 3.2 Vision via Ollama

This is the final step and the payoff for everything that came before it. You now have the user’s question and the most relevant page image. You pass both to Llama 3.2 Vision running locally via Ollama and it generates a grounded, context-aware answer based on what it actually sees.

The model is not guessing from its training data. It is looking at your actual document page and reasoning from the real visual content in front of it. That is what makes visual RAG fundamentally different and more reliable than a standard LLM response.

Here is how to run multimodal inference with Llama 3.2 Vision through Ollama:

- Import the required libraries: Add import ollama and import base64 to your script to prepare for the image-encoded API call.

- Encode the page image as base64: Use image_data = base64.b64encode(open(image_path, "rb").read()).decode("utf-8") to convert the PNG file into a base64 string that can be embedded in the API request.

- Build and send the multimodal prompt: Call the Ollama API with response = ollama.chat(model="llama3.2-vision", messages=[{"role": "user", "content": user_question, "images": [image_data]}]) to send both the question and the image together to the model.

- Extract and return the answer: Get the text response with answer = response["message"]["content"] and return it to the user. The answer will reference specific visual elements from the page, making it far more accurate than any text-only system could produce.
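The generation step can be wrapped in two small functions, sketched below. The function names and the wording of GROUNDING_INSTRUCTION are assumptions added for illustration; the instruction is optional and simply nudges the model to stay on the retrieved page. This sketch passes the image file path in the images list, which the Ollama Python client is expected to read and encode for you; the base64 string from the steps above can be passed in the same position.

```python
from typing import Dict, List

# Hypothetical prompt prefix; adjust or remove it to suit your documents.
GROUNDING_INSTRUCTION = (
    "Answer using only what is visible in the attached page image. "
    "If the page does not contain the answer, say so."
)


def build_messages(question: str, image_path: str) -> List[Dict[str, object]]:
    """Build the single user message that carries both the question and the page image."""
    return [
        {
            "role": "user",
            "content": f"{GROUNDING_INSTRUCTION}\n\nQuestion: {question}",
            "images": [image_path],
        }
    ]


def answer_from_page(question: str, image_path: str, model: str = "llama3.2-vision") -> str:
    """Send the question and page image to the local Ollama model and return its answer."""
    # Imported lazily so build_messages can be tested without a running Ollama server.
    import ollama

    response = ollama.chat(model=model, messages=build_messages(question, image_path))
    return response["message"]["content"]
```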

Common Errors and How to Fix Them

This is the section that saves beginners the most time. Every guide shows you the happy path. Almost none of them tell you what to do when something breaks. Here are the most common errors in visual RAG pipeline builds and exactly how to fix them.

No setup goes perfectly the first time. Knowing what the error means and how to solve it is what turns a frustrating afternoon into a productive one.

Here are the errors you are most likely to hit and how to resolve each one:

- "TesseractNotFoundError" when running pytesseract: This means Python cannot find the Tesseract binary on your system. On Windows you need to add the Tesseract installation folder path, usually C:\Program Files\Tesseract-OCR, to your system environment variables under Path. On Mac or Linux verify the installation with which tesseract in your terminal, then set the path it prints explicitly in your script, for example pytesseract.pytesseract.tesseract_cmd = "/usr/bin/tesseract" on many Linux systems. Homebrew installs on Mac usually live in a different folder, so use the path that which tesseract reports rather than copying this one.

- “Error: unable to find image” when calling Ollama: This usually means the base64 encoding step produced an empty or malformed string. Verify the image file path exists and the file is not corrupted by opening it manually before encoding. Also confirm you are using Ollama version 0.4.1 or higher since earlier versions do not support vision inputs.

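- Blank or near-empty OCR output on some pages: This happens on pages that are predominantly visual with very little readable text. Do not try to fix this with OCR settings. Instead make sure those pages stay in the index with their image paths and CLIP image embeddings. Because the text search in step 6 cannot surface a page that has no text, also compare the CLIP encoding of the question against the image embeddings of those pages so they can still be retrieved visually when text retrieval fails.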

- ChromaDB collection already exists error: This appears when your script calls client.create_collection() for a name that is already present, either because you are using a persistent client such as chromadb.PersistentClient(path="...") and the collection was saved to disk on an earlier run, or because the cell ran twice in the same notebook session. Fix this by using client.get_or_create_collection("your_collection_name") instead of client.create_collection() so it reuses the existing collection rather than throwing an error.

- Slow PDF conversion on large documents: By default pdf2image converts with a single thread, which gets slow on documents with 50 or more pages. Speed this up by passing a higher thread_count to convert_from_path or by using Python’s multiprocessing library to convert page ranges in parallel. You can also process and index the document once with a persistent ChromaDB client so the collection is saved to disk and you never repeat the indexing step.
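The last two fixes can be combined into a small set of helpers, sketched here under a few assumptions: the storage folder chroma_store, the collection name visual_rag_pages, and all function names are hypothetical. The idea is to open a collection that survives restarts, work out which pages are not stored yet, and convert the PDF with several threads.

```python
from typing import Iterable, List


def ids_to_index(all_ids: Iterable[str], existing_ids: Iterable[str]) -> List[str]:
    """Return the page IDs that are not stored yet, preserving their order."""
    existing = set(existing_ids)
    return [page_id for page_id in all_ids if page_id not in existing]


def open_collection(storage_dir: str = "chroma_store", name: str = "visual_rag_pages"):
    """Open a ChromaDB collection saved on disk, creating it on the first run."""
    import chromadb

    client = chromadb.PersistentClient(path=storage_dir)
    return client.get_or_create_collection(name)


def unindexed_page_ids(collection, all_ids: List[str]) -> List[str]:
    """Ask the collection which of all_ids it already holds and return the rest."""
    stored = collection.get(ids=all_ids)["ids"]
    return ids_to_index(all_ids, stored)


def convert_in_parallel(pdf_path: str, dpi: int = 200, workers: int = 4):
    """Convert a PDF to page images using several pdf2image worker threads."""
    from pdf2image import convert_from_path

    return convert_from_path(pdf_path, dpi=dpi, thread_count=workers)
```

On a second run, unindexed_page_ids returns an empty list for a document that is already stored, so the script can skip straight to answering questions.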

A Complete Example Flow You Can Test Right Now

Reading through steps is useful but seeing the entire pipeline described from input to output makes everything concrete. Here is what a proper end-to-end test looks like and what a good response tells you about the health of your pipeline.

Use a PDF that contains at least one chart, table, or diagram for your first test. A business annual report, a scientific paper, or a chemistry textbook page all work well. The goal is to ask a question where the answer only exists inside a visual element.

Here is the example test flow:

Load your PDF, convert it to images at 200 DPI, run Tesseract OCR on all pages, generate text and image embeddings for each page, store everything in ChromaDB, then ask the question: “What does the chart on the third page show and what were the peak values?”

What a good visual RAG response looks like:

- It names specific elements from the image: A reliable response mentions actual axis labels, data series names, percentage values, or time periods that are visibly present in the chart. Generic descriptions without specific numbers are a sign of poor retrieval or a low resolution image.

- It stays within the retrieved page: The answer should only contain information visible on the page that was retrieved. If the model starts discussing content from other parts of the document, your retrieval is returning the wrong page.

- It connects the visual and text content together: The strongest answers combine what Tesseract extracted as caption text with what the model sees in the chart itself, producing a richer and more complete answer than either source alone could give.

- It is honest about limitations: If the image resolution is too low for the model to read a specific value clearly, Llama 3.2 Vision will often say so rather than guess. This is a good sign. Confident but wrong answers indicate the model is hallucinating rather than grounding its response in the image.

Tips for Building Better Visual RAG Pipelines with Llama 3.2 Vision

- Always use a DPI of 200 or higher when converting PDFs. Higher resolution gives Tesseract and Llama 3.2 Vision clearer images to work with and dramatically improves accuracy on small text and fine diagram details.

- Store image paths in your ChromaDB metadata. Without this direct link from the stored embedding back to the image file, retrieved results cannot connect to the right page for Ollama inference.

- Test retrieval independently before testing generation. Run several test queries against ChromaDB and confirm it returns the correct pages before connecting Llama 3.2 Vision. Bad retrieval produces bad answers regardless of model quality.

- Use hybrid text and image re-ranking. Combining ChromaDB text similarity with CLIP visual similarity gives significantly better page retrieval on documents where charts and diagrams carry as much meaning as the surrounding text.

- Keep Ollama updated to version 0.4.1 or higher. Vision model support requires this minimum version. Running an older version will cause silent failures or unhelpful error messages when image inputs are included.

- Start with the 11B model before attempting 90B. The 11B version handles most visual RAG tasks reliably and runs on standard hardware. Only upgrade to 90B if you are working with highly complex visual reasoning tasks and have the GPU resources to support it.
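To act on the tip about testing retrieval independently, a small hit-rate check is often enough. The sketch below is one possible shape for it: you supply a list of questions paired with the page number you expect, plus a function that returns the retrieved page numbers. Both function names are hypothetical, and chroma_retriever assumes each stored page has a page entry in its metadata, as in the indexing step.

```python
from typing import Callable, List, Tuple


def retrieval_hit_rate(
    test_cases: List[Tuple[str, int]],
    retrieve_pages: Callable[[str], List[int]],
) -> float:
    """Fraction of questions whose expected page appears among the retrieved pages."""
    if not test_cases:
        return 0.0
    hits = sum(
        1 for question, expected_page in test_cases
        if expected_page in retrieve_pages(question)
    )
    return hits / len(test_cases)


def chroma_retriever(collection, text_model, n_results: int = 3) -> Callable[[str], List[int]]:
    """Wrap a ChromaDB collection as a function from question to retrieved page numbers."""
    def retrieve_pages(question: str) -> List[int]:
        results = collection.query(
            query_embeddings=[text_model.encode(question).tolist()],
            n_results=n_results,
        )
        return [metadata["page"] for metadata in results["metadatas"][0]]

    return retrieve_pages
```

A handful of hand-written question and page pairs per document is usually enough to show whether retrieval is working before you spend time on generation.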

💡 Did You Know?

- Llama 3.2 Vision was officially released by Meta on September 25, 2024, at the Meta Connect developer conference. It is available in 11 billion and 90 billion parameter sizes and was trained on 6 billion image-text pairs to support visual reasoning, document understanding, image captioning, and chart interpretation.

- The Llama 3.2 Vision 11B and 90B multimodal models are not legally available for direct use by individuals or companies based in the European Union due to Meta’s licensing policy around the EU AI Act. EU-based end users can still access products and services that incorporate the model but cannot use the model directly.

- CLIP, which stands for Contrastive Language-Image Pretraining, was trained by OpenAI on 400 million image-text pairs, allowing it to map images and text into the same vector space and enabling cross-modal retrieval in visual RAG pipelines.

FAQs

1. What is a visual RAG pipeline and how is it different from regular RAG?

Regular RAG retrieves text to help an AI answer questions. Visual RAG also retrieves and processes images, charts, and diagrams using a vision model, making it far more accurate on real-world documents that contain more than just plain text.

2. Do I need a GPU to build visual RAG pipelines with Llama 3.2 Vision and Ollama?

No. The 11B model runs on a laptop with 16 GB of RAM using CPU inference, though it will be slower. For faster responses a GPU with at least 8 GB of VRAM is recommended. The 90B model needs at least 24 GB of VRAM.

3. Can EU-based developers use Llama 3.2 Vision for building visual RAG pipelines?

Not directly. Meta’s license restricts individuals and companies based in the EU from using the multimodal vision models. EU developers can use products that incorporate the model as end users but cannot build with it directly. Mistral’s vision models are a good alternative.

4. Why does the pipeline convert PDFs to images instead of extracting text directly?

Llama 3.2 Vision processes image inputs, not raw PDFs. Converting to images also preserves charts, tables, and diagrams that plain text extraction would distort or lose entirely.

5. How do I improve retrieval accuracy when my documents are mostly visual with very little text?

Increase the weight of CLIP image similarity during re-ranking so visual relevance matters more than text similarity. Use a DPI of 200 or above for clearer images. For documents with almost no text, skip Tesseract entirely and rely purely on CLIP embeddings for retrieval.
