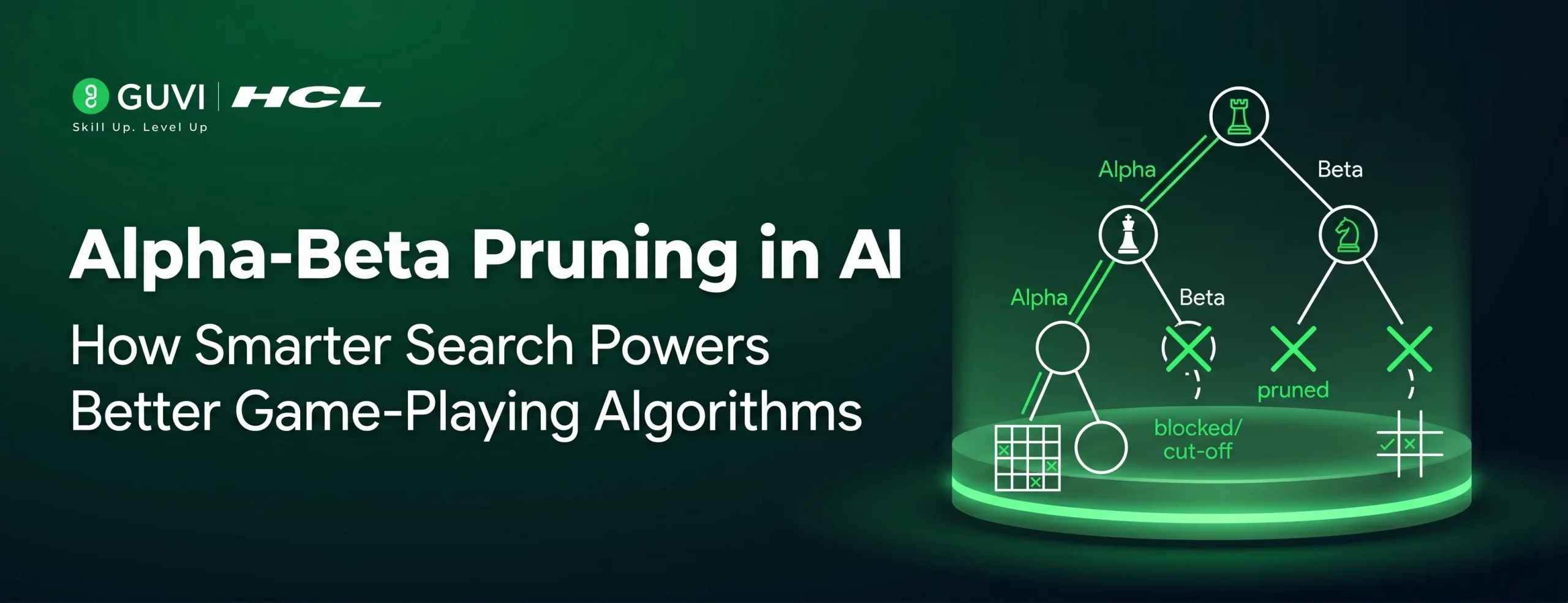

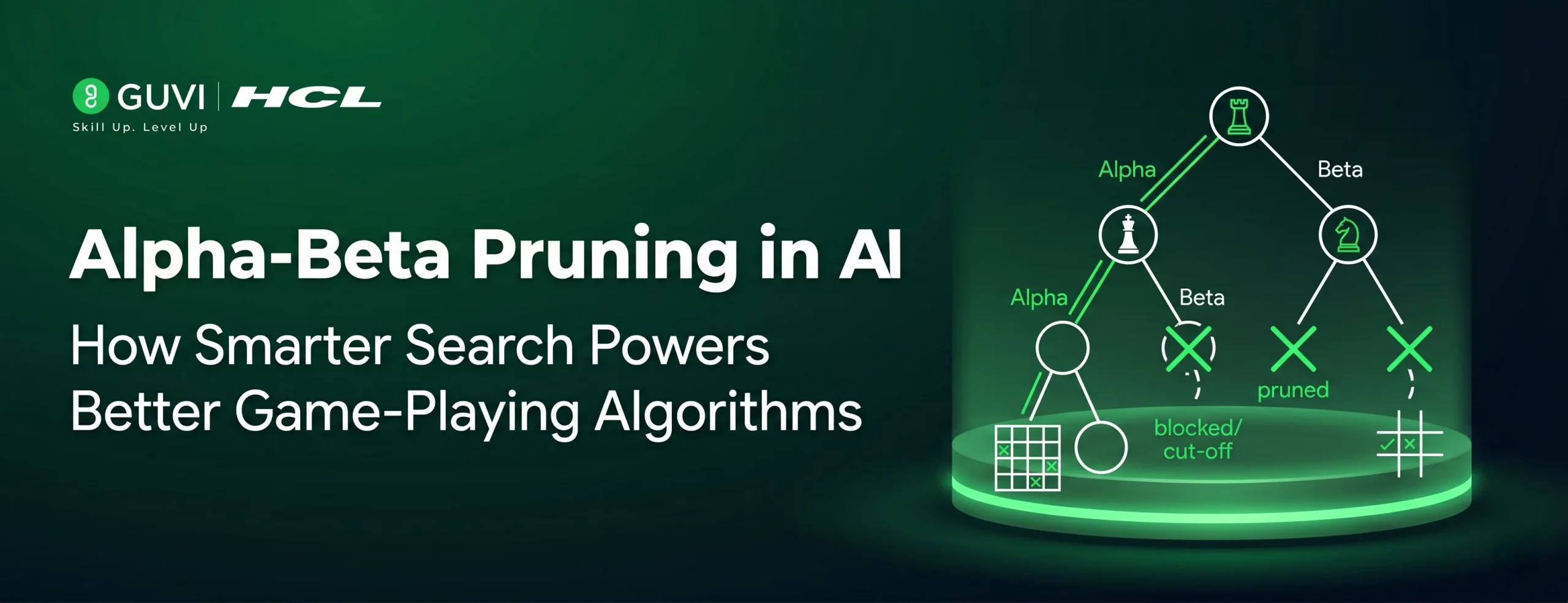

Alpha-Beta Pruning in AI: How Smarter Search Powers Better Game-Playing Algorithms

May 07, 2026 (Last Updated) | 6 Min Read

When you sit down to play chess against a computer, the AI isn’t guessing. It is systematically evaluating thousands, sometimes millions, of possible future moves and selecting the one most likely to lead to victory. But raw computation has limits. Even the fastest hardware cannot evaluate every possible game state in complex games.

This is where Alpha-Beta Pruning transforms the problem. By intelligently discarding branches of the game tree search that are provably irrelevant, the Minimax algorithm becomes far more efficient. The result is an AI that searches deeper, decides faster, and plays stronger, all without sacrificing correctness.

In this article, we break down how Alpha-Beta Pruning works, why it matters in artificial intelligence, how it connects to the broader Minimax framework, and where it is used in real-world game-playing AI systems.

Table of contents

- TL;DR

- Understanding the Minimax Algorithm: The Foundation of Game Tree Search

- How the Minimax Algorithm Works

- What Is Alpha-Beta Pruning? The Core Pruning Technique Explained

- The Pruning Condition

- Alpha-Beta Pruning: A Step-by-Step Walkthrough

- The Setup

- The Traversal

- The Result

- Search Efficiency: How Much Does Alpha-Beta Pruning Actually Help?

- Best Case: Optimal Move Ordering

- Worst Case: Reverse Move Ordering

- Average Case: Random Move Ordering

- Heuristic Evaluation: How AI Scores Game States

- Real-World Applications: Where Alpha-Beta Pruning Powers Game-Playing AI

- Chess Engines

- Checkers and Draughts AI

- Game Tree Search Beyond Board Games

- Advanced Optimisations Built on Alpha-Beta Pruning

- Limitations of Alpha-Beta Pruning You Should Understand

- Conclusion

- FAQs

- What is the difference between Minimax and Alpha-Beta Pruning?

- Does Alpha-Beta Pruning always find the optimal move?

- Why is move ordering so important for Alpha-Beta Pruning?

- Can Alpha-Beta Pruning be used in games with randomness?

- How is Alpha-Beta Pruning used in modern AI systems beyond games?

TL;DR

- The Minimax algorithm evaluates a game tree to find the optimal move for an AI player.

- Alpha-Beta Pruning is a search efficiency technique that cuts branches that Minimax would never choose.

- It maintains two values, alpha (the best score the maximiser can guarantee so far) and beta (the best score the minimiser can guarantee so far), and uses them to decide when to prune.

- In ideal conditions, it reduces the number of evaluated nodes from O(b^d) to O(b^(d/2)).

- It is the core adversarial search technique used in classical chess and checkers engines and in related decision-making systems.

What Is Alpha-Beta Pruning in AI?

Alpha-Beta Pruning is an adversarial search optimisation technique applied to the Minimax algorithm in artificial intelligence. It eliminates branches of the game tree that cannot influence the final decision, allowing AI systems to search deeper and make better moves without evaluating every possible outcome. It is a foundational technique used in game-playing AI for chess, checkers, and similar strategy games.

Understanding the Minimax Algorithm: The Foundation of Game Tree Search

Before Alpha-Beta Pruning makes sense, you need to understand the Minimax algorithm it optimises. Minimax is a decision-making strategy used in two-player, zero-sum games where one player’s gain is exactly the other’s loss.

The algorithm models the game as a tree structure. Each node represents a game state, and each edge represents a move. The two players are called the Maximiser and the Minimiser.

How the Minimax Algorithm Works

The Maximiser tries to achieve the highest possible score. The Minimiser tries to achieve the lowest possible score, making the Maximiser’s situation as bad as possible.

• The algorithm traverses the game tree to the terminal nodes (leaf nodes).

• Terminal nodes are assigned a score: the actual game outcome where the game has ended, or a heuristic evaluation where the search is cut off early.

• Values are propagated back up the tree: MAX nodes take the maximum child value, MIN nodes take the minimum.

• The root node’s final value determines the best move for the current player.

This process guarantees the optimal move assuming the opponent also plays optimally. The problem is computational cost. In chess, the branching factor is roughly 35 moves per position, and a typical game lasts about 80 moves, so the full tree has on the order of 35^80 (roughly 10^123) nodes. Evaluating it exhaustively is impossible in practice. This is the cost that Alpha-Beta Pruning reduces.
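The propagation rule described above can be sketched in a few lines of Python. This is a minimal illustration rather than a production implementation: the nested-list tree format, the function name `minimax`, and the example scores are all assumptions made for this sketch.

```python
from typing import Union

# Assumed tree format for this sketch: a leaf is an int score,
# an internal node is a list of child subtrees.
Tree = Union[int, list]


def minimax(node: Tree, maximising: bool) -> int:
    """Return the minimax value of `node` for the player to move."""
    if isinstance(node, int):
        # Leaf: the score is already known.
        return node
    # Evaluate every child with the roles swapped.
    child_values = [minimax(child, not maximising) for child in node]
    # MAX nodes take the largest child value, MIN nodes the smallest.
    return max(child_values) if maximising else min(child_values)


# Hypothetical two-ply tree: a MAX root over three MIN nodes.
tree = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]
print(minimax(tree, maximising=True))  # 3
```

The three MIN nodes evaluate to 3, 2, and 2, so the MAX root picks the first branch. Note that every leaf is visited, which is exactly the cost the rest of the article works to reduce.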

What Is Alpha-Beta Pruning? The Core Pruning Technique Explained

Alpha-Beta Pruning is an adversarial search optimisation that eliminates branches of the game tree that cannot affect the final decision. It runs on top of Minimax, producing identical results while evaluating significantly fewer nodes.

The technique introduces two boundary values that are tracked throughout the search:

- Alpha (α): The best value the Maximiser can guarantee from the current path. Initialised to −∞.

- Beta (β): The best value the Minimiser can guarantee from the current path. Initialised to +∞.

The Pruning Condition

At any point during the search, if the current node’s value makes it impossible for the current player to improve over what they already have, the remaining subtree is pruned — meaning it is not evaluated at all.

Specifically:

• If at a MIN node, the value ≤ alpha → prune. The Maximiser would never choose this path.

• If at a MAX node, the value ≥ beta → prune. The Minimiser would never allow this path.

This is the essence of the pruning technique: if you already know a better option exists elsewhere in the tree, there is no point evaluating a branch that will never be chosen.
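The two pruning conditions can be sketched as follows. The nested-list tree format and the function name `alphabeta` are assumptions for illustration, and the example tree is hypothetical; treat this as one common way to write the algorithm rather than a definitive version.

```python
import math

# Assumed tree format: a leaf is an int score, an internal node is a
# list of child subtrees.


def alphabeta(node, alpha, beta, maximising):
    """Minimax value of `node`, skipping branches that cannot matter."""
    if isinstance(node, int):
        return node
    if maximising:
        value = -math.inf
        for child in node:
            value = max(value, alphabeta(child, alpha, beta, False))
            if value >= beta:
                # The Minimiser above already has a better option.
                break
            alpha = max(alpha, value)
        return value
    value = math.inf
    for child in node:
        value = min(value, alphabeta(child, alpha, beta, True))
        if value <= alpha:
            # The Maximiser above already has a better option.
            break
        beta = min(beta, value)
    return value


# Hypothetical two-ply tree: a MAX root over three MIN nodes.
tree = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]
print(alphabeta(tree, -math.inf, math.inf, True))  # 3
```

In this tree the second MIN node stops after its first leaf (2 ≤ 3), so the leaves 4 and 6 are never evaluated, yet the result matches plain Minimax.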

Alpha-Beta Pruning: A Step-by-Step Walkthrough

Let’s walk through a simplified game tree to see exactly how Alpha-Beta Pruning eliminates branches in practice. This makes the abstract algorithm concrete and intuitive.

The Setup

• Consider a small game tree with three levels: a MAX root, four MIN nodes beneath it, and two leaves under each MIN node.

• The leaf scores, left to right, are [3, 5, 2, 9, 1, 7, 4, 8], so the MIN nodes hold the pairs (3, 5), (2, 9), (1, 7), and (4, 8).

• Alpha starts at −∞, Beta starts at +∞.

The Traversal

1. The algorithm visits the leftmost leaf (score: 3). Beta at the first MIN node updates to 3.

2. It visits the second leaf (score: 5). Since 5 > 3, the MIN node keeps 3 as its value and returns it to the root, where alpha updates to 3.

3. At the second MIN node, the first leaf scores 2. Because 2 ≤ alpha (3), this node can never beat what the Maximiser already has, so its remaining leaf (9) is pruned.

4. The third MIN node is cut the same way: its first leaf scores 1 ≤ 3, so the leaf 7 is pruned.

5. At the fourth MIN node, the first leaf scores 4, which is above alpha, so the second leaf (8) must also be checked. The node’s value is 4, and alpha at the root rises to 4.

The Result

The root’s value is 4, the same decision full Minimax reaches, but only six of the eight leaves were evaluated. In this small example, 2 leaves are skipped. In real games with branching factors of 20–35 and search depths of 6–10 moves, the savings are enormous.
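To check a walkthrough like this by machine, the sketch below records which leaves Alpha-Beta actually evaluates. It assumes the eight leaf scores are grouped into four MIN nodes of two leaves each under a single MAX root; that grouping, the nested-list format, and the `visited` parameter are assumptions made for this example.

```python
import math


def alphabeta(node, alpha, beta, maximising, visited):
    """Alpha-beta search that appends each evaluated leaf to `visited`."""
    if isinstance(node, int):
        visited.append(node)
        return node
    if maximising:
        value = -math.inf
        for child in node:
            value = max(value, alphabeta(child, alpha, beta, False, visited))
            if value >= beta:
                break  # beta cutoff
            alpha = max(alpha, value)
        return value
    value = math.inf
    for child in node:
        value = min(value, alphabeta(child, alpha, beta, True, visited))
        if value <= alpha:
            break  # alpha cutoff
        beta = min(beta, value)
    return value


# Assumed grouping: four MIN nodes, two leaves each.
tree = [[3, 5], [2, 9], [1, 7], [4, 8]]
visited = []
best = alphabeta(tree, -math.inf, math.inf, True, visited)
print(best)     # 4
print(visited)  # [3, 5, 2, 1, 4, 8]
```

Under that grouping the leaves 9 and 7 are never touched: each sits behind a sibling that already scored at or below the root's alpha of 3.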

Search Efficiency: How Much Does Alpha-Beta Pruning Actually Help?

The efficiency gains from Alpha-Beta Pruning depend on the order in which nodes are evaluated. This makes move ordering one of the most important practical considerations when implementing game-playing AI.

Best Case: Optimal Move Ordering

When the best moves are evaluated first, which is what good move-ordering heuristics aim for, Alpha-Beta Pruning achieves its theoretical maximum efficiency.

• Nodes evaluated: O(b^(d/2)) instead of O(b^d)

• For chess with branching factor 35 and depth 6: on the order of 35^3 ≈ 43,000 nodes instead of 35^6 ≈ 1.8 billion

• This effectively doubles the searchable depth for the same computational budget
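The arithmetic behind these figures is easy to reproduce. The sketch below compares the full tree size with the standard best-case leaf count for a uniform tree, b^⌈d/2⌉ + b^⌊d/2⌋ − 1, which is the exact form behind the O(b^(d/2)) bound. The function names are hypothetical, and a uniform branching factor is a simplifying assumption that real games do not satisfy.

```python
def full_minimax_leaves(branching: int, depth: int) -> int:
    """Leaves in a uniform tree: every one is evaluated by plain Minimax."""
    return branching ** depth


def best_case_alphabeta_leaves(branching: int, depth: int) -> int:
    """Leaves evaluated by Alpha-Beta with perfect move ordering."""
    return branching ** ((depth + 1) // 2) + branching ** (depth // 2) - 1


b, d = 35, 6
print(f"{full_minimax_leaves(b, d):,}")         # 1,838,265,625
print(f"{best_case_alphabeta_leaves(b, d):,}")  # 85,749
```

For b = 35 and d = 6 the best case is about 86,000 leaves, roughly twice 35^3, against about 1.8 billion for the full tree.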

Worst Case: Reverse Move Ordering

When the worst moves are evaluated first, almost no pruning occurs, and the algorithm degenerates back toward full Minimax performance. This is why move ordering heuristics are critical in real implementations.

Average Case: Random Move Ordering

With random ordering, Alpha-Beta Pruning still achieves meaningful savings — roughly O(b^(3d/4)) node evaluations. Even without optimised ordering, the pruning technique delivers a substantial improvement over raw Minimax.
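The effect of ordering can be observed directly on a small synthetic tree. The sketch below builds a uniform tree with random distinct leaf scores, then counts evaluated leaves under best-first, unsorted, and worst-first child ordering. The tree shape (branching 3, depth 4), the seed, and all function names are arbitrary choices for this illustration, and the "perfect" ordering is obtained by cheating with a full Minimax pass, which a real engine cannot do.

```python
import math
import random


def minimax(node, maximising):
    if isinstance(node, int):
        return node
    values = [minimax(child, not maximising) for child in node]
    return max(values) if maximising else min(values)


def alphabeta(node, alpha, beta, maximising, counter):
    """Alpha-beta that counts evaluated leaves in counter[0]."""
    if isinstance(node, int):
        counter[0] += 1
        return node
    value = -math.inf if maximising else math.inf
    for child in node:
        score = alphabeta(child, alpha, beta, not maximising, counter)
        if maximising:
            value = max(value, score)
            alpha = max(alpha, value)
        else:
            value = min(value, score)
            beta = min(beta, value)
        if alpha >= beta:
            break  # remaining children cannot change the result
    return value


def reorder(node, maximising, best_first):
    """Copy of the tree with children sorted by their true minimax value."""
    if isinstance(node, int):
        return node
    children = [reorder(child, not maximising, best_first) for child in node]
    # Best-first means descending values at MAX nodes, ascending at MIN nodes.
    descending = maximising == best_first
    return sorted(children, key=lambda c: minimax(c, not maximising),
                  reverse=descending)


def build_tree(branching, depth, values):
    if depth == 0:
        return next(values)
    return [build_tree(branching, depth - 1, values) for _ in range(branching)]


def leaves_visited(tree):
    counter = [0]
    value = alphabeta(tree, -math.inf, math.inf, True, counter)
    return value, counter[0]


B, D = 3, 4
rng = random.Random(0)
tree = build_tree(B, D, iter(rng.sample(range(1000), B ** D)))

results = {
    "best-first": leaves_visited(reorder(tree, True, True)),
    "as generated": leaves_visited(tree),
    "worst-first": leaves_visited(reorder(tree, True, False)),
}
for label, (value, count) in results.items():
    print(f"{label:>12}: value={value}, leaves evaluated={count} of {B ** D}")
```

All three runs return the same root value. Only the leaf counts differ, which is the practical meaning of the best, average, and worst cases described above.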

Heuristic Evaluation: How AI Scores Game States

Neither Minimax nor Alpha-Beta Pruning can evaluate every game tree to its terminal state in complex games. Instead, the search is cut off at a set depth, and a heuristic evaluation function assigns a score to each leaf node reached.

A well-designed heuristic evaluation function is what separates a strong game-playing AI from a weak one. The algorithm is only as smart as its ability to evaluate non-terminal states.

In chess, a heuristic evaluation might consider:

• Material balance (total piece values for each side)

• King safety (open files near the king, pawn shield integrity)

• Piece mobility (number of legal moves available)

• Pawn structure (isolated, doubled, or passed pawns)

• Control of key squares and the centre of the board

The quality of this heuristic directly impacts the quality of the AI’s decisions. Alpha-Beta Pruning maximises how deeply the algorithm can search within a given time budget — but it is the heuristic that determines what the AI considers valuable at each position.
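As a rough illustration of the simplest term in that list, the sketch below computes material balance from the piece-placement field of a FEN string. The piece values (pawn 1, knight and bishop 3, rook 5, queen 9) are the conventional textbook figures, used here as an assumption, and the function name is hypothetical. A real evaluation function would combine many weighted terms, not material alone.

```python
# Conventional piece values in pawn units (an assumption of this sketch).
PIECE_VALUES = {"p": 1, "n": 3, "b": 3, "r": 5, "q": 9, "k": 0}


def material_balance(fen_placement: str) -> int:
    """White material minus Black material for a FEN piece-placement field.

    Uppercase letters are White pieces, lowercase are Black.
    """
    score = 0
    for ch in fen_placement:
        value = PIECE_VALUES.get(ch.lower())
        if value is None:
            # Digits (runs of empty squares) and '/' rank separators.
            continue
        score += value if ch.isupper() else -value
    return score


START = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR"
print(material_balance(START))  # 0

# Same position with White's queenside knight removed.
print(material_balance("rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/R1BQKBNR"))  # -3
```

A positive score favours White (the Maximiser in this convention) and a negative score favours Black.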

Real-World Applications: Where Alpha-Beta Pruning Powers Game-Playing AI

Alpha-Beta Pruning is not a theoretical curiosity. It is the practical foundation of adversarial search in deployed AI systems across competitive games and decision-making problems.

Chess Engines

Most classical chess engines, including Stockfish, one of the strongest chess programs ever built, use Alpha-Beta Pruning as their core search efficiency technique. Modern engines combine it with iterative deepening, transposition tables, and null-move pruning to reach search depths of 20 or more half-moves (plies) in competitive play.

Checkers and Draughts AI

Jonathan Schaeffer’s Chinook, the first computer program to win a world championship title against human players, used Alpha-Beta Pruning as a central component of its adversarial search system. It took the Man-Machine World Championship in checkers in 1994, when reigning champion Marion Tinsley withdrew from their match for health reasons.

Game Tree Search Beyond Board Games

The principles of Alpha-Beta Pruning extend beyond traditional board games:

- Card games like Bridge, where the search can be run over sampled deals; games with hidden information such as Poker generally need other methods

- Real-time strategy games where AI evaluates tactical decision trees

- Economic and negotiation simulations modelling multi-agent adversarial decisions

- Robot planning systems that treat obstacle avoidance as an adversarial optimisation problem

IBM’s Deep Blue, the chess computer that defeated Garry Kasparov in 1997, could evaluate up to 200 million positions per second.

This level of search was made feasible by Alpha-Beta Pruning, which eliminates branches of the game tree that cannot affect the final decision.

By reducing the number of positions that needed to be evaluated, it allowed Deep Blue to reach greater search depth within the limits of the hardware available at the time.

Advanced Optimisations Built on Alpha-Beta Pruning

In practice, modern game-playing AI systems layer additional optimisations on top of basic Alpha-Beta Pruning to push search efficiency even further.

- Iterative Deepening Depth-First Search (IDDFS): The algorithm searches to increasing depths in successive passes. Results from shallower passes improve move ordering for deeper ones, and the search can stop when the clock runs out rather than at a fixed depth.

- Transposition Tables: A hash table that stores previously evaluated positions. If the same board state appears via different move sequences, the cached result is reused rather than recomputed, eliminating redundant work.

- Killer Move Heuristic: Moves that caused a beta cutoff in sibling nodes are tried first in subsequent nodes at the same depth. Since strong moves tend to repeat across similar positions, this dramatically improves move ordering.

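- Null-Move Pruning: The algorithm tests what happens if the current player passes their turn. If a reduced-depth search shows the position is still good enough to cause a beta cutoff even after passing, the current node is pruned. This is a powerful shortcut, but it is occasionally risky in positions where every available move makes things worse (zugzwang).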

- Quiescence Search: Instead of cutting off evaluation at a fixed depth, the algorithm continues searching until a ‘quiet’ position is reached, one with no captures or immediate threats. This reduces the horizon effect, where a tactical sequence is misread at the search boundary.
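Of the optimisations above, iterative deepening is the easiest to sketch. The example below uses a toy game as a stand-in for chess: a pile of stones, each player removes 1 to 3, and whoever takes the last stone wins. The game, the negamax formulation of Alpha-Beta, the `max_depth` parameter (standing in for a clock), and all names are assumptions for illustration.

```python
import math

MOVES = (1, 2, 3)  # stones a player may remove per turn


def negamax(stones, depth, alpha, beta):
    """Score for the side to move: +1 win, -1 loss, 0 unknown at cutoff."""
    if stones == 0:
        return -1  # the previous player took the last stone and won
    if depth == 0:
        return 0   # depth cutoff: a trivial heuristic with no information
    value = -math.inf
    for take in MOVES:
        if take > stones:
            continue
        value = max(value, -negamax(stones - take, depth - 1, -beta, -alpha))
        alpha = max(alpha, value)
        if alpha >= beta:
            break  # cutoff: the opponent will avoid this line
    return value


def iterative_deepening(stones, max_depth):
    """Search depth 1, 2, ..., max_depth and return the last best move."""
    best_move = None
    for depth in range(1, max_depth + 1):
        moves = [m for m in MOVES if m <= stones]
        # Try the previous pass's best move first to tighten alpha early.
        if best_move in moves:
            moves.remove(best_move)
            moves.insert(0, best_move)
        alpha = best_value = -math.inf
        for take in moves:
            value = -negamax(stones - take, depth - 1, -math.inf, -alpha)
            if value > best_value:
                best_value, best_move = value, take
            alpha = max(alpha, best_value)
    return best_move


print(iterative_deepening(5, 6))  # 1
print(iterative_deepening(7, 6))  # 3
```

In both cases the chosen move leaves the opponent with four stones, a losing position in this game. A real engine would replace the fixed `max_depth` with a time check and would typically reuse much more information between passes, for example through a transposition table.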

Limitations of Alpha-Beta Pruning You Should Understand

Alpha-Beta Pruning is powerful, but understanding its limitations is essential for building reliable game-playing AI systems.

- Performance depends on move ordering: Without good heuristics to order moves well, pruning efficiency collapses toward Minimax performance. The algorithm is only as efficient as its move ordering strategy.

- Heuristic evaluation quality is critical: If the evaluation function is inaccurate, Alpha-Beta Pruning will efficiently search toward the wrong answer. Garbage in, garbage out at high speed.

- Does not handle randomness natively: Alpha-Beta Pruning is designed for deterministic, perfect-information games. Stochastic games (like backgammon with dice) require extensions such as the Expectiminimax algorithm.

- Horizon effect: The fixed search depth can cause the AI to miss tactical sequences that extend just beyond its evaluation horizon. Quiescence search mitigates but does not fully eliminate this problem.

- Imperfect information games: In games where players have private information, like poker, standard Alpha-Beta Pruning cannot be directly applied. Alternative frameworks, such as counterfactual regret minimisation, are required.


Conclusion

Alpha-Beta Pruning is one of the most elegant ideas in artificial intelligence. It solves a fundamental problem, the exponential cost of game tree search, by making a simple but powerful observation: you do not need to evaluate a branch if you already know it cannot change your decision.

By maintaining the alpha and beta boundaries during Minimax traversal, the pruning technique can cut the effective search space from O(b^d) to O(b^(d/2)) under optimal conditions. This doubles the searchable depth for the same computational cost, a transformation that turns impractical search problems into tractable ones.

From IBM’s Deep Blue to modern open-source chess engines like Stockfish, Alpha-Beta Pruning has been at the core of the strongest game-playing AI systems ever built. Its principles extend into decision-making, planning, and adversarial optimisation far beyond the chessboard.

Understanding Alpha-Beta Pruning is not just an academic exercise. It is a window into how intelligent systems make optimal decisions under computational constraints, and that is a problem at the heart of AI itself.

FAQs

1. What is the difference between Minimax and Alpha-Beta Pruning?

Minimax is the foundational algorithm that evaluates every node in the game tree to find the optimal move. Alpha-Beta Pruning is an optimisation applied to Minimax that eliminates branches that cannot affect the final result. Both produce identical decisions. Alpha-Beta simply arrives at the same answer by evaluating far fewer nodes.

2. Does Alpha-Beta Pruning always find the optimal move?

Yes, relative to Minimax. Alpha-Beta Pruning is a lossless optimisation: it never prunes a branch that could change the result, so the final value is always identical to running full Minimax to the same depth with the same evaluation function. When the search is cut off before the end of the game, the move is only as good as that depth and evaluation allow.

3. Why is move ordering so important for Alpha-Beta Pruning?

Move ordering determines how many branches get pruned. When the best moves are evaluated first, the alpha and beta bounds tighten quickly, causing more branches to be cut. In the best case, the cost drops from O(b^d) to O(b^(d/2)), which roughly doubles the depth reachable in the same time. Poor move ordering means fewer cuts and performance approaching that of full Minimax.

4. Can Alpha-Beta Pruning be used in games with randomness?

Standard Alpha-Beta Pruning is designed for deterministic, perfect-information games. For games that include random elements, such as dice in backgammon, an extension called Expectiminimax is used. It adds chance nodes to the game tree alongside MAX and MIN nodes to represent probabilistic outcomes.
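A minimal sketch of the chance-node idea follows. The tuple-based tree format, the node labels, and the example probabilities are assumptions made for this illustration. No pruning is attempted here, since pruning at chance nodes needs known bounds on leaf scores.

```python
def expectiminimax(node):
    """Value of a tree with MAX, MIN, and chance nodes.

    Assumed format: a leaf is a number; an internal node is a pair
    (kind, children). For "max" and "min", children is a list of
    subtrees. For "chance", children is a list of (probability, subtree).
    """
    if isinstance(node, (int, float)):
        return node
    kind, children = node
    if kind == "max":
        return max(expectiminimax(child) for child in children)
    if kind == "min":
        return min(expectiminimax(child) for child in children)
    if kind == "chance":
        # Probability-weighted average over the random outcomes.
        return sum(p * expectiminimax(child) for p, child in children)
    raise ValueError(f"unknown node kind: {kind!r}")


# Hypothetical position: MAX picks between a coin-flip branch and a
# certain branch; the opponent (MIN) replies after the random event.
tree = ("max", [
    ("chance", [(0.5, ("min", [3, 5])), (0.5, ("min", [8, 10]))]),
    ("chance", [(1.0, ("min", [4, 6]))]),
])
print(expectiminimax(tree))  # 5.5
```

The coin-flip branch is worth 0.5 × 3 + 0.5 × 8 = 5.5 on average, which beats the certain 4, so the Maximiser prefers it even though its worst outcome (3) is lower.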

5. How is Alpha-Beta Pruning used in modern AI systems beyond games?

The adversarial search principles underlying Alpha-Beta Pruning are applied across many decision-making domains: automated planning in robotics, competitive multi-agent economic simulations, AI in real-time strategy games, and negotiation systems. Any domain requiring optimal decision-making against an adversarial opponent can benefit from these techniques.
