In today’s data-driven world, companies generate massive amounts of data every second, and processing this data quickly is a real challenge. Traditional data processing tools struggle when data becomes too large or needs to be analyzed in real time. This is where Apache Spark comes into the picture, offering a faster and more efficient way to work with big data.

Apache Spark is a powerful open-source big data processing framework designed to handle large datasets at high speed. This blog explains what Apache Spark is, how it works, where it is used, its key features, and why it has become so popular in big data and data engineering.

Quick Answer

Apache Spark is an open-source tool for processing large amounts of data very quickly. Companies use it to run big data batch jobs, analyze data in near real time, and build data-driven applications. It is popular because its APIs are easy to use and, because it keeps data in memory, it is often much faster than older disk-based tools such as Hadoop MapReduce.

Table of contents

- What Is Apache Spark
- How Apache Spark Works
- Components Of Apache Spark
  - Spark Core
  - Resilient Distributed Datasets (RDDs)
  - Spark SQL
  - DataFrames And Datasets
  - Spark Streaming
  - Structured Streaming
  - MLlib
  - GraphX
  - Cluster Manager
- Why Apache Spark Is Used
- Key Features Of Apache Spark
- 💡 Did You Know?
- Conclusion
- FAQs
  - Is Apache Spark easy to learn for beginners?
  - What is Apache Spark mainly used for?
  - How is Apache Spark different from Hadoop?
  - Can Apache Spark handle real-time data?
  - Do I need Hadoop to use Apache Spark?

What Is Apache Spark

Apache Spark is an open-source big data processing framework used to process and analyze very large amounts of data efficiently. It keeps working data in memory instead of repeatedly reading it from disk, which makes it much faster than disk-based frameworks such as Hadoop MapReduce, especially for jobs that reuse the same data several times. Apache Spark is widely used for data analytics, real-time data processing, machine learning, and large-scale data applications.

For example, imagine an e-commerce website that collects millions of user clicks, searches, and purchases every day. With Apache Spark, this data can be processed quickly to find popular products, analyze customer behavior, and generate recommendations in near real time. A traditional disk-based batch job over the same data could take hours instead of minutes.

How Apache Spark Works

Apache Spark works by dividing large datasets into smaller chunks, called partitions, and processing them in parallel across multiple computers. Instead of reading data from disk again and again, Spark keeps intermediate data in memory whenever it fits, which greatly improves processing speed. It uses a master-worker architecture: a driver program breaks each job into tasks, and a cluster manager provides executors on worker machines that run those tasks in parallel.

For example, if a company wants to analyze one year of sales data, Spark splits the data across different machines. Each machine processes its part at the same time, and the results are combined at the end. This parallel and in-memory approach allows Spark to finish complex data processing tasks much quicker than traditional systems.
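The split, process-in-parallel, combine pattern described above can be sketched in plain Python. This is a toy model under stated assumptions, not Spark code: the sales records are hypothetical, and threads from `concurrent.futures` stand in for worker machines. In PySpark the equivalent job would be roughly `df.groupBy("region").sum("amount")`, with Spark handling partitioning, scheduling, and combining for you.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sales records: (region, amount). In Spark these would live in
# files spread across a cluster; here they are a small in-memory list.
SALES = [
    ("north", 120.0), ("south", 80.0), ("north", 45.5), ("east", 200.0),
    ("south", 30.0), ("east", 15.0), ("north", 60.0), ("west", 99.5),
]


def split_into_partitions(records, num_partitions):
    """Split records into roughly equal chunks, one per worker."""
    return [records[i::num_partitions] for i in range(num_partitions)]


def sum_by_region(partition):
    """Map step: each worker totals sales for its own chunk only."""
    totals = Counter()
    for region, amount in partition:
        totals[region] += amount
    return totals


def combine(partial_results):
    """Reduce step: merge the per-partition totals into one result."""
    result = Counter()
    for partial in partial_results:
        result.update(partial)  # Counter.update adds values key by key
    return dict(result)


def total_sales_by_region(records, num_partitions=3):
    partitions = split_into_partitions(records, num_partitions)
    # Threads stand in for worker machines; each handles one partition.
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partials = list(pool.map(sum_by_region, partitions))
    return combine(partials)


if __name__ == "__main__":
    print(total_sales_by_region(SALES))
```

The answer does not depend on how many partitions are used; only the amount of parallel work per worker changes.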

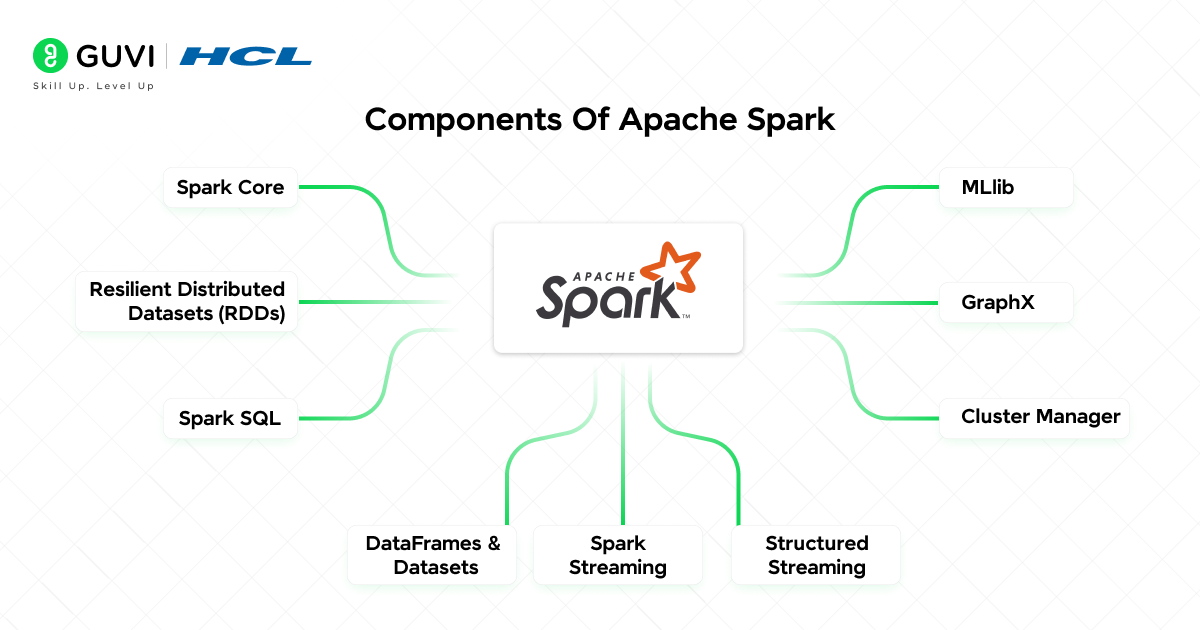

Components Of Apache Spark

Apache Spark is built using multiple components, each designed to handle a specific type of data processing task. These components work together to make Spark fast, flexible, and suitable for different big data use cases. Below are the main components of Apache Spark, explained clearly for beginners.

1. Spark Core

Spark Core is the foundation of Apache Spark and is responsible for all basic operations. It handles task scheduling, memory management, fault tolerance, and communication between different machines in a cluster. Every Spark job runs on Spark Core, making it the backbone of the entire framework. Without Spark Core, none of the other Spark components can function.

Key Features:

- Manages distributed job execution

- Handles memory and resource allocation

- Provides fault tolerance using RDDs

- Acts as the base for all Spark modules

2. Resilient Distributed Datasets (RDDs)

RDDs are Spark's fundamental low-level data abstraction for distributed processing. They split data across multiple machines and allow operations to be performed on each piece in parallel. RDDs are immutable, meaning the data cannot be changed once created; every transformation produces a new RDD, which helps maintain consistency. Spark also records the lineage of steps used to build each RDD, so if a partition is lost, it can be recomputed automatically.

Key Features:

- Stores data across a cluster

- Enables parallel data processing

- Automatically recovers from failures

- Supports transformations and actions
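The RDD ideas listed above (immutability, lazy transformations, actions, and lineage-based recovery) can be modeled in a few lines of Python. `ToyRDD` and `lose_partition` are hypothetical names for this single-process sketch; method names such as `map`, `filter`, `persist`, `collect`, and `count` echo real RDD methods, but this is not PySpark's implementation.

```python
class ToyRDD:
    """Toy single-process model of an RDD (not PySpark's class)."""

    def __init__(self, compute, num_partitions, lineage):
        self._compute = compute          # partition index -> list of items
        self.num_partitions = num_partitions
        self.lineage = lineage           # steps used to build this dataset
        self._persisted = None           # dict of stored partitions, if persisted
        self.compute_calls = 0           # how often a partition was (re)computed

    @staticmethod
    def parallelize(data, num_partitions):
        chunks = [list(data[i::num_partitions]) for i in range(num_partitions)]
        return ToyRDD(lambda i: list(chunks[i]), num_partitions, ["parallelize"])

    # Transformations are lazy: they only return a new, immutable ToyRDD.
    def map(self, fn):
        return ToyRDD(lambda i: [fn(x) for x in self._get(i)],
                      self.num_partitions, self.lineage + ["map"])

    def filter(self, pred):
        return ToyRDD(lambda i: [x for x in self._get(i) if pred(x)],
                      self.num_partitions, self.lineage + ["filter"])

    def persist(self):
        self._persisted = {}
        return self

    def lose_partition(self, i):
        """Hypothetical helper that simulates a worker failure."""
        if self._persisted is not None:
            self._persisted.pop(i, None)

    def _get(self, i):
        """Return partition i, computing it from lineage if it isn't stored."""
        if self._persisted is not None and i in self._persisted:
            return self._persisted[i]
        self.compute_calls += 1
        data = self._compute(i)
        if self._persisted is not None:
            self._persisted[i] = data
        return data

    # Actions trigger the actual computation.
    def collect(self):
        out = []
        for i in range(self.num_partitions):
            out.extend(self._get(i))
        return out

    def count(self):
        return sum(len(self._get(i)) for i in range(self.num_partitions))


if __name__ == "__main__":
    numbers = ToyRDD.parallelize(list(range(10)), num_partitions=3)
    evens_squared = numbers.filter(lambda x: x % 2 == 0).map(lambda x: x * x).persist()
    print(evens_squared.lineage)     # ['parallelize', 'filter', 'map']
    print(evens_squared.collect())   # [0, 36, 16, 4, 64]
    evens_squared.lose_partition(1)  # simulate a failed worker
    print(evens_squared.collect())   # only partition 1 is rebuilt from lineage
```

Note that `numbers` is never modified: `filter` and `map` each return a new dataset, and only the lost partition is recomputed when the second action runs.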

3. Spark SQL

Spark SQL is used to process structured and semi-structured data using SQL queries. It allows users to work with large datasets using familiar SQL syntax instead of complex programming logic. Spark SQL also introduces DataFrames, which make data processing more efficient and readable. This component is widely used by data analysts and engineers.

Key Features:

- Supports SQL queries on big data

- Works with structured data and tables

- Integrates with Hive and databases

- Optimizes queries for better performance
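Spark SQL lets you run ordinary SQL against distributed data. In PySpark you would typically register a DataFrame with `df.createOrReplaceTempView("orders")` and run a query with `spark.sql(...)`. So that the snippet runs without a Spark installation, the sketch below runs the same kind of SQL against Python's built-in `sqlite3` module instead; the `orders` table and its data are hypothetical.

```python
import sqlite3

# Hypothetical order data: (product, quantity, unit price). In Spark this
# would usually be a DataFrame loaded from files or a table.
ORDERS = [
    ("laptop", 2, 900.0),
    ("phone", 5, 400.0),
    ("laptop", 1, 950.0),
    ("headphones", 10, 50.0),
]

# Plain SQL that Spark SQL would also accept once "orders" is registered.
QUERY = """
    SELECT product,
           SUM(quantity)         AS units,
           SUM(quantity * price) AS revenue
    FROM orders
    GROUP BY product
    ORDER BY revenue DESC
"""


def revenue_by_product(rows):
    """Load rows into an in-memory table and run the aggregation query."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE orders (product TEXT, quantity INTEGER, price REAL)")
        conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
        return conn.execute(QUERY).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    for product, units, revenue in revenue_by_product(ORDERS):
        print(product, units, revenue)
```

The point is the query itself: the same declarative SQL works whether the table holds four rows or billions spread across a cluster.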

4. DataFrames And Datasets

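DataFrames and Datasets provide a higher-level abstraction for working with structured data in Spark. A DataFrame organizes data into named columns, much like a database table, while a Dataset adds compile-time type safety and is available in Scala and Java. Both make code easier to read and maintain than RDD code, and because Spark knows their structure, its Catalyst optimizer can automatically choose more efficient execution plans. They are commonly used in modern Spark applications.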

Key Features:

- Provides structured data representation

- Easier to use than RDDs

- Improves performance through optimization

- Available in Python, Scala, Java, and R (typed Datasets in Scala and Java only)
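Why does a declarative DataFrame let Spark optimize automatically? Because Spark sees the whole plan before running it, it can reorder steps. The sketch below shows one such rewrite, predicate pushdown, in the spirit of what Catalyst does. The plan format, `optimize`, and `execute` are hypothetical and far simpler than Catalyst; in real PySpark you would write something like `df.withColumn(...).filter(...)` and could inspect Spark's chosen plan with `df.explain()`.

```python
# Hypothetical logical-plan format (not Catalyst's): a list of steps run in order.
#   ("with_column", name, fn)  adds a computed column to every row
#   ("filter", column, value)  keeps rows where row[column] == value


def optimize(plan):
    """Toy predicate pushdown: move each filter ahead of earlier with_column
    steps that don't produce the column it tests, so fewer rows reach them."""
    optimized = []
    for step in plan:
        if step[0] == "filter":
            pos = len(optimized)
            while (pos > 0 and optimized[pos - 1][0] == "with_column"
                   and optimized[pos - 1][1] != step[1]):
                pos -= 1
            optimized.insert(pos, step)
        else:
            optimized.append(step)
    return optimized


def execute(plan, rows):
    """Run a plan; also report how many rows the with_column steps processed."""
    work = 0
    for step in plan:
        if step[0] == "with_column":
            _, name, fn = step
            work += len(rows)
            rows = [{**row, name: fn(row)} for row in rows]
        elif step[0] == "filter":
            _, column, value = step
            rows = [row for row in rows if row[column] == value]
    return rows, work


PEOPLE = [
    {"name": "Asha", "country": "IN", "salary": 100},
    {"name": "Ben", "country": "US", "salary": 200},
    {"name": "Chen", "country": "IN", "salary": 150},
    {"name": "Dana", "country": "UK", "salary": 120},
]
# Written "naively": compute a bonus for everyone, then keep only India.
PLAN = [
    ("with_column", "bonus", lambda row: row["salary"] // 10),
    ("filter", "country", "IN"),
]

if __name__ == "__main__":
    naive_rows, naive_work = execute(PLAN, PEOPLE)
    fast_rows, fast_work = execute(optimize(PLAN), PEOPLE)
    print(naive_work, fast_work)     # 4 2
    print(naive_rows == fast_rows)   # True
```

The optimized plan returns the same rows but computes the bonus for only two people instead of four. A filter on the computed `bonus` column itself is left in place, since moving it earlier would change the result.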

5. Spark Streaming

Spark Streaming, the original DStream-based streaming API, enables Apache Spark to process live data streams in near real time. It works by dividing incoming data into small batches, called micro-batches, and processing them one after another. This component is useful for applications that need to analyze logs, events, or sensor data as it arrives. Spark Streaming makes real-time analytics simpler to implement.

Key Features:

- Processes real-time data streams

- Works with sources such as Kafka and Amazon Kinesis

- Supports near real-time processing

- Integrates with Spark Core
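The micro-batch model described above can be illustrated with a small, hypothetical log stream. This is a plain-Python sketch, not the DStream API: in real Spark Streaming the batch interval is set when you create a `StreamingContext`, and Spark collects each interval's data for you. Here the events are assumed to arrive in time order, and a running count per log level is carried from batch to batch.

```python
from collections import Counter

# Hypothetical event stream: (arrival_time_in_seconds, log_level), time-ordered.
EVENTS = [
    (0.2, "INFO"), (0.7, "ERROR"), (1.1, "INFO"), (1.9, "INFO"),
    (2.4, "ERROR"), (2.5, "ERROR"), (3.8, "WARN"),
]


def micro_batches(events, batch_interval):
    """Cut a time-ordered stream into consecutive fixed-length batches."""
    current_id, current = 0, []
    for ts, payload in events:
        batch_id = int(ts // batch_interval)
        while batch_id > current_id:   # close finished intervals (even empty ones)
            yield current_id, current
            current_id, current = current_id + 1, []
        current.append(payload)
    yield current_id, current


def run_stream(events, batch_interval=1.0):
    """Process each batch on its own, then fold it into a running total."""
    running = Counter()
    results = []
    for batch_id, batch in micro_batches(events, batch_interval):
        batch_counts = Counter(batch)   # work done on one small batch
        running.update(batch_counts)    # state carried across batches
        results.append((batch_id, dict(batch_counts), dict(running)))
    return results


if __name__ == "__main__":
    for batch_id, batch_counts, running in run_stream(EVENTS):
        print(batch_id, batch_counts, running)
```

Each batch is small enough to process quickly, which is what gives micro-batching its near real-time behavior.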

6. Structured Streaming

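Structured Streaming is the newer stream-processing engine built on Spark SQL, and it is the recommended replacement for the older Spark Streaming API. It treats streaming data as a continuously growing table, so you can write the same DataFrame or SQL queries you would use on static data. This approach provides stronger fault tolerance and consistency, including exactly-once processing guarantees when used with replayable sources and idempotent sinks. Structured Streaming is preferred for modern real-time data pipelines.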

Key Features:

- Uses SQL-like operations on streams

- Provides strong fault tolerance

- Simplifies real-time data processing

- Suitable for live analytics
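The "continuously growing table" idea can be sketched as a running aggregation that is updated incrementally each time new rows are appended, rather than recomputed from scratch. The class below is a hypothetical plain-Python model, not Spark's engine. The `"complete"` and `"update"` names mirror two of Structured Streaming's real output modes; in PySpark a comparable query would be built with `spark.readStream`, `groupBy("page").count()`, and `writeStream.outputMode("complete")`.

```python
from collections import defaultdict


class UnboundedTableQuery:
    """Toy model: a count-per-key query over an ever-growing input table."""

    def __init__(self, key_column):
        self.key_column = key_column
        self.counts = defaultdict(int)   # aggregation state kept between triggers
        self.rows_seen = 0               # size of the conceptual input table

    def trigger(self, new_rows, output_mode="complete"):
        """Process only the rows appended since the last trigger."""
        changed = set()
        for row in new_rows:
            key = row[self.key_column]
            self.counts[key] += 1
            changed.add(key)
        self.rows_seen += len(new_rows)
        if output_mode == "complete":    # emit the whole result table
            return dict(self.counts)
        if output_mode == "update":      # emit only rows whose value changed
            return {key: self.counts[key] for key in changed}
        raise ValueError(f"unsupported output mode: {output_mode}")


if __name__ == "__main__":
    query = UnboundedTableQuery("page")
    print(query.trigger([{"page": "home"}, {"page": "cart"}]))
    print(query.trigger([{"page": "home"}], output_mode="update"))
    print(query.trigger([]))
```

Although the input table keeps growing, each trigger only touches the newly appended rows, which is what keeps the query cheap over time.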

7. MLlib

MLlib is Apache Spark’s machine learning library, designed for training models on large datasets. It provides ready-to-use algorithms for building machine learning models on big data. By distributing the computation across a cluster, MLlib can train models faster and on data too large for a single machine. It is widely used in data science and analytics applications.

Key Features:

- Supports classification and regression

- Provides clustering algorithms

- Scales machine learning tasks

- Integrates with Spark pipelines
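One common way distributed computing speeds up training is data parallelism: each partition computes a gradient on its own rows, and the partial gradients are summed before each update. The sketch below shows that idea with plain gradient descent for a one-variable linear regression. It is a toy under stated assumptions (hypothetical data generated from y = 2x + 1, a fixed learning rate), not MLlib's code; MLlib's own optimizers are more sophisticated, and in PySpark you would normally just use a model such as `pyspark.ml.regression.LinearRegression`.

```python
# Hypothetical training data for y = 2x + 1, already split into partitions.
PARTITIONS = [
    [(1.0, 3.0), (2.0, 5.0)],
    [(3.0, 7.0), (4.0, 9.0)],
    [(5.0, 11.0)],
]


def partial_gradient(partition, w, b):
    """Worker side: squared-error gradient summed over one partition."""
    gw = gb = 0.0
    for x, y in partition:
        err = (w * x + b) - y
        gw += 2 * err * x
        gb += 2 * err
    return gw, gb, len(partition)


def train(partitions, lr=0.05, epochs=1000):
    """Driver side: combine partial gradients, then take one step per epoch."""
    w = b = 0.0
    for _ in range(epochs):
        # "Map": every partition computes its gradient independently.
        parts = [partial_gradient(p, w, b) for p in partitions]
        # "Reduce": sum the pieces into the full-dataset (mean) gradient.
        gw = sum(p[0] for p in parts)
        gb = sum(p[1] for p in parts)
        n = sum(p[2] for p in parts)
        w -= lr * gw / n
        b -= lr * gb / n
    return w, b


if __name__ == "__main__":
    w, b = train(PARTITIONS)
    print(f"w = {w:.3f}, b = {b:.3f}")
```

Because only small gradient vectors travel back to the driver, the raw training data never has to leave the machines that store it.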

8. GraphX

GraphX is used to process graph-based data and analyze relationships between data points. It is helpful for applications like social networks, recommendation systems, and network analysis. GraphX combines graph computation with Spark’s data processing capabilities, which makes it efficient for handling complex relationship data. Note that GraphX is used from Scala and is not available in PySpark.

Key Features:

- Processes graph-structured data

- Supports graph algorithms like PageRank

- Analyzes relationships efficiently

- Integrates with Spark Core
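PageRank, mentioned above, scores each node by how many important nodes point to it. The sketch below is a textbook iterative PageRank on a tiny, hypothetical "who follows whom" graph, written in plain Python to show what the algorithm computes. It is not GraphX's implementation: GraphX offers PageRank as a built-in (for example `graph.pageRank(0.0001)` in Scala), and its scaling of the scores may differ from the normalized version here.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Iterative PageRank on a small directed graph.

    links maps each node to the list of nodes it links to. Every node must
    appear as a key (use an empty list for nodes with no outgoing links).
    """
    nodes = list(links)
    n = len(nodes)
    ranks = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Rank held by nodes with no out-links is spread evenly over all nodes.
        dangling = sum(ranks[node] for node in nodes if not links[node])
        new_ranks = {node: (1 - damping) / n + damping * dangling / n for node in nodes}
        for node, targets in links.items():
            if targets:
                share = ranks[node] / len(targets)
                for target in targets:
                    new_ranks[target] += damping * share
        ranks = new_ranks
    return ranks


# Hypothetical follow graph: an edge A -> B means "A follows B".
GRAPH = {
    "alice": ["bob"],
    "bob": ["carol"],
    "carol": ["alice", "bob"],
    "dave": ["bob"],
}

if __name__ == "__main__":
    for user, score in sorted(pagerank(GRAPH).items(), key=lambda kv: -kv[1]):
        print(f"{user:6s} {score:.3f}")
```

Here `bob`, followed by three users, ends up with the highest score, while `dave`, whom nobody follows, keeps only the baseline share.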

9. Cluster Manager

The cluster manager controls how Apache Spark runs across multiple machines. It manages resource allocation and decides where Spark jobs should run. Spark supports different cluster managers depending on deployment needs. This component ensures efficient use of system resources.

Key Features:

- Allocates resources to applications

- Manages cluster nodes

- Supports Standalone, YARN, Kubernetes, and Mesos (Mesos support is deprecated as of Spark 3.2)

- Ensures efficient job execution
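In practice, you usually choose the cluster manager through the master URL passed to `spark-submit --master ...` (for example `--master yarn`). The helper below is hypothetical, not part of Spark; it simply encodes the documented master URL formats to show which URL selects which cluster manager.

```python
def cluster_manager_for(master):
    """Return which cluster manager a Spark master URL selects.

    Follows the master URL formats documented for spark-submit; this helper
    itself is a hypothetical illustration, not a Spark API.
    """
    if master == "local" or master.startswith("local["):
        return "local"        # runs in a single process; no cluster manager
    if master.startswith("spark://"):
        return "standalone"   # Spark's built-in cluster manager
    if master == "yarn":
        return "yarn"         # Hadoop YARN; cluster found via HADOOP_CONF_DIR or YARN_CONF_DIR
    if master.startswith("k8s://"):
        return "kubernetes"   # e.g. k8s://https://<api-server-host>:<port>
    if master.startswith("mesos://"):
        return "mesos"        # deprecated since Spark 3.2
    raise ValueError(f"unrecognized master URL: {master!r}")


if __name__ == "__main__":
    for url in ["local[*]", "spark://master-host:7077", "yarn", "k8s://https://example.com:443"]:
        print(url, "->", cluster_manager_for(url))
```

Because only the master URL changes, the same application code can move from a laptop (`local[*]`) to a production cluster.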

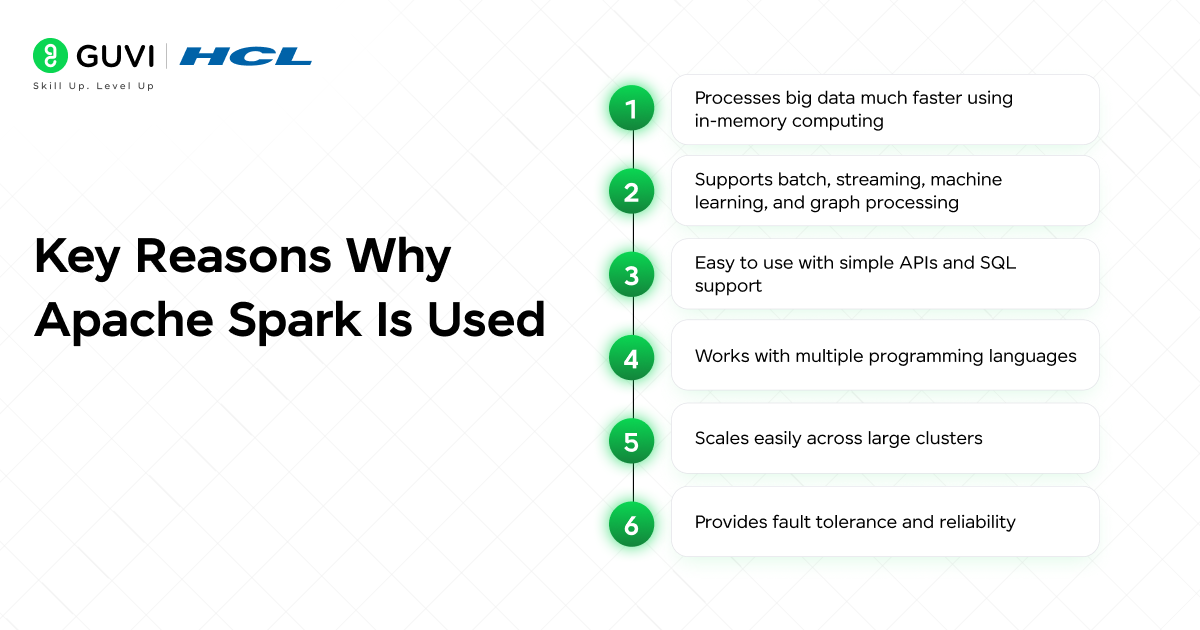

Why Apache Spark Is Used

Apache Spark is widely used because it can process large amounts of data much faster than traditional big data tools. It performs most operations in memory instead of repeatedly reading from disk, which significantly improves speed. Spark also supports multiple types of data processing, making it a single solution for different big data needs.

Another reason Spark is popular is its ease of use and flexibility. Developers can write Spark programs using simple APIs and work with batch data, real-time streams, machine learning, and graph processing within the same framework. This makes Spark suitable for beginners as well as large enterprises.

Key Reasons Why Apache Spark Is Used:

- Processes big data much faster using in-memory computing

- Supports batch, streaming, machine learning, and graph processing

- Easy to use with simple APIs and SQL support

- Works with multiple programming languages

- Scales easily across large clusters

- Provides fault tolerance and reliability

If you want to dive deeper into big data processing and analytics, check out HCL GUVI’s Big Data Engineering course. The program helps you build practical skills in handling large datasets, working with frameworks such as Apache Spark and Hadoop, and building real-world data pipelines. It is ideal for beginners and data professionals who want a career in data engineering and analytics.

Key Features Of Apache Spark

Apache Spark comes with several powerful capabilities that help process large-scale data quickly and efficiently. These features make Spark suitable for beginners as well as experienced professionals working with big data. Each feature focuses on improving speed, scalability, and ease of use across different data workloads.

- In-Memory Processing – Spark keeps data in memory instead of writing intermediate results to disk, which makes computations much faster.

- Batch And Real-Time Processing – Spark supports both batch jobs and real-time data streams within the same framework.

- Multi-Language Support – Spark allows developers to write applications using Python, Java, Scala, or R.

- Built-In Libraries – Spark provides ready-to-use libraries for SQL, machine learning, graph processing, and streaming.

- High Scalability – The same Spark application can run on a single machine or on clusters of thousands of nodes, usually with little or no code change.

- Fault Tolerance – Spark automatically recovers lost data by recomputing it from lineage information when failures occur.
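In-memory processing pays off most when the same intermediate result is used more than once. In Spark you request this with `df.cache()` or `persist()`, which keeps a dataset in memory after it is first computed. The plain-Python sketch below models that idea: `CountingSource`, `cleaned`, and `run_two_actions` are hypothetical names, and the "disk" is just a list that counts how often it is read.

```python
class CountingSource:
    """Hypothetical stand-in for data on disk; counts how often it is read."""

    def __init__(self, records):
        self._records = records
        self.reads = 0

    def read(self):
        self.reads += 1
        return list(self._records)


def cleaned(source):
    """An 'expensive' intermediate step: parse numbers, drop bad records."""
    return [int(r) for r in source.read() if r.strip().isdigit()]


def run_two_actions(source, cache):
    """Run two computations that both need the cleaned data."""
    memo = None

    def get_cleaned():
        nonlocal memo
        if not cache:
            return cleaned(source)   # recompute from the source every time
        if memo is None:
            memo = cleaned(source)   # first use: compute and keep in memory
        return memo

    total = sum(get_cleaned())       # first action
    largest = max(get_cleaned())     # second action
    return total, largest


RAW = ["10", "x", "25", " 7 "]

if __name__ == "__main__":
    for use_cache in (False, True):
        src = CountingSource(RAW)
        print(use_cache, run_two_actions(src, use_cache), "reads:", src.reads)
```

Both runs give the same answer, but with caching the source is read once instead of twice; on a real cluster, that skipped read can mean skipping a full scan of a large dataset.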

💡 Did You Know?

- Apache Spark was originally developed at UC Berkeley before becoming an Apache open-source project.

- Spark can run on top of Hadoop and use HDFS without replacing existing big data systems.

- It processes data in memory, which makes it much faster than traditional disk-based frameworks.

Conclusion

Apache Spark has become a core technology in the big data ecosystem because it makes large-scale data processing faster, simpler, and more efficient. Its ability to handle batch processing, real-time streaming, and machine learning within a single framework makes it highly valuable for modern data-driven applications.

For beginners, Spark offers an easier entry into big data with support for multiple programming languages and a clear processing model. As data volumes continue to grow, understanding Apache Spark helps build a strong foundation for careers in data engineering, analytics, and machine learning.

FAQs

1. Is Apache Spark easy to learn for beginners?

Yes, Apache Spark is beginner-friendly, especially if you already know Python, Java, or SQL. Its simple APIs and clear processing model make it easier to understand compared to older big data tools.

2. What is Apache Spark mainly used for?

Apache Spark is mainly used for processing large amounts of data quickly. It is commonly used in data analytics, real-time data processing, machine learning, and big data applications.

3. How is Apache Spark different from Hadoop?

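Apache Spark keeps data in memory between processing steps, so it is usually much faster than Hadoop MapReduce, which writes intermediate results to disk. Spark is also more flexible, supporting batch, streaming, SQL, and machine learning workloads in one framework. The two are not mutually exclusive: Spark can run on Hadoop YARN and read data from HDFS.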

4. Can Apache Spark handle real-time data?

Yes, Apache Spark supports near real-time data processing through Structured Streaming and the older Spark Streaming API. This makes it suitable for applications like live analytics, monitoring systems, and event processing.

5. Do I need Hadoop to use Apache Spark?

No, Apache Spark can run independently without Hadoop. However, it can also work with Hadoop for storage using HDFS if needed.
