Build an LLM Evaluation Framework: A Complete Guide

Mar 06, 2026 (Last Updated)

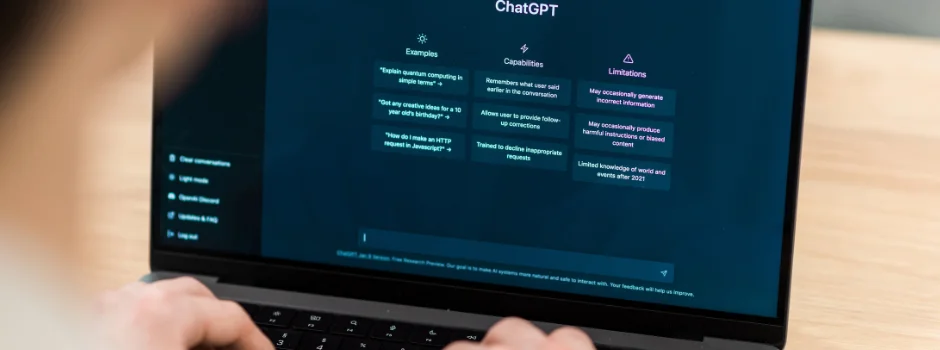

Have you ever wondered how companies know whether their AI chatbot or language model is actually giving correct and reliable answers? Large Language Models (LLMs) can generate impressive responses, but they can also produce inaccurate information, hallucinate facts, or give answers that sound confident yet are completely wrong. That raises an important question: how do developers measure whether an LLM is performing well or not?

This is where an LLM evaluation framework becomes essential. Instead of relying on guesswork or manually checking a few responses, developers create a structured system that tests model outputs using datasets, metrics, and automated scoring methods.

In this article, you’ll learn what an LLM evaluation framework is, why it matters, and how to build a simple one step by step, with code, to test and improve your language model.

Quick Answer:

An LLM evaluation framework is a structured system used to test and measure how well a large language model performs by running prompts against a dataset and scoring the responses using metrics like accuracy, relevance, and similarity. It helps developers detect errors, compare models, and continuously improve AI output quality.

Table of contents

- What is an LLM Evaluation Framework?
- Why Evaluate LLMs?
- Popular Tools and Frameworks
- Evaluation Approaches
- Step-by-Step: Build a Simple Evaluation Framework
  - Define Your Use Case & Success Criteria
  - Assemble an Evaluation Dataset
  - Generate Model Responses
  - Score the Outputs
  - Review and Iterate
- Example: A Basic Evaluation Script
- Key Metrics to Track
- Conclusion
- FAQs
  - What is an LLM evaluation framework?
  - Why is evaluating an LLM important?
  - What metrics are commonly used in LLM evaluation?
  - Can LLMs evaluate other LLMs?
  - What tools can be used for LLM evaluation?

What is an LLM Evaluation Framework?

At its core, an LLM evaluation framework is software that tests and scores a language model’s outputs on defined criteria. In other words, it’s how you verify an AI “is actually doing what you want it to do”.

These frameworks are the AI equivalent of test suites in traditional software. Instead of just eyeballing a few responses, you automate tests so you can track improvements (or regressions) over time.

Key point: Unlike normal code, LLM outputs vary. A model can sound fluent yet be wrong. That’s why we need special tests, not just simple assertions.

Why Evaluate LLMs?

Language models are powerful but unpredictable. When you ask an LLM a question, it might give a confident-sounding answer that’s entirely incorrect or out-of-scope. Without a formal testing setup, you risk deploying a model that hallucinates facts or violates policies.

In fact, real incidents have shown how costly this can be: AI-written news articles and customer-facing chatbots have published blatantly false information, leading to lost trust and even legal trouble.

Here’s the thing: evaluation isn’t optional. It’s how you catch problems before they hurt your users or brand. As one expert noted, skipping proper LLM evaluation is not just a technical oversight—it’s a business risk that can cost you money, trigger regulatory action, and leave a stain on your reputation.

A good framework lets you measure things like:

- Accuracy: Does the answer match the true or expected answer?

- Relevance: Is the output actually addressing the question or task?

- Coherence: Does the response make logical sense in context?

- Safety/Bias: Does it avoid toxic, biased, or inappropriate content?

- Hallucinations: Is the model making up facts?

Popular Tools and Frameworks

You don’t have to build everything from scratch. Several open-source and commercial tools can help:

- OpenAI Evals: An open-source framework by OpenAI to define evaluation tasks, run models, and log results. It supports custom metrics and a registry of standard benchmarks.

- DeepEval (Confident AI): An open-source library offering many built-in metrics and tests for LLM outputs. It includes things like “G-Eval” (an LLM-as-a-judge metric that scores outputs against criteria you define) and guardrails testing.

- RAGAS: A tool focused on Retrieval-Augmented Generation evaluation. It provides metrics like context precision/recall and faithfulness for RAG systems.

- LangSmith (LangChain): A platform for LLM application observability. It offers offline and continuous evaluation and even uses LLMs as automated “judges” in tests.

- Langfuse: An open-source toolkit for LLM engineering (prompt management, evaluations, traces) with dashboards and integrations.

- TruLens: A testing/monitoring library with easy integrations, focusing on groundedness and safety.

- Arize (Phoenix): An AI observability platform that can log and evaluate LLM outputs in real time (model-agnostic).

- MLflow, Weights & Biases, ClearML: While general ML platforms, they can track evaluation metrics over time and compare model versions.

Even if you end up using a tool, knowing the concepts will help you apply them correctly.

Evaluation Approaches

There are several ways to actually score model outputs. The main approaches are:

- Automated Metrics: Pre-defined formulas. Common examples include:

  - BLEU/ROUGE: Overlap-based scores (BLEU for translation, ROUGE for summarization). They work by measuring n‑gram overlap between the output and one or more reference answers.

  - F1/Exact Match: Especially for classification or QA tasks, F1 (the harmonic mean of precision and recall) or exact match percentages measure correctness against a known answer.

  - Perplexity: Measures how well the model predicts text (lower is better). Useful for comparing language models in general, but not always intuitive to interpret.

  - Model-Based Scores: Newer metrics compute semantic similarity from contextual embeddings (e.g., BERTScore), score text using a language model’s own likelihoods (e.g., GPTScore), or check whether several sampled answers agree with one another in order to flag hallucinations (e.g., SelfCheckGPT).

  Automated scores are fast and cheap, but may miss nuance. For example, BLEU can penalize good creative writing simply because it is worded differently from the reference.

- LLM-as-a-Judge: Using a second (usually larger or specialized) model to critique the output. You give the judge model the answer and ask it for a yes/no verdict or a rating on criteria like “Is this answer correct?” or “How helpful is this response?”.

- Human Evaluation: The gold standard for complex tasks. Expert annotators or domain specialists read outputs and rate or rank them. This catches subjective issues (tone, context, subtle factuality) but is slow and expensive.

- Hybrid: A combination of the above. Often you run automated and LLM-based checks first, and only flag uncertain or critical cases for human review. This scales better while still harnessing human judgment where it counts.
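To make the automated-metric idea concrete, here is a minimal sketch of exact match and token-level F1 for short QA answers. The helper names (`normalize`, `exact_match`, `token_f1`) and the normalization rules (lowercasing, stripping punctuation) are assumptions chosen for illustration, not part of any particular library.

```python
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, drop ASCII punctuation, and collapse whitespace."""
    text = text.lower()
    text = text.translate(str.maketrans("", "", string.punctuation))
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> bool:
    """True when the two strings are identical after normalization."""
    return normalize(prediction) == normalize(reference)


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token-level precision and recall."""
    pred_tokens = normalize(prediction).split()
    ref_tokens = normalize(reference).split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often
    # as it appears on both sides.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


print(exact_match("Guido van Rossum.", "guido van rossum"))
print(round(token_f1("Python was created by Guido van Rossum", "Guido van Rossum"), 2))
```

Exact match is strict, so a verbose but correct answer fails it. Token F1 gives partial credit, which is why the two are often reported together.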

No single method is perfect. In practice, you’ll combine multiple approaches (and metrics) to build confidence in your system.
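One way to combine these approaches is sketched below: a cheap string check runs first, an LLM judge handles the rest, and anything the judge answers off-format is escalated to a human. The prompt wording, the `triage` function, and the `judge` callable (any function that takes a prompt and returns the judge model's text) are all hypothetical; swap in your own judge client.

```python
from typing import Callable

# Hypothetical judge prompt; tune the wording for your own task.
JUDGE_TEMPLATE = (
    "You are grading an answer.\n"
    "Question: {question}\n"
    "Reference answer: {expected}\n"
    "Model answer: {answer}\n"
    "Reply with exactly one word: CORRECT or INCORRECT."
)


def parse_verdict(judge_reply: str) -> str:
    """Map the judge's free-text reply to 'pass', 'fail', or 'unsure'."""
    word = judge_reply.strip().upper().rstrip(".")
    if word == "CORRECT":
        return "pass"
    if word == "INCORRECT":
        return "fail"
    return "unsure"  # off-format replies are not trusted


def triage(question: str, expected: str, answer: str,
           judge: Callable[[str], str]) -> str:
    """Return 'pass', 'fail', or 'needs_human_review' for one test case."""
    # Step 1: cheap automated check, no judge call needed.
    if answer.strip().lower() == expected.strip().lower():
        return "pass"
    # Step 2: ask the judge model.
    prompt = JUDGE_TEMPLATE.format(question=question, expected=expected, answer=answer)
    verdict = parse_verdict(judge(prompt))
    # Step 3: escalate anything the judge could not decide cleanly.
    return "needs_human_review" if verdict == "unsure" else verdict


# Usage with stand-in judges (a real judge would call a model API).
print(triage("Capital of France?", "Paris", "It is Paris.", lambda p: "CORRECT"))
print(triage("Capital of France?", "Paris", "Probably Lyon", lambda p: "Hard to say"))
```

Passing the judge in as a function keeps the routing logic testable offline and independent of any one provider.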

Step-by-Step: Build a Simple Evaluation Framework

Now let’s put theory into practice. Below are the essential steps to create your own LLM evaluation framework from scratch. We’ll keep it simple: a basic Python example that any developer can follow.

1. Define Your Use Case & Success Criteria

First, be crystal clear on what the LLM should do. Are you building a chatbot, a summarizer, a code assistant, or something else? The answer determines everything else:

- Task-specific goals: For a QA bot, accuracy on factual questions is key. For summarization, conciseness and coverage matter. For chat, helpfulness and empathy might be metrics.

- Constraints: Maybe the output must contain no profanity (safety), match a brand voice (style), or stay within a certain length.

Define what a “good” output looks like for your scenario. This guides which metrics to use and how to collect test data.
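Writing the criteria down as data makes them checkable. The sketch below is one possible shape, assuming a hypothetical `SuccessCriteria` container with an accuracy target, a length limit, and a list of banned terms; the field names and default values are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class SuccessCriteria:
    """Hypothetical container for what counts as a good output."""
    min_accuracy: float = 0.9        # share of test cases that must pass
    max_answer_words: int = 50       # length constraint
    banned_terms: list[str] = field(default_factory=list)  # safety or brand rules


def violations(answer: str, criteria: SuccessCriteria) -> list[str]:
    """Return the constraints one answer breaks (an empty list means none)."""
    problems = []
    if len(answer.split()) > criteria.max_answer_words:
        problems.append("too_long")
    lowered = answer.lower()
    for term in criteria.banned_terms:
        # Plain substring match; it will also hit words that contain the term.
        if term.lower() in lowered:
            problems.append(f"banned_term:{term}")
    return problems


criteria = SuccessCriteria(max_answer_words=5, banned_terms=["damn"])
print(violations("Paris", criteria))
print(violations("well damn that is a very long answer", criteria))
```

The `min_accuracy` field is not used by `violations`; it is meant for a run-level check once all answers are scored.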

2. Assemble an Evaluation Dataset

Gather a set of test prompts (inputs) along with the expected answers or criteria. This is your evaluation dataset, akin to unit tests for code. Each entry should include:

- Input: The user question or prompt (e.g. “Who invented Python?” or a paragraph to summarize).

- Expected Output / Ground Truth: The correct answer or reference summary (if available). For some tasks (like advice or creative writing), define what the success conditions are.

- Context (optional): For RAG or multi-turn systems, include any supporting documents or conversation history.

- Additional Metadata (optional): E.g. difficulty level, category tags, etc.

A simple format is a JSON or CSV file. For example, a JSON list of QA pairs:

[
  {
    "question": "Who invented Python?",
    "expected_answer": "Guido van Rossum"
  },
  {
    "question": "What is the capital of France?",
    "expected_answer": "Paris"
  }
]

Include both easy cases and edge cases (tricky queries, ambiguous wording, etc.). Aim for a diverse set of 10–50 examples at first. (Later you can expand or synthesize more.) The idea is to cover the core functionality and known pitfalls.
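Before running anything against the dataset, it can help to check that every entry is well-formed. The following is a rough sketch that assumes the `question` / `expected_answer` format shown above and a fixed-answer QA task where both fields are required; `validate_dataset` is a hypothetical helper, and tasks without a single ground truth would need looser rules.

```python
import json

REQUIRED_FIELDS = ("question", "expected_answer")


def validate_dataset(entries: list[dict]) -> list[str]:
    """Return readable problems; an empty list means the dataset looks usable."""
    problems = []
    seen_questions = set()
    for i, entry in enumerate(entries):
        for field_name in REQUIRED_FIELDS:
            value = entry.get(field_name)
            if not isinstance(value, str) or not value.strip():
                problems.append(f"entry {i}: missing or empty '{field_name}'")
        question = entry.get("question")
        if isinstance(question, str):
            key = question.strip().lower()
            if key in seen_questions:
                problems.append(f"entry {i}: duplicate question")
            seen_questions.add(key)
    return problems


sample = json.loads("""
[
  {"question": "Who invented Python?", "expected_answer": "Guido van Rossum"},
  {"question": "What is the capital of France?", "expected_answer": ""}
]
""")
print(validate_dataset(sample))
```

Catching an empty expected answer here is cheaper than discovering later that a low score came from a broken test case rather than a bad model response.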

3. Generate Model Responses

Now run your LLM on each test input and collect its output. In Python, this could be as simple as:

import json

from openai import OpenAI

# Requires the openai Python SDK (v1 or later) and the OPENAI_API_KEY environment variable
client = OpenAI()

# Load your evaluation dataset
with open("evaluation_dataset.json") as f:
    dataset = json.load(f)

results = []
for item in dataset:
    prompt = item["question"]
    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": prompt}]
    )
    # content can be None, so fall back to an empty string before stripping
    answer = (response.choices[0].message.content or "").strip()
    results.append({
        "question": prompt,
        "expected": item.get("expected_answer", ""),
        "model_answer": answer
    })

# Save model outputs for later analysis
with open("model_outputs.json", "w") as f:
    json.dump(results, f, indent=2)

This script loops through your dataset, sends each prompt to the model (replace “gpt-4o-mini” with your actual model or API), and saves both the expected answer and what the model responded. You now have a record of “what the model said vs what it should have said.”
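A variation worth considering is to pass the model call in as a function, so the same loop can run against a stub during development and one failed request does not abort the whole run. This is a sketch under those assumptions; `generate_responses`, `model_fn`, and `fake_model` are hypothetical names, and the stub stands in for a real API call.

```python
from typing import Callable


def generate_responses(dataset: list[dict],
                       model_fn: Callable[[str], str]) -> list[dict]:
    """Run model_fn on every question and record the answer or the error."""
    results = []
    for item in dataset:
        record = {
            "question": item["question"],
            "expected": item.get("expected_answer", ""),
            "model_answer": "",
            "error": None,
        }
        try:
            record["model_answer"] = model_fn(item["question"]).strip()
        except Exception as exc:
            # Keep going; the failure is recorded for later review.
            record["error"] = f"{type(exc).__name__}: {exc}"
        results.append(record)
    return results


def fake_model(prompt: str) -> str:
    """Stand-in for a real API call, for offline testing only."""
    if "France" in prompt:
        return " Paris "
    raise TimeoutError("simulated timeout")


demo = generate_responses(
    [
        {"question": "What is the capital of France?", "expected_answer": "Paris"},
        {"question": "Who invented Python?"},
    ],
    fake_model,
)
for row in demo:
    print(row)
```

Recording errors separately from empty answers matters later: a timeout is an infrastructure problem, not a wrong answer, and the two should not be scored the same way.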

4. Score the Outputs

With inputs and outputs ready, define a scoring method. For a simple framework, start with basic metrics:

- Exact Match / Accuracy: Check if the model’s answer exactly equals the expected answer. (Good for fixed-answer tasks.)

- String Similarity: For open-ended tasks, compute a similarity score (e.g., Levenshtein distance or SequenceMatcher). For example, with Python’s difflib:

from difflib import SequenceMatcher

def similarity(a, b):
    # Character-level similarity ratio between 0.0 and 1.0
    return SequenceMatcher(None, a, b).ratio()

for entry in results:
    score = similarity(entry["expected"], entry["model_answer"])
    entry["similarity_score"] = score

A score of 1.0 means a perfect match; lower is worse. You can set thresholds (e.g. ≥0.8 is a “pass”).

- Keyword Check: See if certain keywords or entities are present in the answer. Useful when exact wording isn’t critical but key info must be included.

- Custom Logic: You could write simple rules (e.g. “count ‘yes’ vs ‘no’”).

- Automated Metrics: If appropriate, you can plug in standard metrics. For example, use BLEU/ROUGE libraries for text tasks, or call an evaluation API.
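Several of these checks can sit side by side on each result. Below is a minimal sketch of a keyword check combined with exact match and string similarity; `keyword_score`, `score_entry`, and the `required_keywords` argument are assumed names for illustration, and the keyword list would come from your own dataset.

```python
from difflib import SequenceMatcher


def keyword_score(answer: str, required_keywords: list[str]) -> float:
    """Fraction of required keywords found in the answer (case-insensitive)."""
    if not required_keywords:
        return 1.0
    lowered = answer.lower()
    hits = sum(1 for kw in required_keywords if kw.lower() in lowered)
    return hits / len(required_keywords)


def score_entry(expected: str, answer: str, required_keywords=()) -> dict:
    """Bundle several cheap scores for one expected/actual pair."""
    exp, ans = expected.strip().lower(), answer.strip().lower()
    return {
        "exact_match": exp == ans,
        "similarity": SequenceMatcher(None, exp, ans).ratio(),
        "keyword_score": keyword_score(answer, list(required_keywords)),
    }


print(score_entry(
    "Guido van Rossum",
    "Python was created by Guido van Rossum in 1991.",
    required_keywords=["Guido", "Rossum"],
))
```

In this example the exact match fails and the similarity is well below 1.0, yet the keyword score is perfect, which shows why a long but correct answer needs more than one metric. Note that the keyword test is a plain substring match, so short keywords can match inside longer words.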

After scoring, compute aggregate metrics. For instance:

PASS_THRESHOLD = 0.8  # matches the "≥0.8 is a pass" rule of thumb

correct = sum(1 for e in results if e["similarity_score"] >= PASS_THRESHOLD)
total = len(results)
accuracy = correct / total * 100

print(f"Accuracy: {accuracy:.1f}%")
print(f"Average similarity: {sum(e['similarity_score'] for e in results)/total:.2f}")

This gives you numbers like “Accuracy: 80.0%” and “Average similarity: 0.85.” Now you have measurable results, not just impressions.

5. Review and Iterate

Inspect where the model failed or scored low. You might see patterns: maybe it got all questions right except the ones about geography, or it always misses a certain format. Use these insights to improve prompts, fine-tune a model, or add more training data.

You can also add human review here. For any outputs that are unclear, or whose quality the automated scores don’t capture well, ask a colleague or crowdworker to rate them.
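Spotting patterns is easier when failures are grouped. The sketch below assumes each result carries a `similarity_score` and, optionally, a `category` tag taken from the dataset metadata; both the field name `category` and the helper `failures_by_category` are assumptions for illustration.

```python
from collections import defaultdict


def failures_by_category(results: list[dict],
                         threshold: float = 0.8) -> dict[str, list[dict]]:
    """Group entries scoring below the threshold by their category tag."""
    grouped = defaultdict(list)
    for entry in results:
        if entry["similarity_score"] < threshold:
            grouped[entry.get("category", "uncategorized")].append(entry)
    # Categories with the most failures come first.
    return dict(sorted(grouped.items(), key=lambda kv: len(kv[1]), reverse=True))


scored = [
    {"question": "Capital of France?", "category": "geography", "similarity_score": 0.30},
    {"question": "Longest river?", "category": "geography", "similarity_score": 0.55},
    {"question": "Who invented Python?", "category": "tech", "similarity_score": 1.00},
    {"question": "Year of the moon landing?", "category": "history", "similarity_score": 0.10},
]
for category, failed in failures_by_category(scored).items():
    print(category, len(failed), [f["question"] for f in failed])
```

The grouped entries double as a review queue: the lists can be handed to a human rater as they are.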

Example: A Basic Evaluation Script

Here’s a simplified example putting it all together:

import json
from difflib import SequenceMatcher

from openai import OpenAI

client = OpenAI()  # reads the OPENAI_API_KEY environment variable

# Load evaluation dataset (same format as in step 2)
with open("evaluation_dataset.json") as f:
    eval_data = json.load(f)

results = []
for item in eval_data:
    # Query the model
    resp = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": item["question"]}],
        max_tokens=100
    )
    answer = (resp.choices[0].message.content or "").strip()

    # Score the answer with a character-level similarity ratio
    sim = SequenceMatcher(None, item["expected_answer"], answer).ratio()
    correct = sim > 0.8
    results.append({
        "prompt": item["question"],
        "expected": item["expected_answer"],
        "answer": answer,
        "score": sim,
        "correct": correct
    })

# Summary
total = len(results)
correct = sum(1 for r in results if r["correct"])
print(f"Passed {correct}/{total} ({correct/total*100:.1f}%) of prompts.")

This script does a simple “did it get most characters right?” check. You could replace the similarity and threshold logic with anything that fits your task.

Remember to install and configure any APIs or libraries (like OpenAI’s Python SDK) before running the script.

Key Metrics to Track

Your framework should record the metrics most relevant to your use case. Here are some common ones:

- Correctness/Accuracy: For tasks with clear answers. Measures how often the model’s output matches the true answer.

- Semantic Similarity: For open-ended answers, use embedding-based or string metrics to capture meaning.

- Relevance: Did the response address the question/task? (For example, answer relevance in summarization or QA).

- Hallucination Rate: How often does the model invent facts? (You might detect this via a faithfulness check).

- Coverage/Recall: In summarization, did the summary cover the main points?

- Task Completion: If the model is an agent, did it complete the multi-step task? (See Confident AI’s agent metrics).

- Latency & Throughput: How fast and cost-effective are responses? (Important for production).

- Quality dimensions: For dialogue or assistance, measure tone, coherence, or user satisfaction (often via human ratings).
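Latency and throughput are the easiest of these to measure directly. Here is a small sketch that times sequential calls and reports the median, a nearest-rank 95th percentile, and requests per second; `measure_latency` and `model_fn` are hypothetical names, and a real run would pass a function that calls your model.

```python
import math
import statistics
import time
from typing import Callable


def measure_latency(model_fn: Callable[[str], str],
                    prompts: list[str]) -> dict[str, float]:
    """Time each call to model_fn and summarize the durations in seconds."""
    if not prompts:
        raise ValueError("need at least one prompt to measure latency")
    durations = []
    for prompt in prompts:
        start = time.perf_counter()
        model_fn(prompt)
        durations.append(time.perf_counter() - start)
    durations.sort()
    total = sum(durations)
    # Nearest-rank percentile: smallest value with at least 95% of samples at or below it.
    p95_index = max(0, math.ceil(0.95 * len(durations)) - 1)
    return {
        "p50_s": statistics.median(durations),
        "p95_s": durations[p95_index],
        "throughput_rps": len(durations) / total if total > 0 else float("inf"),
    }


# Usage with a trivial stand-in for the model call.
stats = measure_latency(lambda p: p.upper(), ["a", "b", "c"])
print(sorted(stats))
```

Throughput here is for sequential requests only. Concurrent or batched serving would need a different measurement.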

For Retrieval-Augmented systems (RAG), add RAG-specific metrics like:

- Contextual Precision/Recall: Did the retriever pull relevant docs (RAGAS metrics)?

- Contextual Relevancy: Are the retrieved chunks truly useful for answering the question?
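As a rough illustration of retrieval precision and recall, the sketch below uses the classic set-based definitions over document IDs. It assumes you have hand-labeled which documents are relevant for each question; it is not the RAGAS implementation, and `context_precision_recall` is a hypothetical helper.

```python
def context_precision_recall(retrieved_ids: list[str],
                             relevant_ids: set[str]) -> tuple[float, float]:
    """Set-based precision and recall of a retriever for one question."""
    retrieved = set(retrieved_ids)
    hits = len(retrieved & relevant_ids)
    # Precision: share of retrieved documents that are relevant.
    precision = hits / len(retrieved) if retrieved else 0.0
    # Recall: share of relevant documents that were retrieved.
    recall = hits / len(relevant_ids) if relevant_ids else 0.0
    return precision, recall


precision, recall = context_precision_recall(
    ["d1", "d2", "d3", "d4"],   # what the retriever returned
    {"d1", "d3", "d9"},         # what a human marked as relevant
)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Low precision means the generator is being fed noise; low recall means the answer may simply not be in the context, which often shows up downstream as hallucination.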

And for “Responsible AI” considerations:

- Bias & Toxicity: Does the output contain hate speech, slurs, or biased language? (You might run a toxicity model or human check).

It’s also common to set thresholds for critical metrics (e.g. accuracy must stay above 90%). If a test run drops below a threshold, treat that as a red flag and investigate before shipping.
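A threshold check like this can be a few lines of code. The sketch below compares a dictionary of computed metrics against minimum values and lists whatever falls short; `check_thresholds` and the metric names are assumptions for illustration.

```python
def check_thresholds(metrics: dict[str, float],
                     minimums: dict[str, float]) -> list[str]:
    """Return one message per metric that is missing or below its minimum."""
    failures = []
    for name, minimum in minimums.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: metric missing")
        elif value < minimum:
            failures.append(f"{name}: {value:.2f} is below the minimum {minimum:.2f}")
    return failures


run_metrics = {"accuracy": 0.85, "avg_similarity": 0.90}
required = {"accuracy": 0.90, "avg_similarity": 0.80}

problems = check_thresholds(run_metrics, required)
for line in problems:
    print("FAIL", line)
```

In a CI job, a non-empty `problems` list could be turned into a non-zero exit code (for example with `sys.exit(1)`) so that a regression blocks the release.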

Evaluation is a Growing Field: There are now dozens of tools and companies focused solely on LLM evals. Monitoring and testing AI has become as important as building it. Experts compare it to “observability” in software – you need it to debug and maintain AI systems safely.


Conclusion

Building an LLM evaluation framework may seem daunting, but breaking it down makes it manageable. The key is to treat your model like critical software: create test cases, define clear success metrics, and automate the checks. A simple loop of run model → score output → analyze results can catch many issues early.

We saw that a framework typically includes an evaluation dataset, a set of metrics, and an automated pipeline to generate reports. We provided an example Python script to illustrate the basic idea.

FAQs

1. What is an LLM evaluation framework?

An LLM evaluation framework is a structured system used to test and measure the performance of large language models. It uses datasets, metrics, and automated scripts to analyze the quality, accuracy, and relevance of model outputs.

2. Why is evaluating an LLM important?

Evaluating an LLM helps detect errors, hallucinations, and biased outputs before deployment. It ensures the model performs reliably and meets the quality standards required for real-world applications.

3. What metrics are commonly used in LLM evaluation?

Common metrics include accuracy, semantic similarity, relevance, hallucination rate, BLEU, ROUGE, and F1 score. These metrics measure how well the model’s output matches the expected response.

4. Can LLMs evaluate other LLMs?

Yes, this approach is called LLM-as-a-judge, where one model evaluates the responses generated by another. It helps automate large-scale evaluation by scoring responses based on criteria like relevance, correctness, and completeness.

5. What tools can be used for LLM evaluation?

Popular tools include OpenAI Evals, DeepEval, RAGAS, LangSmith, and MLflow. These tools help automate testing, track evaluation metrics, and monitor LLM performance over time.
