How to Fine-Tune Large Language Models (LLMs)?

Apr 06, 2026

If you’ve spent any time working with AI tools, you’ve probably noticed that general-purpose models like GPT-4 or LLaMA are incredibly powerful, but they can also feel frustratingly vague when you throw something hyper-specific at them.

That’s not a flaw in the model; it’s just the nature of how they’re built. They’re trained to be good at everything, which sometimes means they’re not great at your thing.

That’s exactly where fine-tuning comes in. If you’re looking to build AI systems that deliver consistent, specialized, high-quality results, understanding how to fine-tune Large Language Models isn’t optional anymore; it’s foundational. Let’s break it all down, step by step.

Quick Answer:

Fine-tuning a Large Language Model (LLM) means taking a pre-trained model and retraining it on your own domain-specific data, so it performs accurately for your exact use case, without the cost or complexity of building a model from scratch.

Table of contents

- What is Fine-Tuning and Why Does It Matter?

- Fine-Tune Large Language Models vs. Prompt Engineering vs. RAG: Which One Do You Actually Need?

- The Core Fine-Tuning Methods You Should Know

- Full Fine-Tuning

- Instruction Fine-Tuning

- Parameter-Efficient Fine-Tuning (PEFT)

- RLHF and DPO: Aligning Models With Human Preferences

- The Step-by-Step Process for Fine-Tuning Large Language Models

- Step 1: Define Your Task Clearly

- Step 2: Prepare Your Training Data

- Step 3: Choose the Right Base Model

- Step 4: Select Your Fine-Tuning Technique

- Step 5: Configure Hyperparameters

- Step 6: Train and Monitor

- Step 7: Evaluate and Deploy

- Common Challenges and How to Handle Them

- Catastrophic Forgetting

- Overfitting

- Bias Amplification

- Generalization Challenges

- Tools and Frameworks to Know

- Wrapping Up

- FAQs

- What is the difference between fine-tuning and prompt engineering?

- How much data do I need to fine-tune an LLM?

- Is fine-tuning expensive? What hardware do I need?

- What is LoRA and why is everyone using it?

- Can fine-tuning cause a model to "forget" what it already knows?

What is Fine-Tuning and Why Does It Matter?

At its core, fine-tuning is a process used to adapt pre-trained models like LLaMA, Mistral, or Phi to specialized tasks without the enormous resource demands of training from scratch. This approach allows you to extend the model’s knowledge base or change its style using your own data.

Think of it this way: imagine hiring an experienced surgeon and then training them specifically in robotic surgery at your hospital. They already know medicine; you’re just sharpening their skills for a particular context. That’s essentially what fine-tuning does.

So, what’s the real-world motivation? Here’s why organizations are investing in fine-tuning today:

- Domain specificity: While LLMs are trained on vast amounts of data, they might not be acquainted with the specific terminologies, nuances, or contexts relevant to a particular business or industry.

- Brand consistency: Businesses can adapt a model to match a specific tone, writing style, or operational directive, something a generic model simply can’t do out of the box.

- Cost efficiency: Training a model from scratch requires vast computing resources. Fine-tuning lets you achieve domain-level performance at a fraction of that cost.

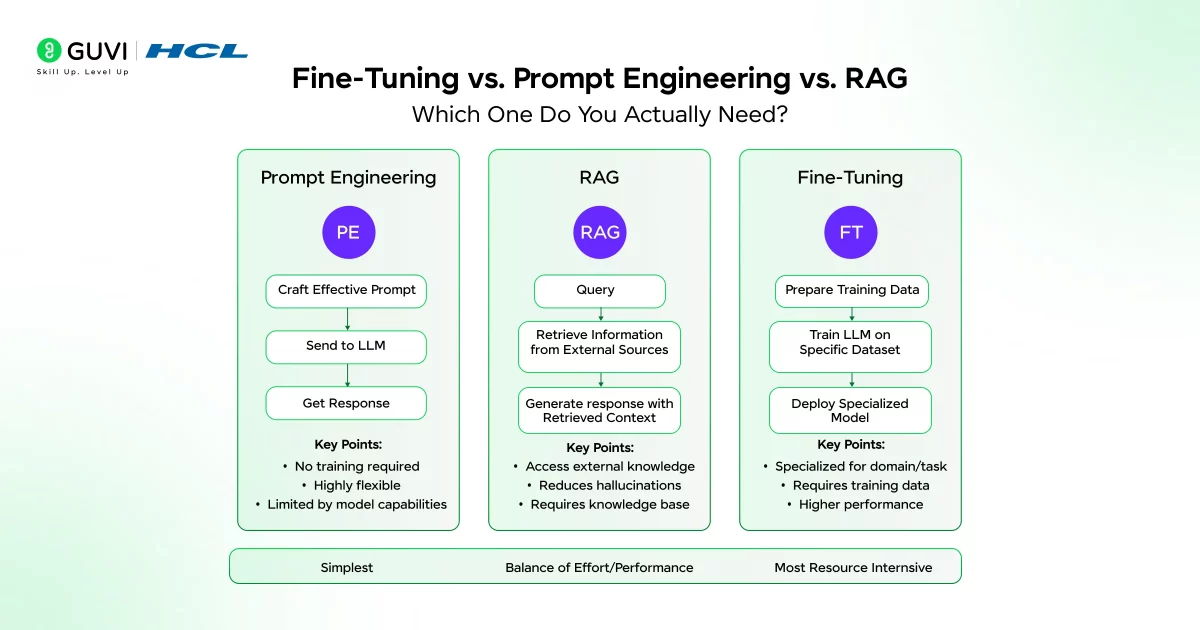

Fine-Tune Large Language Models vs. Prompt Engineering vs. RAG: Which One Do You Actually Need?

Remember, fine-tuning a large language model should be your last resort, not your first choice. The recommended progression starts with prompt engineering, escalates to RAG when external knowledge is needed, and only proceeds to fine-tuning when deep specialization is required.

Here’s a simple way to think about it:

- Prompt engineering works well for basic task adaptation — tweaking how the model responds through better instructions.

- Retrieval-Augmented Generation (RAG) is ideal when you want the model to reference a dynamic or frequently updated knowledge base.

- Fine-tuning becomes necessary when you need permanent behavior changes, consistent style, or domain-level expertise baked directly into the model.

Fine-tuning is ideal when you need your LLM to generate highly specialized content, match your brand’s tone, or excel in niche applications. It is especially useful for industries such as healthcare, finance, or legal services where general-purpose LLMs may not have the depth of domain-specific knowledge required.

The Core Fine-Tuning Methods You Should Know

Not all fine-tuning approaches are created equal. Depending on your goals, data, and infrastructure, you’ll likely land on one of the following approaches.

1. Full Fine-Tuning

Full fine-tuning retrains all of the base model’s parameters. It offers the most control over model outputs, which can suit developers tackling obscure, domain-specific tasks, but it is very resource-intensive.

2. Instruction Fine-Tuning

Instruction fine-tuning trains the model on examples that pair an instruction or query with the response you want it to give. The dataset has to match the behavior you are after: if you want the model to summarize contracts, the examples should be contract-summarization instructions paired with good summaries.
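As a rough illustration, each instruction example is usually rendered into a single training string with a fixed template. The Alpaca-style layout below and the `format_example` helper are assumptions made for this sketch, not part of any specific library.

```python
# Hypothetical template for illustration; real projects should use the
# layout (or chat template) expected by their chosen base model.
TEMPLATE = "### Instruction:\n{instruction}\n\n### Response:\n{response}"


def format_example(instruction: str, response: str) -> str:
    """Render one instruction/response pair into a single training string."""
    return TEMPLATE.format(
        instruction=instruction.strip(),
        response=response.strip(),
    )


example = format_example(
    "Summarize the clause in one sentence.",
    "The tenant must give 60 days' written notice before moving out.",
)
print(example)
```

Keeping the template in one place makes it easier to apply exactly the same layout at inference time.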

3. Parameter-Efficient Fine-Tuning (PEFT)

This is where things get exciting for most practitioners. PEFT methods let you fine-tune large models without updating all their parameters, only a small, targeted subset. The two most popular approaches here are LoRA and QLoRA.

LoRA (Low-Rank Adaptation) is by far the dominant PEFT technique today. LoRA builds on a simple insight: neural network weight updates often lie in a low-dimensional subspace. LoRA trains and injects small, low-rank matrices into the model layers while keeping the core weights frozen.
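To get a feel for why this is cheap, here is a minimal sketch that counts trainable parameters for a single weight matrix under full fine-tuning and under LoRA. The 4096 x 4096 shape and rank 8 are illustrative assumptions, and `lora_param_counts` is a helper written for this example.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full, lora) trainable-parameter counts for one weight matrix.

    Full fine-tuning updates every entry of the d_out x d_in matrix W.
    LoRA freezes W and trains B (d_out x rank) and A (rank x d_in), so the
    effective weight is W + B @ A (usually scaled by alpha / rank).
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


# Illustrative shape: one 4096 x 4096 projection with rank 8 (assumed values).
full, lora = lora_param_counts(4096, 4096, 8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

For these assumed dimensions the adapter trains well under 1% of the parameters in that matrix.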

QLoRA (Quantized LoRA) takes this a step further. It quantizes the frozen base model to 4-bit precision and trains LoRA adapters on top, which significantly reduces memory usage while largely maintaining the performance of full 16-bit fine-tuning.
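Much of QLoRA’s saving comes from storing the frozen base weights in 4 bits instead of 16. The back-of-the-envelope sketch below estimates weight memory only; it deliberately ignores activations, optimizer state, adapter weights, and quantization overhead. The 7B model size is an assumed example.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory for the model weights alone, in gigabytes (1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


n_params = 7e9  # a 7B-parameter model, chosen for illustration
fp16_gb = weight_memory_gb(n_params, 16)
int4_gb = weight_memory_gb(n_params, 4)
print(f"16-bit weights: {fp16_gb:.1f} GB, 4-bit weights: {int4_gb:.1f} GB")
```

Real memory use during training will be higher than either figure, but the fourfold gap in weight storage is what brings larger models within reach of a single GPU.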

4. RLHF and DPO: Aligning Models With Human Preferences

Once you’ve fine-tuned a model for a task, you’ll often want to go one step further and ensure it behaves in ways humans actually prefer. That’s where alignment techniques come in.

Reinforcement Learning from Human Feedback (RLHF) is the methodology behind many production-grade models like ChatGPT. After instruction tuning, human reviewers rank multiple model outputs for quality, helpfulness, and safety.

This human feedback is used to train a Reward Model. The LLM is then optimized using reinforcement learning to generate responses that maximize the predicted score from this Reward Model.

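Direct Preference Optimization (DPO) is a simpler alternative that skips the separate reward model and the reinforcement learning loop. It requires only preference data (prompt, chosen response, rejected response), a reference policy, and standard supervised learning infrastructure.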
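The DPO objective for a single preference triple can be sketched in a few lines. This is a simplified scalar version, assuming you already have the summed log-probability of each response under the trainable policy and the frozen reference model. The `beta` value of 0.1 is an illustrative setting, not a recommendation.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,
) -> float:
    """DPO loss for one (prompt, chosen, rejected) triple.

    Each argument is the summed log-probability of a full response under
    the trainable policy or the frozen reference model.
    """
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(x)) is the same as log(1 + exp(-x))
    return math.log1p(math.exp(-logits))


# Policy identical to the reference: loss equals log(2).
print(dpo_loss(-12.0, -15.0, -12.0, -15.0))
# Policy has shifted probability toward the chosen response: loss is lower.
print(dpo_loss(-10.0, -18.0, -12.0, -15.0))
```

Because the loss is an ordinary differentiable function of log-probabilities, it can be minimized with the same tooling used for supervised fine-tuning.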

OpenAI’s InstructGPT demonstrated that a 1.3B aligned model could outperform a 175B base model on human evaluations, underscoring that alignment isn’t just a safety feature; it’s a performance multiplier. In other words, a well-aligned smaller model can be more useful than a much larger, unaligned one. Size isn’t everything.

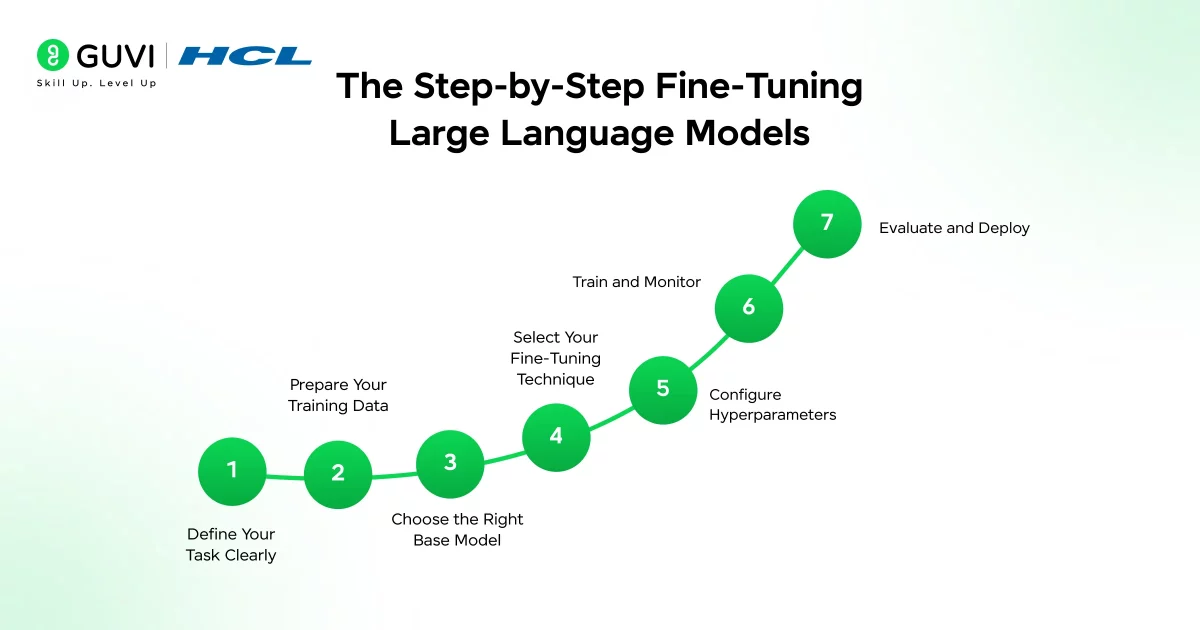

The Step-by-Step Process for Fine-Tuning Large Language Models

Fine-tuning an LLM can be organized as a seven-stage pipeline that covers the complete lifecycle from data preparation to model deployment. Along the way you will need to decide how to collect data, handle imbalanced datasets, initialize the model, and optimize training.

Here’s how to think about that pipeline practically:

Step 1: Define Your Task Clearly

Before you touch a dataset or write a single line of training code, you need to be laser-focused on what you want the model to do. Vague objectives lead to vague models. Ask yourself: What’s the exact input? What does a perfect output look like? How will you measure success?

Step 2: Prepare Your Training Data

Data quality is the single biggest predictor of fine-tuning success. Even the most sophisticated LLM fine-tuning techniques will fail if the underlying data is poor. Data is the fuel, and in fine-tuning, you need high-octane fuel.

Key data preparation steps include:

- Collect relevant data that matches your use case and domain.

- Clean the dataset — remove duplicates, errors, and inconsistencies.

- Format correctly — structure your data as clear input-output (prompt-completion) pairs.

- Watch for bias — biased training data leads to biased model outputs, which can create serious ethical and operational problems.

Quality beats quantity: 1,000 excellent examples can beat 10,000 mediocre ones. A small, high-quality dataset will often outperform a bloated, noisy one.
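The cleaning and formatting steps above can be sketched with the standard library alone. The `prompt` and `completion` field names and both helper functions are assumptions for this example; adapt them to whatever schema your training tool expects.

```python
import json


def clean_examples(raw: list[dict]) -> list[dict]:
    """Strip whitespace, drop incomplete rows, and remove duplicates.

    Duplicates are detected ignoring case and surrounding whitespace.
    """
    seen = set()
    cleaned = []
    for row in raw:
        prompt = (row.get("prompt") or "").strip()
        completion = (row.get("completion") or "").strip()
        if not prompt or not completion:
            continue  # incomplete pair
        key = (prompt.lower(), completion.lower())
        if key in seen:
            continue  # duplicate of an earlier row
        seen.add(key)
        cleaned.append({"prompt": prompt, "completion": completion})
    return cleaned


def to_jsonl(rows: list[dict]) -> str:
    """Serialize rows as JSON Lines, one example per line."""
    return "\n".join(json.dumps(row, ensure_ascii=False) for row in rows)


raw = [
    {"prompt": "What is LoRA? ", "completion": "A parameter-efficient fine-tuning method."},
    {"prompt": "what is lora?", "completion": "A parameter-efficient fine-tuning method."},
    {"prompt": "Define QLoRA", "completion": ""},
]
cleaned = clean_examples(raw)
print(to_jsonl(cleaned))
```

Exact-match deduplication is only a first pass; near-duplicates and factual errors still need manual or model-assisted review.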

Step 3: Choose the Right Base Model

This is the crucial decision point where you select the specific pre-trained LLM that will serve as the base for your fine-tuning. Key considerations include model size (larger models are more capable but require more resources) and whether to use a base vs. instruction-tuned model. For further specialization, starting with an instruction-tuned model is often beneficial.

Popular choices include LLaMA 2/3, Mistral 7B, Phi-3, and Falcon, all of which have openly available weights (check each model’s license before commercial use) and are widely supported by tools like Hugging Face.

Step 4: Select Your Fine-Tuning Technique

For most teams, LoRA or QLoRA is the right call. LoRA and QLoRA work for most cases and balance performance with resource needs. Start small and iterate to test your approach before spending big on data and training.

If you have virtually unlimited compute and need the deepest possible specialization, full fine-tuning becomes worth considering. But for the majority of real-world applications, PEFT methods deliver excellent results at a fraction of the cost.

Step 5: Configure Hyperparameters

This is where many practitioners underestimate the nuance involved. Key hyperparameters to tune include:

- Learning rate: Keep it low to prevent the model from forgetting its general knowledge.

- Batch size: Balance between memory constraints and training stability.

- Number of epochs: More isn’t always better — overfitting is a real risk with small datasets.

- LoRA rank (r): A higher rank gives more expressive power but increases memory usage.
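One way to keep these settings explicit is a small config object. Every default below is an illustrative starting point rather than a recommendation. `grad_accum_steps` (gradient accumulation) is an extra knob assumed here because it is commonly used to trade memory for a larger effective batch size.

```python
import math
from dataclasses import dataclass


@dataclass
class FineTuneConfig:
    # All defaults are illustrative starting points, not recommendations.
    learning_rate: float = 2e-4
    batch_size: int = 8
    grad_accum_steps: int = 4
    num_epochs: int = 3
    lora_rank: int = 8

    @property
    def effective_batch_size(self) -> int:
        """Examples consumed per optimizer update."""
        return self.batch_size * self.grad_accum_steps

    def total_optimizer_steps(self, n_examples: int) -> int:
        """Optimizer updates over the whole run, keeping partial batches."""
        steps_per_epoch = math.ceil(n_examples / self.effective_batch_size)
        return steps_per_epoch * self.num_epochs


config = FineTuneConfig()
print(config.effective_batch_size, config.total_optimizer_steps(1000))
```

Knowing the total step count up front helps when choosing warmup length and evaluation intervals. Actual trainers may count steps slightly differently, for example by dropping the last partial batch.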

Step 6: Train and Monitor

Run your training loop while monitoring both training loss and validation loss closely. Good training metrics but poor real-world performance is a common pitfall. To improve generalization, add more diverse data to cover different scenarios and test regularly on completely new data to see if the model really learned or just memorized.

Use experiment tracking tools like Weights & Biases or MLflow to log your runs; you’ll thank yourself later when you’re comparing multiple experiments.
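Monitoring validation loss and testing on completely new data both require held-out splits that the model never trains on. A minimal sketch, assuming an 80/10/10 split and a fixed seed for reproducibility (the `split_dataset` helper is written for this example):

```python
import random


def split_dataset(rows: list, val_frac: float = 0.1, test_frac: float = 0.1, seed: int = 0):
    """Shuffle once with a fixed seed, then cut into train/validation/test."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    n_val = int(len(shuffled) * val_frac)
    test_rows = shuffled[:n_test]
    val_rows = shuffled[n_test:n_test + n_val]
    train_rows = shuffled[n_test + n_val:]
    return train_rows, val_rows, test_rows


train_rows, val_rows, test_rows = split_dataset(list(range(100)))
print(len(train_rows), len(val_rows), len(test_rows))
```

Use the validation split during training and touch the test split only for the final evaluation.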

Step 7: Evaluate and Deploy

Once fine-tuning is complete, the model’s performance is assessed on the test set. This provides an unbiased evaluation of how well the model is expected to perform on unseen data. Consider also iteratively refining the model if it still has potential for improvement.

Never skip the evaluation step. Always benchmark your fine-tuned model against the base model to quantify the actual improvement. If you can’t prove the fine-tuning helped, you shouldn’t deploy it.
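A base-versus-tuned comparison can be as simple as running both models over the same held-out set with the same metric. In this sketch the two "models" are stand-in functions and exact match is the assumed metric; in practice you would call your real models and pick a metric suited to the task.

```python
from typing import Callable


def exact_match_accuracy(model: Callable[[str], str], test_set: list[tuple[str, str]]) -> float:
    """Fraction of prompts whose output matches the reference exactly (after strip)."""
    hits = sum(model(prompt).strip() == expected.strip() for prompt, expected in test_set)
    return hits / len(test_set)


# Stand-ins for real models: each is just a function from prompt to text.
def base_model(prompt: str) -> str:
    return "unknown"


def tuned_model(prompt: str) -> str:
    return {"2+2": "4", "capital of France": "Paris"}.get(prompt, "unknown")


test_set = [("2+2", "4"), ("capital of France", "Paris"), ("3*3", "9")]
base_acc = exact_match_accuracy(base_model, test_set)
tuned_acc = exact_match_accuracy(tuned_model, test_set)
print(f"base: {base_acc:.2f}  tuned: {tuned_acc:.2f}  gain: {tuned_acc - base_acc:+.2f}")
```

Reporting the gain alongside both absolute scores makes it clear whether fine-tuning actually moved the needle.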

Common Challenges and How to Handle Them

Fine-tuning is powerful, but it’s not without its pitfalls. Here are the most common ones you’ll encounter, and what to do about them.

1. Catastrophic Forgetting

This is arguably the most well-known fine-tuning failure mode. When you train a model intensely on a narrow task, it can “forget” its broader general knowledge. To stop catastrophic forgetting, you need to train gently. Use lower learning rates so the model changes slowly. Use LoRA instead of full fine-tuning to keep most of the original model intact. Mix in some general instruction data to keep broad skills.
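Mixing in general instruction data can be sketched as follows. The 20% general share is an assumed value to tune for your own task, and `mix_datasets` is a helper written for this example.

```python
import random


def mix_datasets(domain: list, general: list, general_frac: float = 0.2, seed: int = 0) -> list:
    """Return a shuffled mix where roughly general_frac of rows are general data."""
    if not 0 <= general_frac < 1:
        raise ValueError("general_frac must be in [0, 1)")
    rng = random.Random(seed)
    # Solve n_general / (len(domain) + n_general) = general_frac for n_general.
    n_general = round(len(domain) * general_frac / (1 - general_frac))
    n_general = min(n_general, len(general))
    mixed = domain + rng.sample(general, n_general)
    rng.shuffle(mixed)
    return mixed


domain = [f"domain-{i}" for i in range(80)]
general = [f"general-{i}" for i in range(500)]
mixed = mix_datasets(domain, general)
print(len(mixed), sum(x.startswith("general") for x in mixed))
```

All domain examples are kept; only the general pool is subsampled, so the specialized signal is never diluted by dropping domain rows.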

2. Overfitting

Fine-tuning can be prone to overfitting, a condition where the model becomes overly specialized on the training data and performs poorly on unseen data. This risk is particularly pronounced when the task-specific dataset is small or not representative of the broader context.

The solution? More diverse data, stronger regularization, and early stopping when your validation loss starts climbing while training loss continues to drop.
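Early stopping is easy to express as a rule over the validation-loss history. A minimal sketch, assuming a patience of two epochs (the `early_stopping_epoch` helper and the loss values are made up for illustration):

```python
def early_stopping_epoch(val_losses: list[float], patience: int = 2, min_delta: float = 0.0):
    """Return the index of the epoch at which training would stop, or None.

    Training stops once validation loss has failed to improve on its best
    value by more than min_delta for `patience` consecutive epochs.
    """
    best = float("inf")
    bad_epochs = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best = loss
            bad_epochs = 0
        else:
            bad_epochs += 1
            if bad_epochs >= patience:
                return epoch
    return None


# Validation loss bottoms out at epoch 2, then climbs.
val_losses = [1.20, 0.95, 0.90, 0.93, 0.97, 1.05]
print(early_stopping_epoch(val_losses))
```

In a real run you would also save a checkpoint whenever the best loss improves, so you can roll back to it after stopping.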

3. Bias Amplification

Pre-trained models inherit biases from their training data, which fine-tuning can inadvertently amplify when applied to task-specific data. This amplification may lead to biased predictions and outputs, potentially causing ethical concerns.

Always audit your training data for demographic, linguistic, and contextual biases before starting. It’s much harder to fix these problems after the fact.

4. Generalization Challenges

Even a well-trained model can struggle when it encounters inputs that look slightly different from its training distribution. Ensuring that a fine-tuned model generalizes effectively across various inputs and scenarios is challenging. Cross-validation and thorough A/B testing in production environments are your best defenses here.

Tools and Frameworks to Know

You don’t have to build your fine-tuning pipeline from scratch. The ecosystem has matured considerably, and there are excellent tools available:

- Hugging Face Transformers + PEFT + TRL — The most widely used combination for instruction tuning and LoRA-based fine-tuning. Broad community support and extensive documentation.

- Unsloth — Optimized for speed; free Colab notebooks available, making it accessible for experimentation.

- LLaMA-Factory — LLaMA-Factory has a web interface that’s both easy and flexible. It supports multiple models and has team-friendly features, great for projects where not everyone is comfortable with the command line.

- Axolotl — Built on top of Hugging Face, well-suited for PEFT techniques and scaling workflows.

- AWS SageMaker / Google Vertex AI — Cloud-managed options with automatic scaling, ideal for production deployments.

Wrapping Up

Fine-tuning LLMs is no longer just a research lab privilege; it’s a practical, accessible skill that developers, data scientists, and AI practitioners can apply today. Whether you’re building a healthcare assistant that understands clinical language, a legal tool that speaks in precise terminology, or an ed-tech platform that adapts to a learner’s needs, fine-tuning is what bridges the gap between a general-purpose model and one that actually works for you.

The process demands patience, clean data, thoughtful evaluation, and iterative refinement, but the payoff is a model that feels purpose-built rather than borrowed. As the tools get more accessible and the techniques more efficient, there’s never been a better time to move beyond prompting and start truly owning your AI.

FAQs

1. What is the difference between fine-tuning and prompt engineering?

Prompt engineering tweaks how you ask the model something without changing the model itself, while fine-tuning actually updates the model’s weights through additional training. Fine-tuning is the better choice when you need consistent, domain-specific behavior rather than a one-time output adjustment.

2. How much data do I need to fine-tune an LLM?

There’s no fixed number, but quality always wins over quantity — even 1,000 well-curated, domain-specific examples can outperform 10,000 noisy ones. Starting small, evaluating results, and scaling your dataset iteratively is the most practical approach.

3. Is fine-tuning expensive? What hardware do I need?

Full fine-tuning is resource-heavy and typically requires multiple high-end GPUs, but techniques like QLoRA have made it accessible enough to run on a single consumer GPU. The cost depends significantly on model size, the method you choose, and whether you use cloud platforms like AWS or Google Vertex AI.

4. What is LoRA and why is everyone using it?

LoRA (Low-Rank Adaptation) is a parameter-efficient fine-tuning technique that freezes the base model’s weights and trains only small adapter layers, drastically reducing memory and compute needs. It’s become the go-to method because it delivers performance close to full fine-tuning at a fraction of the cost.

5. Can fine-tuning cause a model to “forget” what it already knows?

Yes — this is called catastrophic forgetting, where intensive training on a narrow task erodes the model’s broader general knowledge. You can minimize it by using a lower learning rate, opting for LoRA instead of full fine-tuning, and mixing some general instruction data into your training set.
